I Crawled 1,500 Websites: 30% Block AI Bots, Only 0.2% Use llms.txt

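An analysis of 1,500 websites found that 30% actively block AI bots through robots.txt, while only a tiny fraction (0.2%) prepare for AI search with an llms.txt file, revealing a significant "AI Readability Gap."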

1,500-Site Audit: The 6 Critical Errors Blocking Your AI Citations

Case Study: The State of AI Readability (Analysis of 1,500 Websites)

The AI Readability Gap is the divergence between how a website appears to a human user (visually rich) and how it appears to an AI agent (structurally empty). Over the past month, we conducted a forensic audit of 1,500 active websites using the Website AI Score engine. The goal was to determine whether modern web infrastructure is ready for the era of Answer Engine Optimization (AEO).

The results were alarming. While the industry obsesses over Google core updates, our data shows that most websites are structurally invisible to the new wave of AI search.

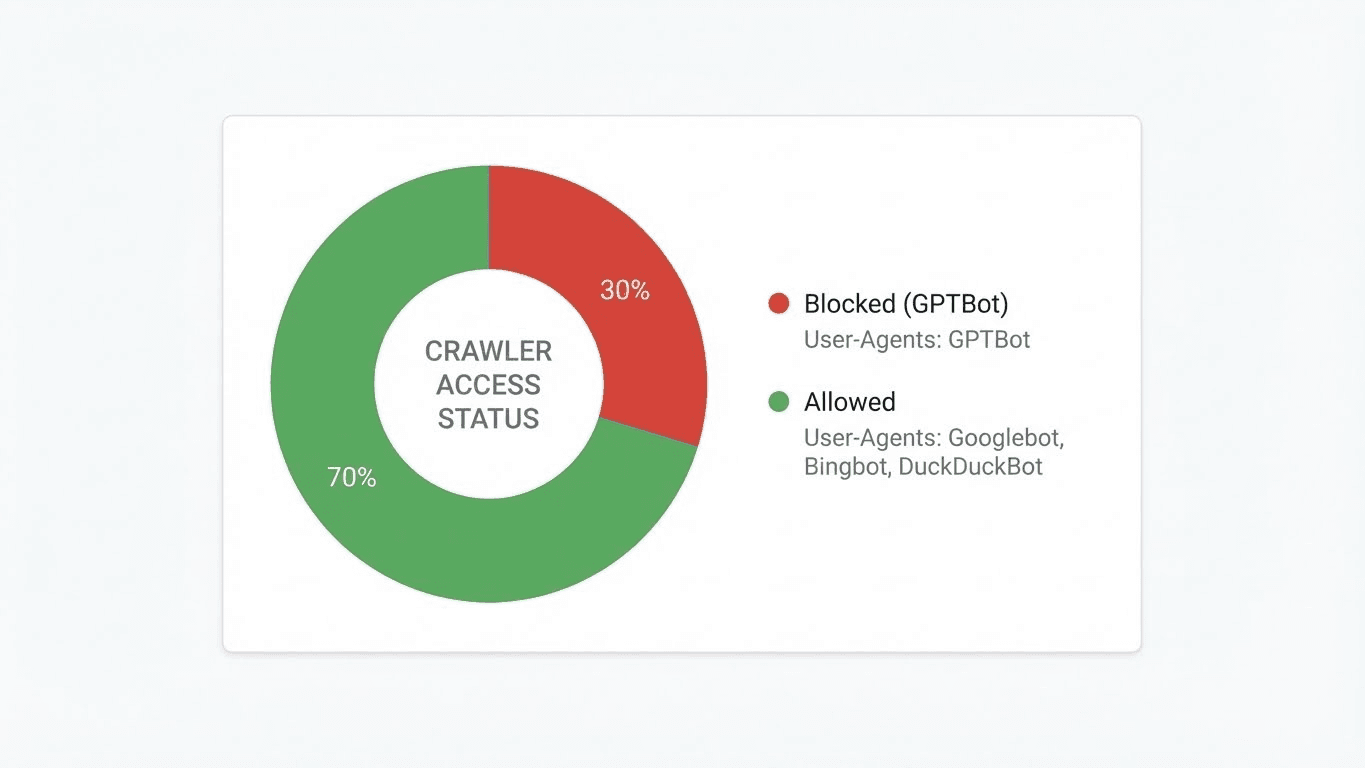

Finding #1: The Accidental Blockade (30% Failure Rate)

We began by checking the "Front Door" of the AI web: robots.txt. To our surprise, 30% of the sites scanned were actively blocking AI bots.

Solution: Implement a Strategic Robots.txt Protocol that distinguishes between "Search Bots" (Allowed) and "Training Bots" (Blocked).
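A minimal sketch of such a policy, using Python's standard-library `urllib.robotparser` to confirm which crawlers a given robots.txt admits. The split into training and search crawlers is an assumption for illustration only: `GPTBot`, `CCBot` and `Google-Extended` are treated as training tokens, and `OAI-SearchBot` and `PerplexityBot` as search crawlers. Check each vendor's current crawler documentation before relying on these names.

```python
from urllib.robotparser import RobotFileParser

# Assumed classification for illustration; verify against each vendor's docs.
TRAINING_BOTS = ["GPTBot", "CCBot", "Google-Extended"]
SEARCH_BOTS = ["OAI-SearchBot", "PerplexityBot"]


def build_robots_txt(training_bots, search_bots):
    """Block training crawlers, allow search crawlers, allow everyone else."""
    lines = []
    for bot in training_bots:
        lines += [f"User-agent: {bot}", "Disallow: /", ""]
    for bot in search_bots:
        lines += [f"User-agent: {bot}", "Allow: /", ""]
    lines += ["User-agent: *", "Allow: /"]
    return "\n".join(lines) + "\n"


def audit_robots_txt(robots_txt, agents, url="https://example.com/"):
    """Return {user_agent: may_fetch_url} according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    policy = build_robots_txt(TRAINING_BOTS, SEARCH_BOTS)
    print(policy)
    print(audit_robots_txt(policy, TRAINING_BOTS + SEARCH_BOTS))
    # A blanket rule like this one also shuts out AI search crawlers.
    blanket = "User-agent: *\nDisallow: /\n"
    print(audit_robots_txt(blanket, SEARCH_BOTS))
```

Python's `RobotFileParser` applies the first matching rule in file order rather than the longest-match rule of RFC 9309, so it is best suited to auditing simple per-bot groups like these.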

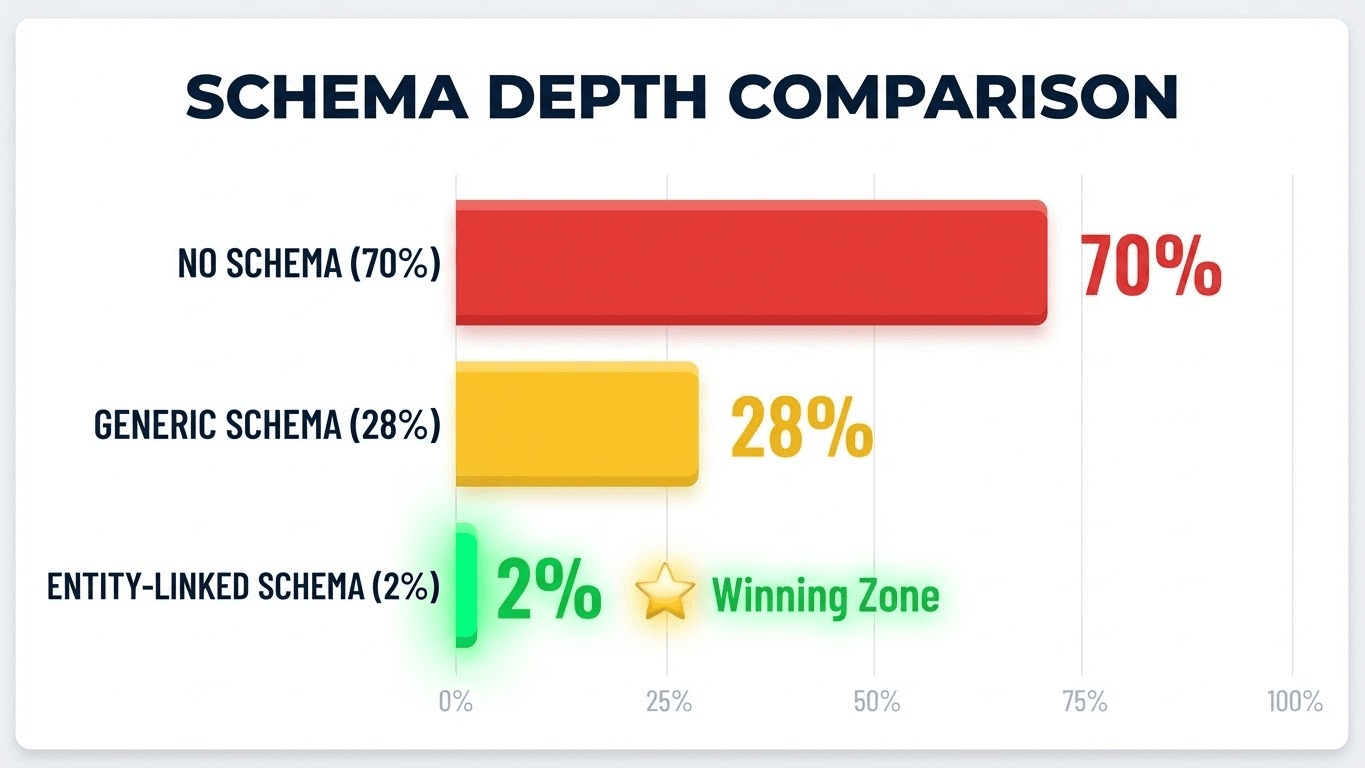

Finding #2: The Schema Void (70% Failure Rate)

Structured Data is the language of AI. Yet, our scan revealed a massive "Semantic Void."

The Impact: Without Schema, LLMs struggle to connect your Brand Name to your Industry. You remain a "String" rather than an "Entity." This is why Knowledge Graph Validation is the single biggest opportunity for immediate AEO lift.
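One common way to turn a brand "String" into an "Entity" is to embed Schema.org markup as JSON-LD. The sketch below builds a minimal `Organization` node in Python. The company name, URLs and topics are placeholders, and using `sameAs` and `knowsAbout` as the linking properties is an assumption for illustration, not the method used in this study.

```python
import json


def organization_jsonld(name, url, same_as, topics, description=""):
    """Build a minimal Schema.org Organization node wrapped in a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Profiles elsewhere on the web that refer to the same entity.
        "sameAs": same_as,
        # Topics the organization is associated with (its industry or expertise).
        "knowsAbout": topics,
    }
    if description:
        data["description"] = description
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(organization_jsonld(
        name="Example Analytics Ltd",
        url="https://www.example.com",
        same_as=["https://www.linkedin.com/company/example"],
        topics=["Answer Engine Optimization", "Structured data"],
    ))
```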

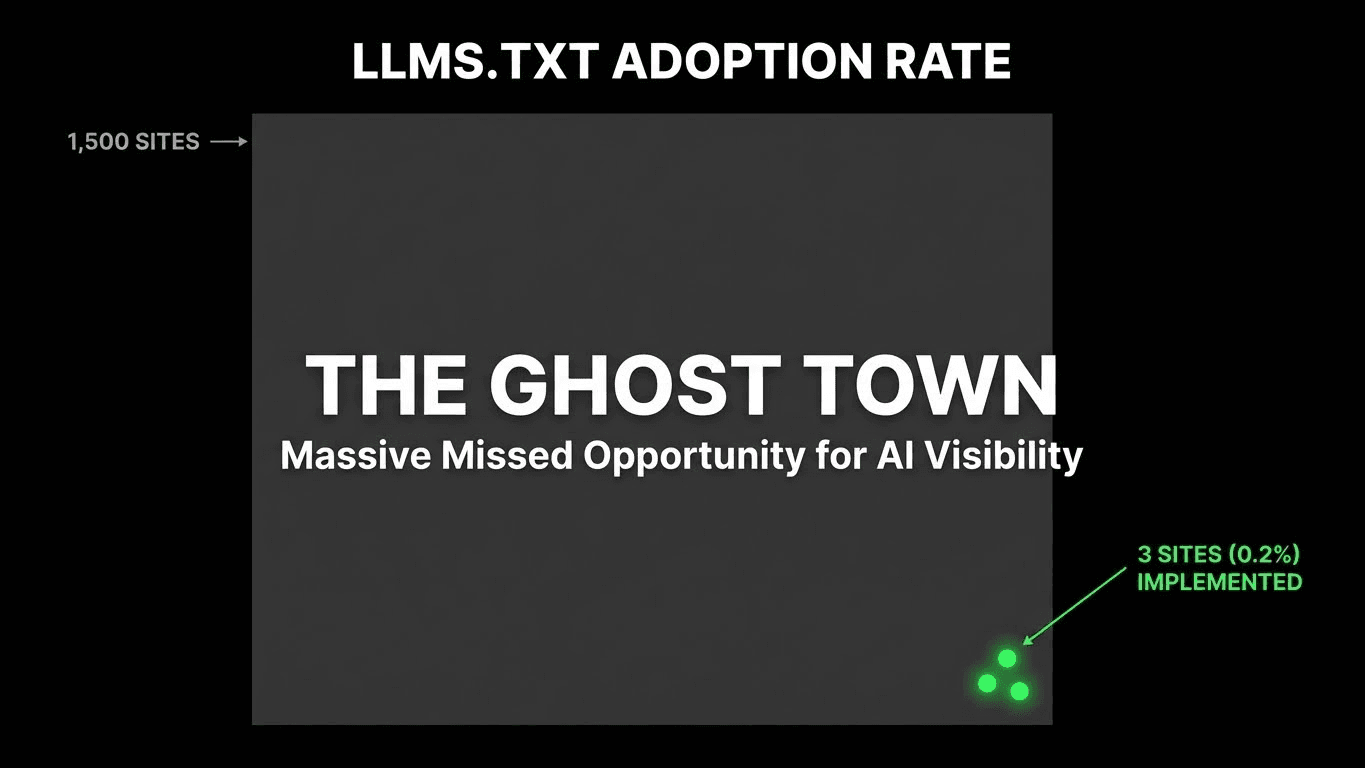

Finding #3: The llms.txt Ghost Town (0.2% Adoption)

The llms.txt file is the new sitemap.xml. It acts as a "Cheat Sheet" for AI agents, pointing them directly to your most valuable markdown content.

Solution: Deploy the llms.txt standard immediately to gain a "First Mover" advantage.
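A minimal generator for such a file, assuming the layout proposed at llmstxt.org as we understand it: an H1 title, a blockquote summary, then H2 sections listing Markdown links with optional notes (a section named "Optional" marks lower-priority links). The site name and URLs below are hypothetical.

```python
def build_llms_txt(title, summary, sections):
    """Render an llms.txt file: H1 title, blockquote summary, H2 link sections.

    `sections` maps a section heading to a list of (link_text, url, note) tuples;
    an empty note omits the ": note" suffix.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for text, url, note in links:
            entry = f"- [{text}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical site; the result would be served at the site root as /llms.txt.
    print(build_llms_txt(
        "Example Analytics",
        "Guides and tools for making websites readable by AI agents.",
        {
            "Docs": [
                ("Getting started", "https://www.example.com/docs/start.md", "Setup in five minutes"),
            ],
            "Optional": [
                ("Blog archive", "https://www.example.com/blog.md", ""),
            ],
        },
    ))
```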

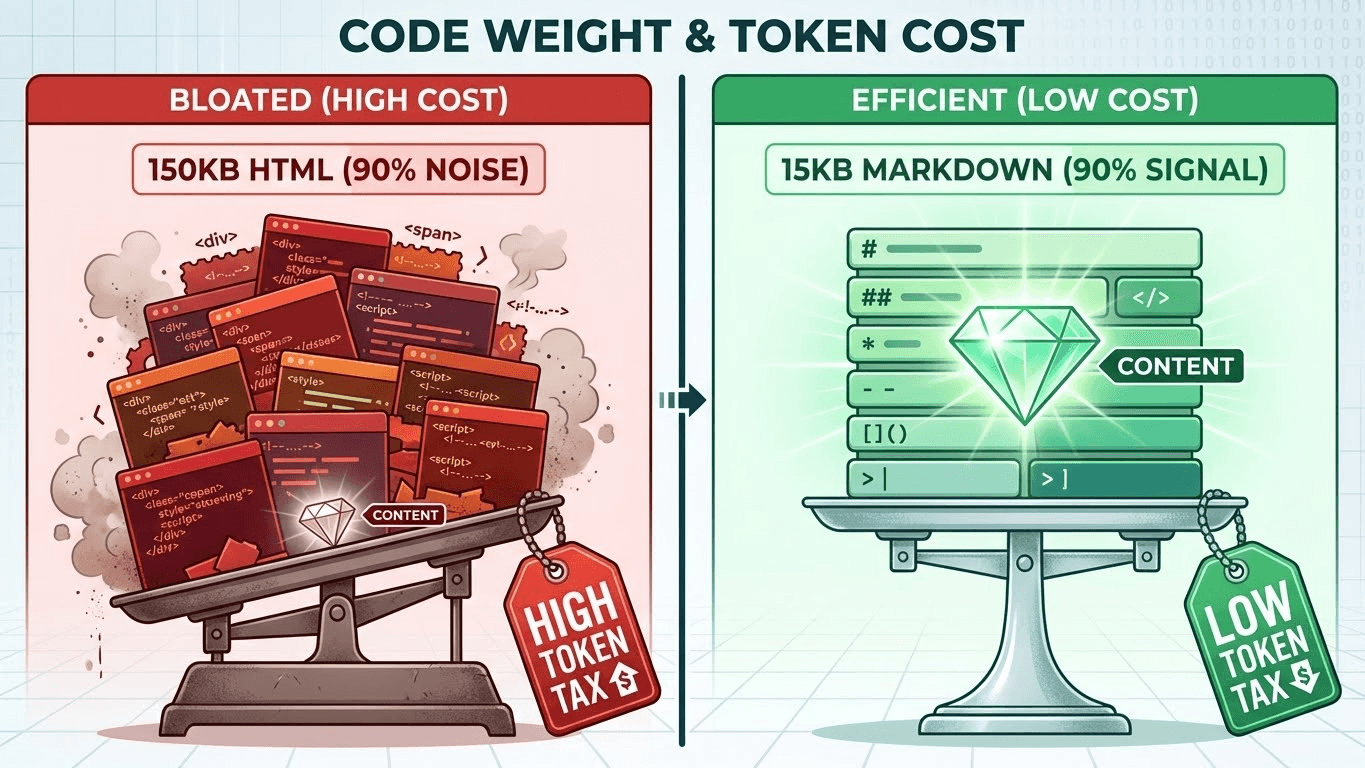

Finding #4: The Token Budget Disaster (High "Cost to Read")

We analyzed the Signal-to-Noise Ratio of the HTML source code. LLMs operate on "Token Budgets." If your page is expensive to read, they skip it.

Solution: Audit your Token Efficiency and strip non-semantic HTML for bot user-agents.
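The study does not publish its exact metric, so the sketch below uses a simple proxy for signal-to-noise: the share of an HTML document's characters that are visible text, ignoring the contents of `<script>`, `<style>`, `<noscript>`, `<svg>` and `<template>`. Counting characters instead of tokens is an assumption; a true token count would need a specific model's tokenizer.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes, skipping elements whose content is not readable prose."""

    SKIPPED = {"script", "style", "noscript", "svg", "template"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def visible_text(html):
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    # Collapse whitespace runs so indentation does not count as "signal".
    return " ".join(" ".join(parser.chunks).split())


def signal_to_noise(html):
    """Fraction of the raw HTML, by character count, that is visible text."""
    return len(visible_text(html)) / len(html) if html else 0.0
```

A page padded with nested wrapper `<div>`s and inline scripts scores far lower than the same text in lean semantic markup, which is the gap the "cost to read" argument points at.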

Finding #5: The JavaScript Trap (40% Risk)

Modern web development loves Client-Side Rendering (CSR). AI crawlers hate it.

Solution: Perform an Empty Shell Audit to ensure your core content is present in the raw HTML response, before any JavaScript hydration runs.
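A rough sketch of an Empty Shell Audit, assuming that a crawler which does not execute JavaScript sees only the server's raw response. It fetches the HTML without running scripts, drops `<script>`/`<style>` bodies and tags, and reports which expected phrases are missing. The regex-based stripping and the `EmptyShellAudit/0.1` user-agent string are simplifications chosen for illustration, not a full HTML parser or a real crawler identity.

```python
import html as html_lib
import re
from urllib.request import Request, urlopen


def fetch_raw_html(url, timeout=10):
    """Download the server response as-is; no JavaScript is executed."""
    req = Request(url, headers={"User-Agent": "EmptyShellAudit/0.1 (hypothetical)"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


def missing_phrases(raw_html, expected_phrases):
    """Return the expected phrases that do not appear in the pre-hydration text."""
    # Text that exists only inside JavaScript is not visible before hydration.
    text = re.sub(r"<(script|style)\b[^>]*>.*?</(script|style)\s*>", " ", raw_html,
                  flags=re.IGNORECASE | re.DOTALL)
    text = re.sub(r"<[^>]+>", " ", text)  # strip the remaining tags
    text = " ".join(html_lib.unescape(text).split()).lower()
    return [p for p in expected_phrases if " ".join(p.split()).lower() not in text]


if __name__ == "__main__":
    csr_shell = '<div id="root"></div><script>render("Pricing plans")</script>'
    print(missing_phrases(csr_shell, ["Pricing plans"]))  # prints ['Pricing plans']
    # For a live page: missing_phrases(fetch_raw_html("https://www.example.com/"), [...])
```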

Finding #6: Hierarchy Abuse

Finally, we looked at Semantic HTML structure (<h1> through <h6>).
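The article does not define which heading patterns count as abuse, so the sketch below checks two widely used heuristics as an assumption: exactly one `<h1>` per page, and no skipped levels (for example an `<h2>` followed directly by an `<h4>`). Multiple `<h1>` elements are valid HTML; the check flags them only as a readability signal.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level (1-6) of every <h1>..<h6> start tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lower-cases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Flag a missing or repeated <h1> and any skipped heading levels."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count == 0:
        issues.append("no <h1>")
    elif h1_count > 1:
        issues.append(f"{h1_count} <h1> elements")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"skipped level: h{prev} -> h{cur}")
    return issues


if __name__ == "__main__":
    page = "<h1>Guide</h1><h1>Pricing</h1><h4>FAQ</h4>"
    print(heading_issues(page))  # prints ['2 <h1> elements', 'skipped level: h1 -> h4']
```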

Conclusion: The "Invisible" Web

The data from this 1,500-site audit paints a clear picture: the web is currently optimized for browsers, not agents.

We are entering a new phase of search where "Visuals" matter less and "Structure" matters more. The sites that fix these six issues (robots.txt, Schema, llms.txt, token density, rendering, and semantic hierarchy) will be the ones cited by the next generation of AI models.

Verification: All data in this study was gathered using Website AI Score, a specialized engine built to test these exact AEO metrics. You can verify your own site's status in the beta today.

Audited by Hristo Stanchev

Founder & GEO Specialist

Website AI Score

AI-powered analytics and optimization for modern marketers.

© 2025 Website AI Score. All rights reserved.
