OptiMind: A small language model with optimization expertise

Microsoft Research introduces OptiMind, a small language model with optimization expertise. The model is designed to help enterprises plan complex operations such as supply chains and logistics.

Microsoft Research Blog

Published January 15, 2026

By Xinzhi Zhang, Research Intern; Zeyi Chen, Research Intern; Humishka Hope, Research Intern; Hugo Barbalho, Research SDE; Konstantina Mellou, Principal Researcher; Marco Molinaro, Principal Researcher; Janardhan (Jana) Kulkarni, Principal Researcher; Ishai Menache, Partner Research Manager; Sirui Li, Sr. Research SDE


At a glance

Enterprises across industries, from energy to finance, use optimization models to plan complex operations like supply chains and logistics. These models work by defining three elements: the choices that can be made (such as production quantities or delivery routes), the rules and limits those choices must follow, and the goal, whether that’s minimizing costs, meeting customer demand, or improving efficiency.
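The three elements can be made concrete with a toy example. The sketch below is only an illustration: the products, profits, and limits are made-up numbers, and it enumerates every option by brute force, which real optimization software does not need to do.

```python
from itertools import product

# Hypothetical data: two products sharing one machine (all numbers are made up).
PROFIT = {"chairs": 30, "tables": 50}      # profit per unit
HOURS = {"chairs": 1, "tables": 2}         # machine hours per unit
MAX_HOURS = 8                              # machine hours available
MAX_UNITS = {"chairs": 6, "tables": 3}     # most units that can be sold


def is_feasible(plan):
    """Rules and limits: total machine time must fit in the available hours."""
    return sum(HOURS[p] * qty for p, qty in plan.items()) <= MAX_HOURS


def objective(plan):
    """Goal: total profit, to be maximized."""
    return sum(PROFIT[p] * qty for p, qty in plan.items())


def best_plan():
    """Choices: try every combination of quantities, keep the best feasible one."""
    names = list(PROFIT)
    ranges = [range(MAX_UNITS[n] + 1) for n in names]
    candidates = (dict(zip(names, qtys)) for qtys in product(*ranges))
    return max((c for c in candidates if is_feasible(c)), key=objective)


if __name__ == "__main__":
    plan = best_plan()
    print(plan, objective(plan))
```

Enumeration only works here because the problem is tiny. The point is the separation into choices, constraints, and objective, which is the same structure a solver expects.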

Over the past few decades, many businesses have shifted from judgment-based decision-making to data-driven approaches, leading to major efficiency gains and cost savings. Advances in AI promise to accelerate this shift even further, potentially cutting decision times from days to minutes while delivering better results.

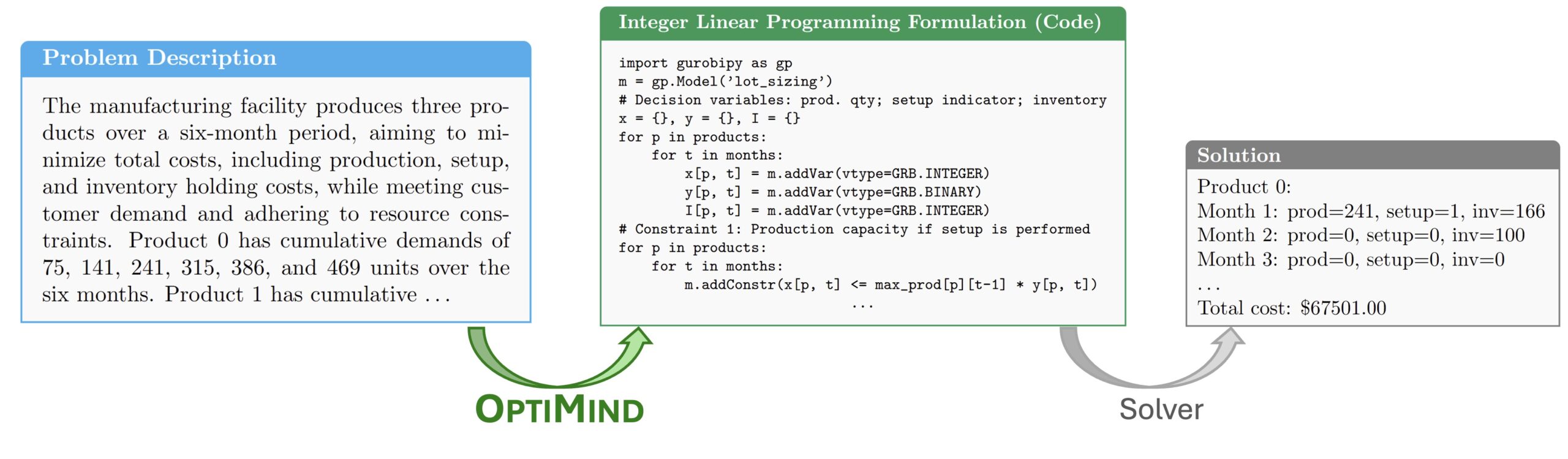

In practice, however, turning real-world business problems into a form that optimization software can understand is challenging. This translation process requires expressing decisions, constraints, and objectives in mathematical terms. The work demands specialized expertise, and it can take anywhere from one day to several weeks to solve complex problems.
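As an illustration of what this translation produces, consider a plain-language request such as "ship goods from our warehouses to our stores as cheaply as possible, without exceeding any warehouse's stock and while meeting every store's demand." One standard way to write it mathematically is the classic transportation model below. The symbols are introduced here for illustration and do not come from the article: $x_{ij}$ is the amount shipped from warehouse $i$ to store $j$, $c_{ij}$ the unit shipping cost, $s_i$ the stock at warehouse $i$, and $d_j$ the demand at store $j$.

```latex
\begin{aligned}
\min_{x} \quad & \sum_{i} \sum_{j} c_{ij}\, x_{ij} \\
\text{s.t.} \quad & \sum_{j} x_{ij} \le s_i \quad \text{for every warehouse } i, \\
& \sum_{i} x_{ij} \ge d_j \quad \text{for every store } j, \\
& x_{ij} \ge 0 \quad \text{for all } i, j.
\end{aligned}
```

The decisions are the $x_{ij}$, the constraints are the stock and demand inequalities, and the objective is the total shipping cost. Real business problems add many more rules, which is where most of the formulation effort goes.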

To address this challenge, we’re introducing OptiMind, a small language model designed to convert problems described in plain language into the mathematical formulations that optimization software needs. Built on a 20-billion-parameter model, OptiMind is compact by today’s standards yet matches the performance of larger, more complex systems. Its modest size means it can run locally on users’ devices, enabling fast iteration while keeping sensitive business data local rather than transmitting it to external servers.


How it works

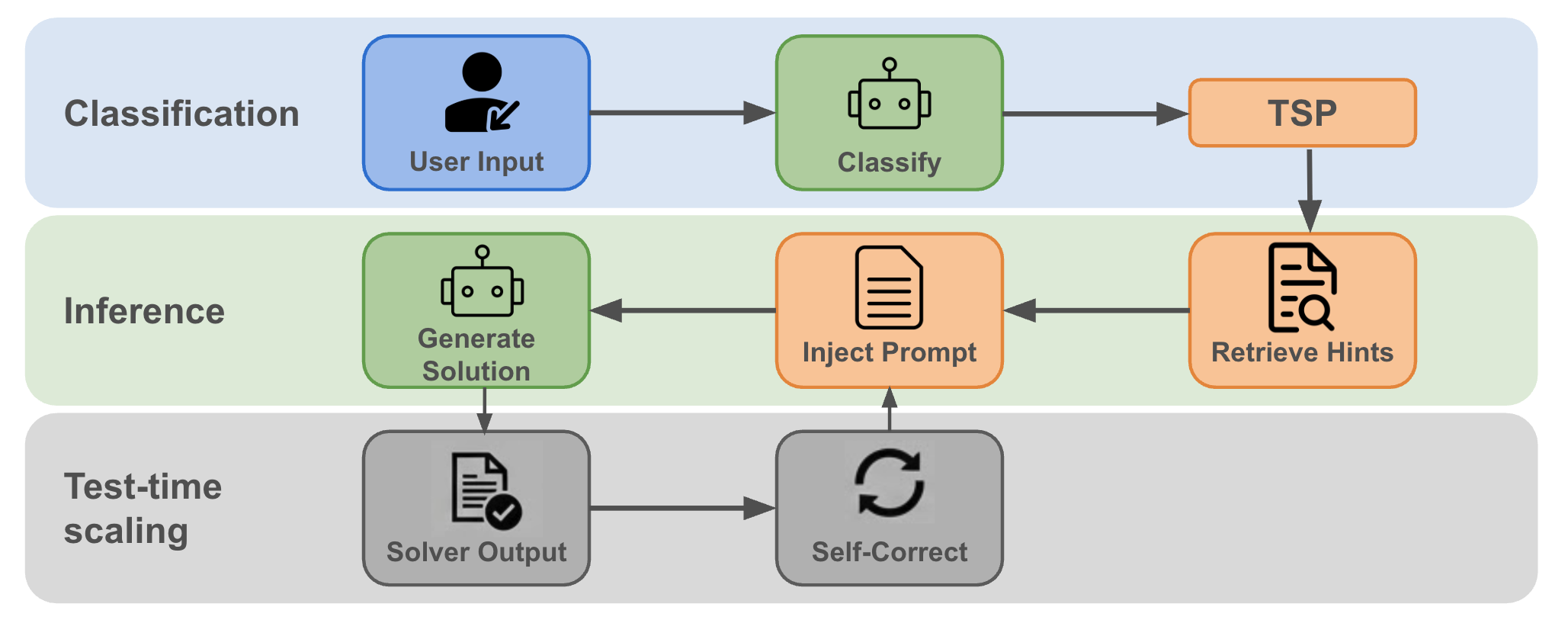

OptiMind incorporates knowledge from optimization experts both during training and at inference time to improve formulation accuracy at scale. Three stages enable this: domain-specific hints improve training data quality, the model undergoes fine-tuning, and expert reasoning guides the model as it works.

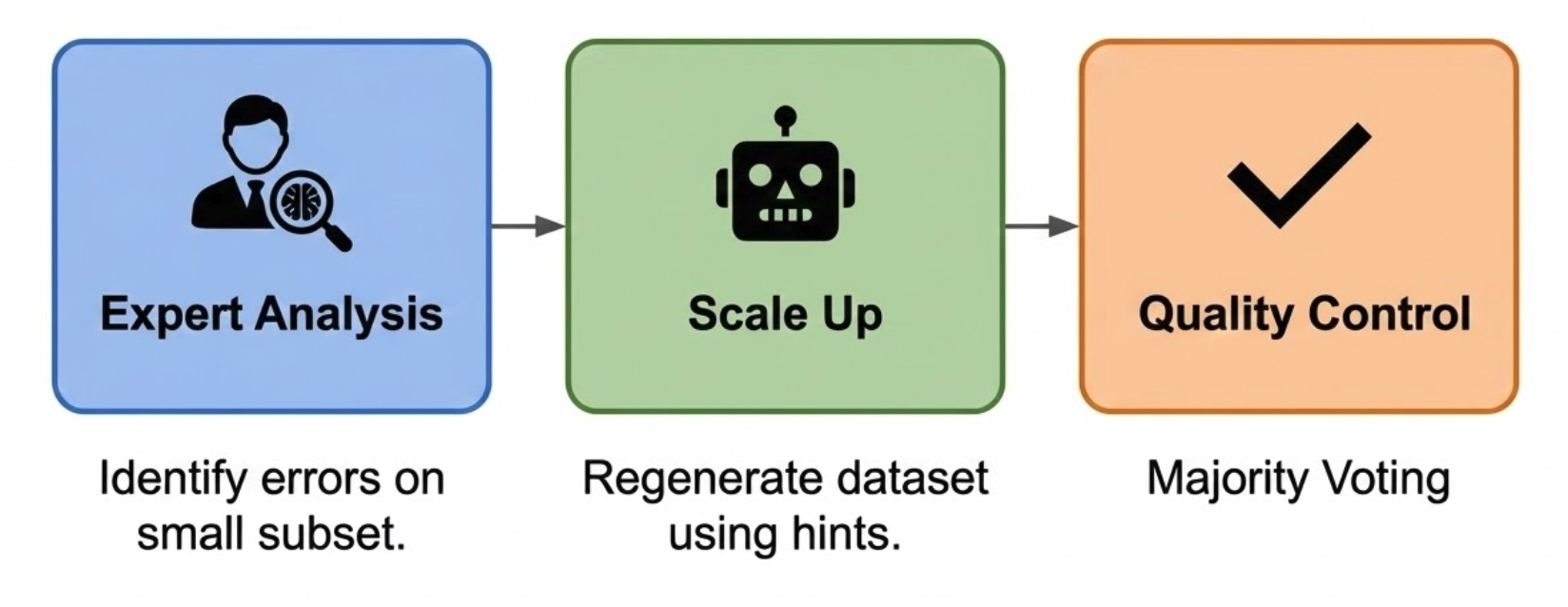

One of the central challenges in developing OptiMind was the poor quality of existing public datasets for optimization problems. Many examples were incomplete or contained incorrect solutions. To address this, we developed a systematic approach that combines automation with expert review. It organizes problems into well-known categories, such as scheduling or routing, and identifies common error patterns within each. Using these insights, we generated expert-verified “hints” to guide the process, enabling the system to regenerate higher-quality solutions and filter out unsolvable examples (Figure 2). The result is a training dataset that more accurately reflects how optimization experts structure problems.
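A rough sketch of the filtering idea follows. It is not the pipeline described above: the record layout, the agreement threshold, and the rule of relabeling with the most common regenerated answer are all assumptions made for illustration. Each example is assumed to carry several answers that were regenerated with hints, with `None` marking a run that found no solution.

```python
from collections import Counter


def clean(examples, min_agreement=0.6):
    """Keep examples whose regenerated answers mostly agree; drop the rest.

    Each example is a dict with hypothetical keys:
      "question", "category", "answer" (original label, possibly wrong) and
      "regenerated" (answers re-derived with hints; None = no solution found).
    """
    kept = []
    for ex in examples:
        runs = ex["regenerated"]
        solved = [a for a in runs if a is not None]
        if not solved:
            continue  # no run produced a solution: treat as unsolvable
        value, count = Counter(solved).most_common(1)[0]
        if count / len(runs) >= min_agreement:
            # Assumed rule: trust the answer most regenerated runs agree on.
            kept.append({**ex, "answer": value})
    return kept


if __name__ == "__main__":
    data = [
        {"question": "q1", "category": "scheduling", "answer": 11.0,
         "regenerated": [10.0, 10.0, 12.0]},
        {"question": "q2", "category": "routing", "answer": 5.0,
         "regenerated": [None, None, None]},
    ]
    for ex in clean(data):
        print(ex["question"], ex["answer"])
```

In this sketch, disagreement between runs is used as a cheap signal that an example is ambiguous or mislabeled. The expert review step described above has no equivalent in code.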

Using this refined dataset, we applied supervised fine-tuning to the base model. Rather than simply generating code, we trained OptiMind to produce structured mathematical formulations alongside intermediate reasoning steps, helping it avoid the common mistakes found in earlier datasets.

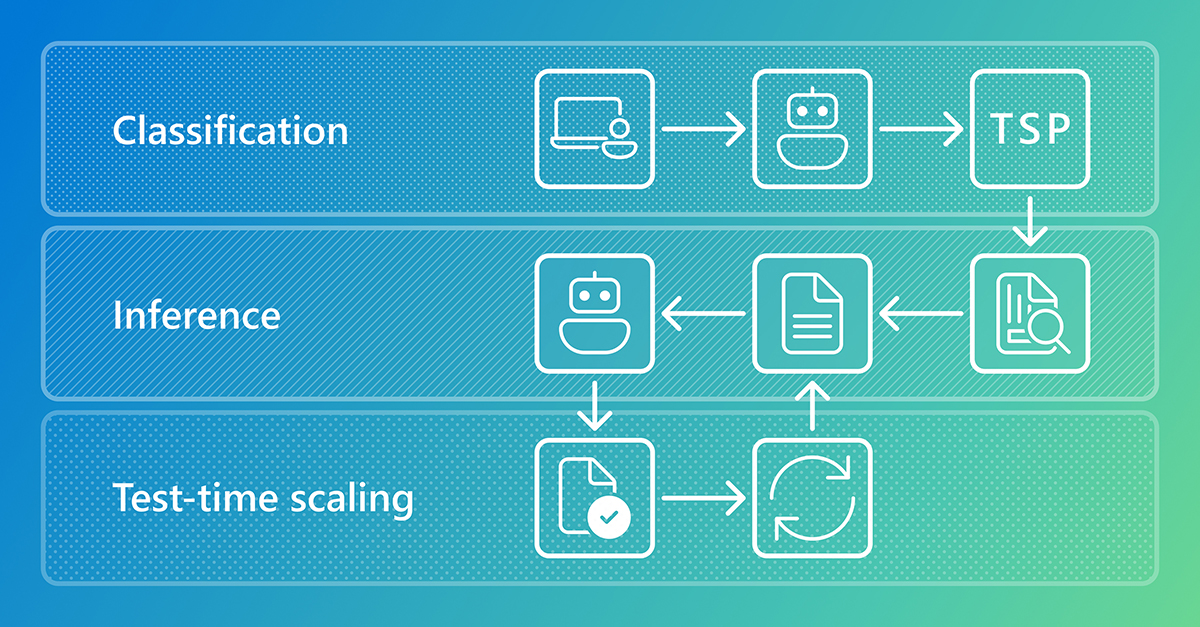

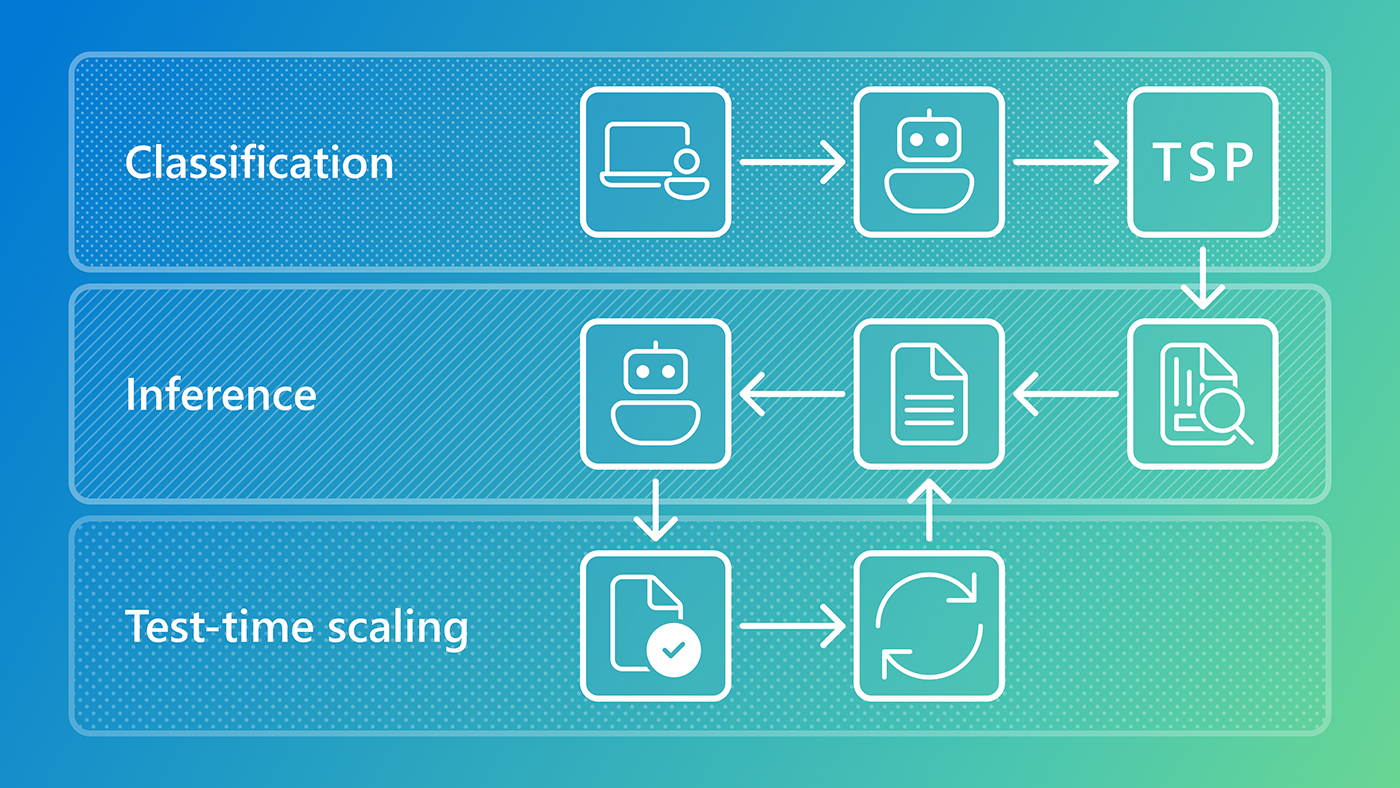

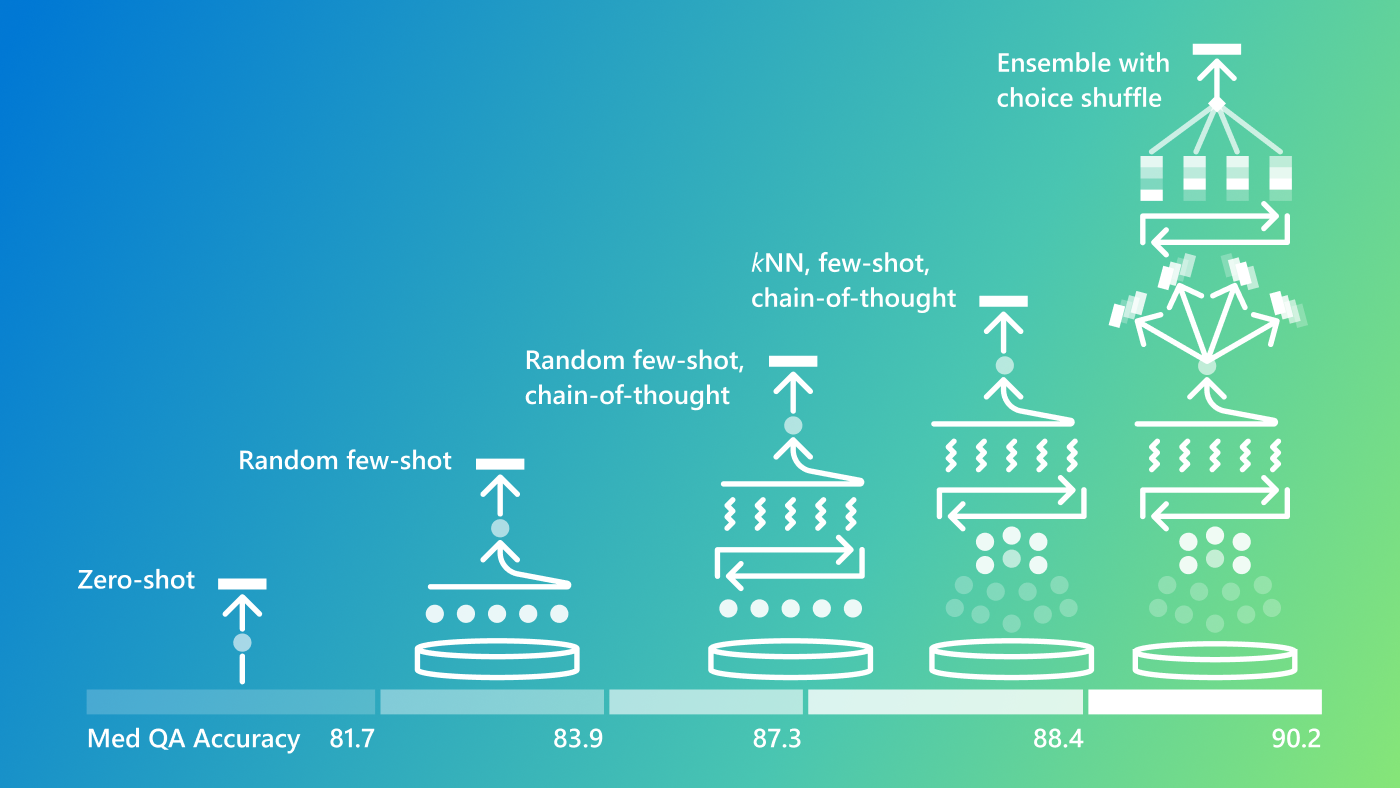

The model’s reliability improves further at inference time. When given a new problem, OptiMind first classifies it into a category, such as scheduling or network design. It then applies expert hints relevant to that type of problem, which act as reminders to check for errors before generating a solution. For particularly challenging problems, the system generates multiple solutions and either selects the most frequently occurring one or uses feedback to refine its response. This approach increases accuracy without requiring a larger model, as illustrated in Figure 3.
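The classify, hint, and vote steps can be sketched as follows. This is a minimal illustration under stated assumptions, not OptiMind's implementation: the keyword classifier stands in for the model's own classification, the hint texts are invented, and `generate` is a hypothetical caller-supplied function that turns a prompt into an objective value (or `None` if the run fails). Only the majority-vote option is shown, not the feedback-based refinement.

```python
from collections import Counter

# Hypothetical hints; the expert-written hints used by OptiMind are not shown here.
HINTS = {
    "scheduling": "Check that no worker is assigned two overlapping shifts.",
    "routing": "Check that every route returns to its starting depot.",
}


def classify(problem):
    """Keyword stand-in for the classification step (an assumption of this sketch)."""
    text = problem.lower()
    if "shift" in text or "schedule" in text:
        return "scheduling"
    if "route" in text or "deliver" in text:
        return "routing"
    return "general"


def build_prompt(problem):
    """Prepend the hint for the problem's category, if one exists."""
    hint = HINTS.get(classify(problem))
    return f"Hint: {hint}\n\n{problem}" if hint else problem


def majority_vote(values, ndigits=4):
    """Return the most frequent objective value, ignoring failed runs (None)."""
    rounded = [round(v, ndigits) for v in values if v is not None]
    if not rounded:
        return None
    return Counter(rounded).most_common(1)[0][0]


def solve(problem, generate, k=5):
    """Sample k candidate answers and keep the one that occurs most often."""
    prompt = build_prompt(problem)
    return majority_vote([generate(prompt) for _ in range(k)])
```

Rounding before counting lets answers that differ only by floating-point noise count as the same vote. The number of samples `k` and the rounding precision are tuning choices, not values taken from the article.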

Evaluation

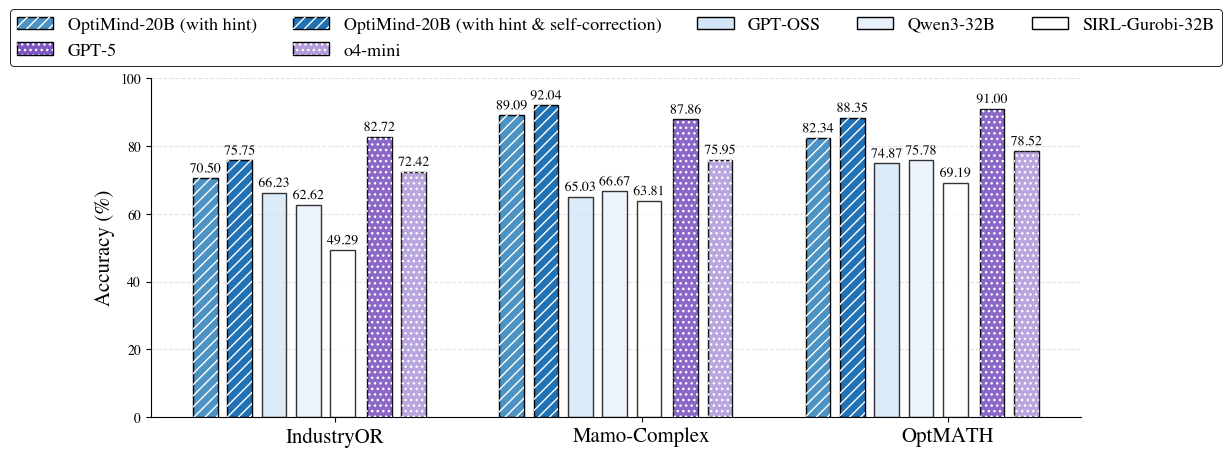

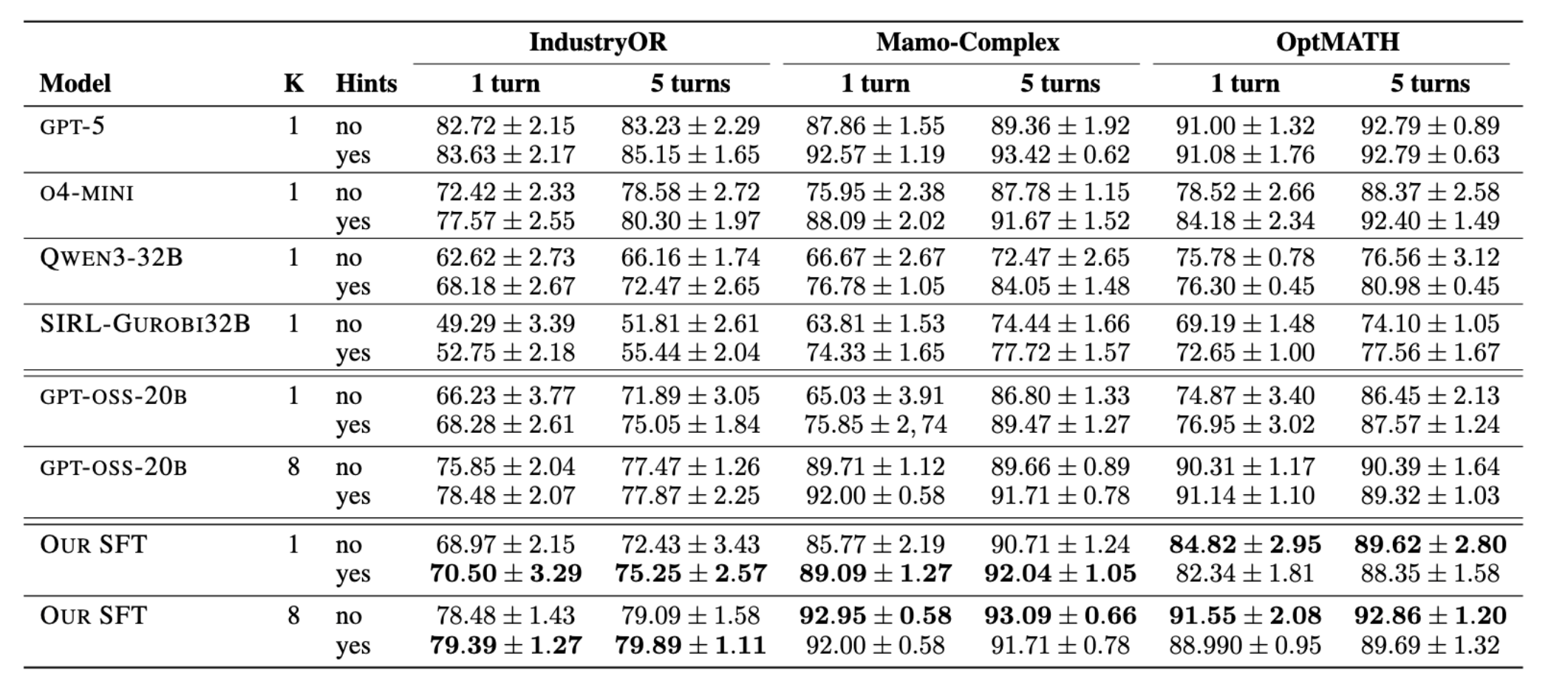

To test the system, we turned to three widely used public benchmarks that represent some of the most complex formulation tasks in the field. On closer inspection, we discovered that 30 to 50 percent of the original test data was flawed. After manually correcting the issues, OptiMind improved accuracy by approximately 10 percent over the base model. Figure 4 and Table 1 show detailed comparisons: OptiMind outperformed other open-source models under 32 billion parameters and, when combined with expert hints and correction strategies, matched or exceeded the performance of current leading models.
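For readers who want to reproduce this kind of comparison, a scoring function might look like the sketch below. The scoring rule is an assumption: a prediction counts as correct when the optimal objective value obtained from the generated formulation matches the reference value within a small tolerance. The article does not specify the rule or tolerance used, so the values here are placeholders.

```python
import math


def is_correct(predicted, reference, rel_tol=1e-6):
    """True if the predicted objective value matches the reference within tolerance.

    A prediction of None (no formulation, or the solver failed) counts as wrong.
    """
    if predicted is None:
        return False
    return math.isclose(predicted, reference, rel_tol=rel_tol, abs_tol=1e-9)


def accuracy(predictions, references, rel_tol=1e-6):
    """Fraction of problems whose predicted objective matches the reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(is_correct(p, r, rel_tol) for p, r in zip(predictions, references))
    return hits / len(references)


if __name__ == "__main__":
    print(accuracy([10.0, None, 3.0], [10.0, 5.0, 4.0]))
```

Under this rule, a flawed reference answer marks a correct formulation as wrong, so corrections to the test data change the measured accuracy directly.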

OptiMind is more reliable than other models because it learns from higher-quality, domain-aligned data. And by correcting errors and inconsistencies in standard datasets, we significantly reduced the model’s tendency to hallucinate relative to the base and comparison models.

Looking forward

While supervised fine-tuning has provided a strong foundation, we are exploring reinforcement learning to further refine OptiMind’s reasoning capabilities. We’re also investigating automated frameworks that would allow LLMs to generate their own expert hints, enabling continuous autonomous improvement. Additionally, we are working with Microsoft product teams and industry collaborators to expand OptiMind’s utility, adding support for more programming languages and a variety of input formats, including Excel and other widely used tools.

We’re releasing OptiMind as an experimental model to gather community feedback and inform future development. The model is available through Microsoft Foundry and Hugging Face, and we’ve open-sourced the benchmarks and data-processing procedures on GitHub to support more reliable evaluation across the field. We welcome feedback through GitHub and invite those interested in shaping the future of optimization to apply for one of our open roles.

Related publications

OptiMind: Teaching LLMs to Think Like Optimization Experts

