Death by AI Gibberish

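The author is disillusioned with the current discussion around AI and AGI, criticizing the trend of deifying future AI and of dodging present challenges with vague promises of technological salvation, and argues that this discussion looks more like religion than science.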

Elocination

Death by AI gibberish

How we're speaking about AGI sounds more like religion than it does science. At least the Garden of Eden was beautiful.

I was once fascinated by machine learning. I read textbooks on it, studied applied statistics, and dove into alignment books. When AlphaFold came out, I was mindblown.

But as of late, any passion I had for it has been utterly nuked. I cannot stand the deflection of every problem known to man onto some vague future machine god. Choirs of ‘It’s so over’ hiding behind anime profile pictures imitate each other in an endless chain of dominoes, drowning out any semblance of rational discussion. Every word spoken by Lord Altman is seen not as a sales ploy, but as a prophecy.

What I can't shake is the contrast between my feeling of awe at the mysteries of the universe and this wildly unsatisfying ‘answer’ to it all. AGI will just solve it, they say! It will ‘self-improve’.

I am unsure if I have the reflexive run-away-from-hype gene or if everyone is actually mad. Something about the quality of the discussion around AI/AGI is so off-putting, so intellectually unsatisfying, that I reflexively want to disengage. I notice that same uneasy look on people's faces when they talk about the singularity, an empty stare preceding a nervous swallow. Something is off.

As each new benchmark is smashed through, another expert doubter is sacrificed. Hordes more are then converted to this nebulous future when everything is solved, but no one can articulate how. You couldn't possibly doubt it when all the doubters are progressively 'wrong', right? Well, I’m saying it: this is a dangerous form of non-thinking. Skepticism was the wellspring of the Enlightenment, yet faith in AI development is looking more like religion than science.

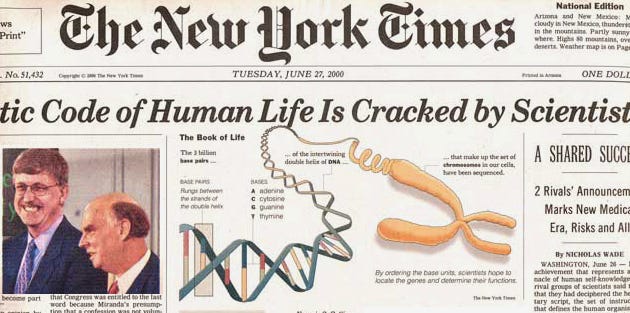

Was there ever a time in history when people were at such a consensus that a new technology would be the final frontier, and they were right? The Human Genome Project showed us the opposite. We discovered biology was not so simple. Twenty years on, and we're still largely in the dark. Every new discovery seems to reveal a new unknown, and I don’t think it’s unreasonable to suggest that LLMs will be the same.

What's most bothersome is the handwaving. The nature of reality, consciousness, and the intricate self-organizing machines our thought bubbles inhabit will all just be solved by AGI. How convenient! Do any of you actually find this satisfying? Enough to resign yourselves to it?

I cannot see how these claims differ from claiming we'll find a wormhole. Sure, mathematics allows for it, but when will we find it?

And what of the energy problem? We know we haven't solved biology with AI yet if they're taking orders of magnitude more energy and data to do what we do on the smell of an oily rag. Maybe we will replicate something like us in silicon with 1000x more energy, and it will do our jobs well enough if we can make that energy free of cost. But look, it’s not answering any of life’s mysteries for me. I’m still grasping to understand how the hell this all works! What the hell is on the outside of this thing we’re inside of?

Something feels logically incoherent about the AI machine lord emerging from silicon. All the information we have created in history is filtered through our own minds. These minds, we are almost sure, lack the ability to sense all elements of reality. Yet we now believe the hierarchy will bend in on itself and emerge above us from the filtered information we’ve fed it. It's possible, but that goes back to my point on the wormhole. What I suspect is more likely is we'll get to the end of this cycle and realize it was the same as the genome; it works up to a point. At least the genome gave us a mechanism to work and build with, though. What on earth do these uninterpretable models give us?[1]

What I’m really longing for are laws of nature. I want rules that help us solve mysteries and allow us to do things we've never done before. Not just do things we already could faster. Those are tools — not solutions in themselves.

I think there are some valuable people in this space. I think applying the tech to scientific problems will help us scale ourselves. I even agree that it’s valid to be concerned about how these technologies could evolve to harm us. At the same time, I would like us to remember that we humans are so prone to this imitative mania. We’re never good at predicting the future. Silicon Valley always sells you a story first.

If you find yourself doubting something, it is ok to ask questions. It is ok to be wrong! We needn’t sacrifice every individual who dared to say that maybe this machine intelligence is indeed bounded by our own, merely a model of everything we’ve created, and nothing more. No one is effectively denying this point in any rigorous way.

The problem with the comeback of ‘it will self-improve’ is that it’s unfalsifiable today but intuitively feels gappy and wrong. I can’t say definitively that you’re wrong. You can’t say when you’ll be right, nor where such a belief even gets us in the interim. So do we need new language to get us out of this fantasy? Are the sci-fi connotations of ‘AGI’ unmooring us? This topic has been spiraling in my mind today. In our intensifying modern void of meaning, a machine God just isn’t doing it for me (yet).

[1] A note that yes, I am aware of interpretability research and very much for it. In order to use insights from models to make interventions, we need to break down cause and effect. Correlations are cool, but they don’t give you mechanisms.

It's the new "NYC is going to be underwater in 10 years"

So far as I can tell, being an AI expert requires a priori faith in raising up the AI god. If you have an opinion on AI that isn't, "we will make it god", you can't be an AI expert.

Couple that with the fact that articles about singularity and the end of human intuition get more engagement on blogs, and you have a field immune to skepticism.

One hard limit of the current paradigm of LLMs I always come back to is that telos is always a human input. An AI cannot have a purpose or goal without human input (in the prompt or the training data). It turns out that, on a fundamental level, a goal is necessary to achieve basic perception (in real situations).

Take, for example, an article I recently read about how mathematicians will be replaced with AI in 5 years. Math is 100% translatable to computers, so there's no holdup there. But being a mathematician involves being presented with the infinite array of possible directions one might take math, and pushing it intuitively towards one of the directions in the limited set of useful directions. This is an impossible problem for LLMs. As such, LLMs will be (and probably already are) an excellent tool that mathematicians leverage, but they will not replace "mathematician" as a human endeavor.

The idea that we can raise up circuits ontologically (despite being kind of ridiculous; by what mechanism? Perhaps with some horror of cybernetics) also runs up against the same problem: even if we are able to create the machine god, he will not be much of a god, because all of his proclamations will run up against the same constraint. The machine god's power will always be wielded by some human behind the curtain, directing inputs, Wizard of Oz style.

I came to similar conclusions in the article I wrote, linked below, but yes, there are definitely dogmatic undertones shaping the narrative of the LLM revolution.

https://generativeforms.substack.com/p/pascals-wager-reloaded-and-the-limitation