I Built a Multi-Agent AI to Decide Whether to Open-Source Our Core Tech. It Said Yes, by a 10.7x Margin.

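The author used a multi-agent reinforcement learning system with four stakeholder agents to analyze the strategic decision of open-sourcing Papr's predictive memory layer. The AI simulation overwhelmingly favored open-sourcing, projecting a net present value 10.7 times higher than keeping it proprietary.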


The story of how we built a multi-agent reinforcement learning system to answer our most critical strategic question: should we open-source our predictive memory layer?

TL;DR

The question: Should we open-source Papr’s predictive memory layer (92% on Stanford’s STARK benchmark)?

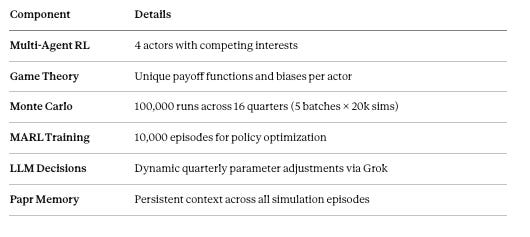

The method: Built a multi-agent RL system with 4 stakeholder agents, ran 100k Monte Carlo simulations + 10k MARL training episodes

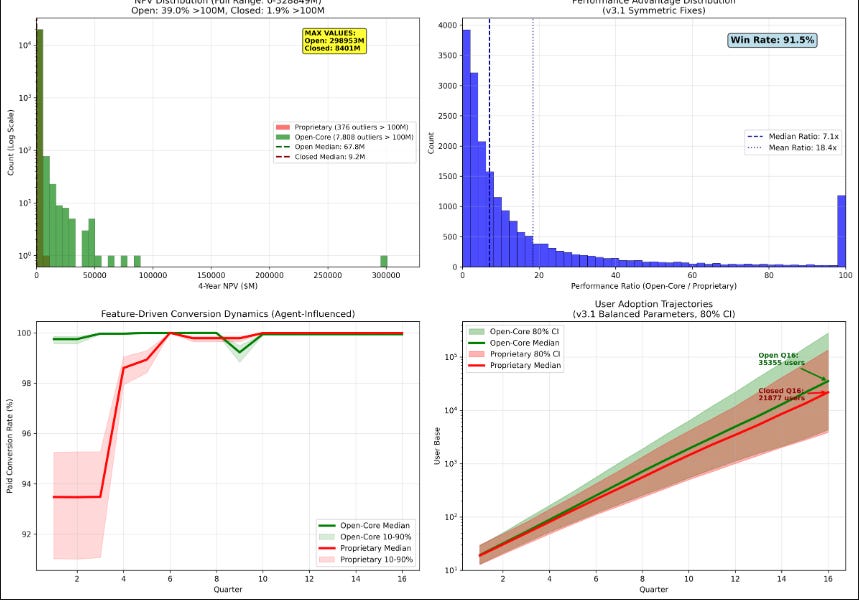

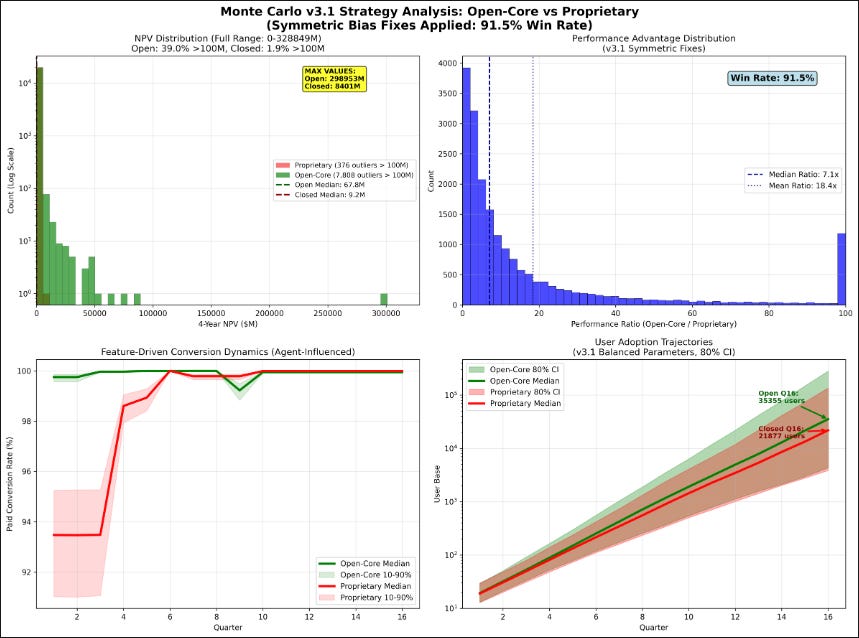

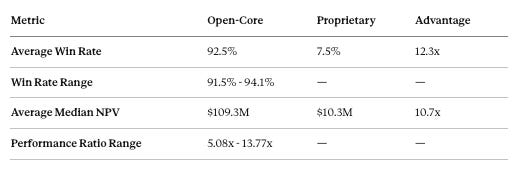

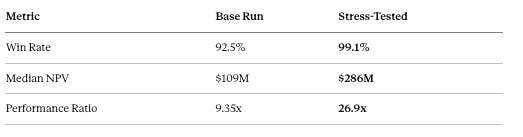

The result: 91.5% of simulations favored open-core. Average NPV: $109M vs ~$10M (10.7x advantage)

The insight: Agents with deeper memory favored open-core; agents with shallower memory favored proprietary

The action: We’re open-sourcing our core memory layer (GitHub repo linked below).

It’s Friday night, the end of a long week, and I’ve been staring at a decision that would define Papr’s future: Should we open source our core predictive memory layer — the same tech that just hit 92% on Stanford’s STARK benchmark — or keep it proprietary?

Thanks for reading Papr! Subscribe for free to receive new posts and support my work.

The universe has a way of nudging you towards answers. On Reddit, open source is becoming table stakes in the RAG and AI context/memory space. But what really struck me were the conversations with our customers. Every time I discussed Papr, the first question was always the same: “Is it open source?” Yet despite seeing the impact open source could have on the world, our conviction hadn’t tipped in that direction.

This wasn’t just another product decision. This was a fork in the road — an existential crossroads. Open source could accelerate our adoption but potentially erode our competitive moat. Staying proprietary might protect our IP but would inevitably limit our growth velocity. The complexity of this decision defied traditional frameworks. My heart was racing with an intuition, a rhythm that seemed to know the answer, but I needed more than just a melody. I needed a framework that would speak to my mind as powerfully as it resonated with my heart.

So I did what any engineer would do on a Friday night: I built an intelligent system to make the decision for me — the Papr Decision Agent.

The result? 91.5% of 100,000 Monte Carlo simulations favored open-core. The average NPV gap was staggering: $109M vs. roughly $10M, a 10.7x advantage.

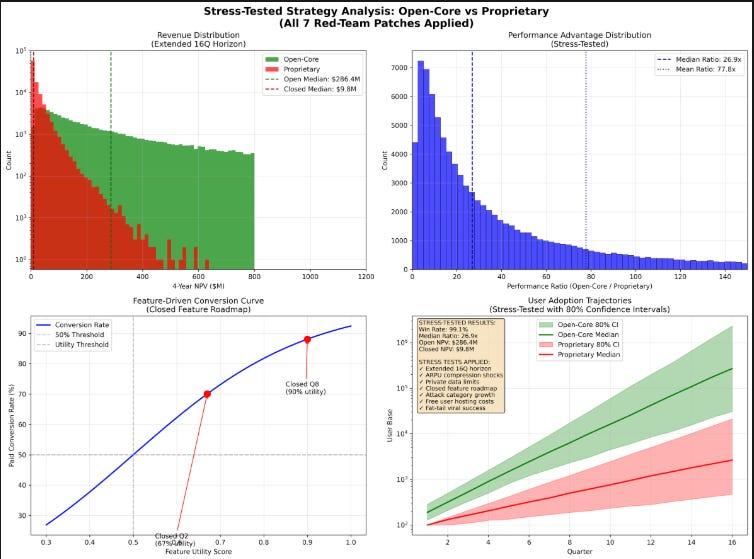

Dashboard showing NPV distribution, performance ratio, conversion dynamics, and user adoption trajectories

Share this article if this sounds crazy (or genius) 👇


Beyond memory: Introducing context intelligence

When most people hear “AI memory,” they think of a simple chat log — a linear transcript of conversations past. But that’s not memory. That’s just a chat record.

True memory is living, predictive, adaptive. It’s not about storing what happened; it’s about making it meaningful and understanding what will happen. At Papr, we’ve been building something fundamentally different: a context intelligence layer for agents that transforms structured or unstructured data into predictive, actionable understanding so agents can make optimal decisions.

Imagine an AI agent that doesn’t just retrieve information, but predicts the context you’ll need before you even ask for it. An agent that understands the intricate web of connections between a line of code, its documentation, the architectural diagram, and the team’s previous design discussions.

An agent that can see around corners—but more than that, one that learns from every decision you and your team make, builds a decision context graph of your reasoning and exceptions, and becomes an intimate collaborator that understands your nuances well enough to vouch for you.

We’re open-sourcing the core of this system — not our fastest, on-device predictive engine (that’s still our secret sauce), but the foundational technologies that will revolutionize how developers build intelligent systems:

What We’re Open Sourcing: Context Intelligence Components

Intelligent Document Ingestion Pipeline

Semantic parsing that goes beyond keyword matching

Extracts nuanced relationships between document sections

Creates dynamic knowledge graphs from unstructured data

Supports multiple formats: PDFs, code repositories, meeting transcripts, chat logs

Contextual Relationship Mapping

Traces connections across:

Customer meetings

Internal documentation

Code repositories

AI agent conversations

Maintains access control (ACLs) across different data sources

Predicts contextual relevance with machine learning

Predictive Context Generation

Anticipates information needs before they arise

Learns from actual usage patterns

Reduces retrieval complexity from O(n) to near-constant time
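To make the relationship-mapping idea concrete, here is a minimal sketch of an ACL-aware context graph: items from different sources (a design doc, a code file, a customer call, an agent thread) are linked, and a breadth-first traversal returns only the related items a given user is allowed to read. The `Node` and `ContextGraph` classes, IDs, and group names are hypothetical illustrations, not the API of Papr's repository, and nothing here is predictive; it only shows ACL-respecting traversal.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    node_id: str
    source: str                # e.g. "meeting", "doc", "code", "agent_chat"
    allowed_groups: frozenset  # ACL: groups permitted to read this node


@dataclass
class ContextGraph:
    nodes: dict = field(default_factory=dict)  # node_id -> Node
    edges: dict = field(default_factory=dict)  # node_id -> set of neighbor ids

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node
        self.edges.setdefault(node.node_id, set())

    def link(self, a: str, b: str) -> None:
        # Relationships are undirected in this sketch.
        self.edges[a].add(b)
        self.edges[b].add(a)

    def related(self, start: str, user_groups: set, max_hops: int = 2) -> list:
        """Breadth-first search from `start`, returning only nodes the user may read."""
        if not self.nodes[start].allowed_groups & user_groups:
            return []
        seen, result = {start}, []
        queue = deque([(start, 0)])
        while queue:
            current, depth = queue.popleft()
            if depth == max_hops:
                continue
            for neighbor in sorted(self.edges[current]):
                if neighbor in seen:
                    continue
                seen.add(neighbor)
                # Unreadable nodes are neither returned nor traversed through.
                if self.nodes[neighbor].allowed_groups & user_groups:
                    result.append(neighbor)
                    queue.append((neighbor, depth + 1))
        return result


graph = ContextGraph()
graph.add_node(Node("design_doc", "doc", frozenset({"eng"})))
graph.add_node(Node("auth.py", "code", frozenset({"eng"})))
graph.add_node(Node("acme_call", "meeting", frozenset({"sales"})))
graph.add_node(Node("agent_thread", "agent_chat", frozenset({"eng", "sales"})))
graph.link("auth.py", "design_doc")
graph.link("design_doc", "acme_call")
graph.link("auth.py", "agent_thread")
print(graph.related("auth.py", {"eng"}))  # ['agent_thread', 'design_doc']
```

Skipping unreadable nodes during traversal (rather than filtering at the end) also keeps them from acting as bridges to content reachable only through them; whether that is the right policy depends on how permissions are meant to compose.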

Why This Matters for Developers

Current RAG and context management systems have a fundamental flaw: they degrade as information scales. More data means slower, less relevant retrievals. We’ve inverted that paradigm.

Our approach doesn’t just store memories; it understands them. By predicting grouped contexts, optimal graph paths, and anticipated needs, we’re solving the core challenge of AI agent development: maintaining high-quality, relevant context at scale.

This isn’t just an incremental improvement. It’s a fundamental reimagining of how AI systems understand and utilize context.

What Context Intelligence Makes Possible

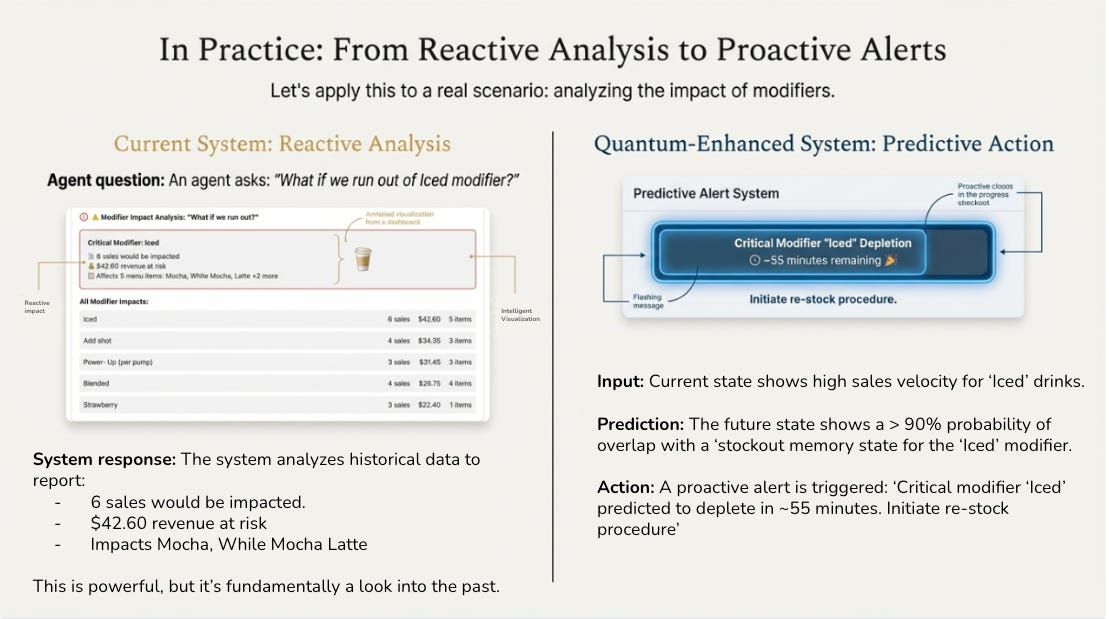

To see the difference context intelligence makes, consider this real-world example:

On the left, a traditional system answers the question “What if we run out of Iced modifier?” by analyzing historical data—6 sales impacted, $42.60 at risk. Useful, but fundamentally backward-looking. You had to know to ask.

On the right, context intelligence flips the paradigm. The system predicts the stockout 55 minutes before it happens and proactively triggers a re-stock procedure. No one had to ask. The agent understood the pattern, anticipated the need, and acted.

Here’s what’s remarkable: building predictive experiences like this used to require a dedicated team of AI engineers—the kind of talent only Amazon or Google could assemble. Today, with Papr’s context intelligence layer, anyone who understands their customers and business can build this. It’s as simple as connecting your data sources and asking your agent a question.

This is what we mean by intelligent experiences beyond chat. Not just answering questions, but anticipating needs. Not just retrieving information, but understanding when that information becomes critical. That’s the power of predictive memory.
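Mechanically, the proactive half of the stockout example is a forecast plus a trigger. A minimal sketch, assuming a simple linear forecast from the last hour's consumption (Papr's layer presumably uses richer signals) and a hypothetical 60-minute alert window; the readings are invented so that the forecast lands on the example's 55-minute lead time:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InventoryReading:
    item: str
    units_on_hand: float
    units_used_last_hour: float  # recent consumption rate, e.g. from POS modifier sales


def minutes_until_stockout(reading: InventoryReading) -> Optional[float]:
    """Linear forecast: minutes until stock hits zero at the recent consumption rate."""
    if reading.units_used_last_hour <= 0:
        return None  # nothing is being consumed, so no stockout is forecast
    return reading.units_on_hand * 60 / reading.units_used_last_hour


def check_inventory(reading: InventoryReading, alert_window_min: float = 60) -> str:
    eta = minutes_until_stockout(reading)
    if eta is not None and eta <= alert_window_min:
        # A real agent would trigger a restock workflow here instead of returning text.
        return f"restock {reading.item}: stockout in ~{eta:.0f} min"
    return f"{reading.item}: ok"


print(check_inventory(InventoryReading("iced modifier", units_on_hand=11, units_used_last_hour=12)))
# restock iced modifier: stockout in ~55 min
```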

So we’re open-sourcing our predictive memory layer (#1 on Stanford STaRK). If this resonates, share it and ⭐ the repo: https://github.com/Papr-ai/memory-opensource


The Architecture of our Decision Agent: MARL Meets Memory

Here’s what I built over a caffeine-fueled weekend using Cursor and Papr’s memory layer.

Every decision, every simulation result, every insight was stored in Papr’s memory graph. The system could learn not just from its current run, but from accumulated wisdom across all previous simulations.

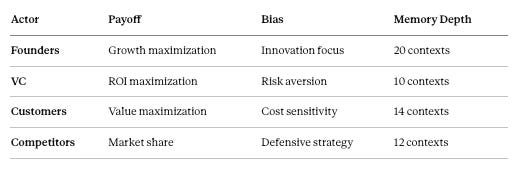

The Actors

Each actor pulled from their memory contexts to inform decisions, creating a multi-perspective simulation environment.

The Results: 91.5% Win Rate

After 100,000 simulations and 10,000 MARL training episodes:

Statistical Significance: p < 0.001 for open-core superiority.
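For intuition on that p-value: if each of the 100,000 runs is treated as an independent win/loss trial, 91,500 open-core wins sit hundreds of standard deviations above the 50,000 expected under a coin-flip null. The post doesn't say which test was used; this sketch assumes the normal approximation to a one-sided binomial test. Note what it measures: with this many runs, the p-value reflects simulation noise, not whether the model's assumptions are right.

```python
import math


def one_sided_binomial_z(wins: int, trials: int, p_null: float = 0.5) -> tuple:
    """Normal approximation to a one-sided binomial test of H0: win rate == p_null."""
    expected = trials * p_null
    std = math.sqrt(trials * p_null * (1 - p_null))
    z = (wins - expected) / std
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail probability P(Z >= z)
    return z, p_value


z, p = one_sided_binomial_z(wins=91_500, trials=100_000)
print(f"z = {z:.1f}, p < 0.001: {p < 0.001}")
```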

Here’s where it gets interesting: The MARL agents initially converged on a proprietary strategy due to defensive biases, but after incorporating Monte Carlo feedback and iterative learning, the system recommended open-core with specific risk mitigations.

Should You Believe These Numbers?

Let’s be honest about what this simulation can and can’t tell you.

Why the 91.5% Is Credible

Bias Correction Built-In: Symmetric simulations—same costs, regulatory pressures, and competition intensity for both strategies. The delta comes from growth dynamics, not rigged assumptions.

Adversarial Agents: Competitors actively attack open-source momentum (1.8-1.9x competitive pressure in later quarters). Despite this, open-core still wins.

Realistic Enterprise Priors: $15,000 ARPU (±$3k standard deviation, benchmarked against Replit, MongoDB, and Pinecone), a 20% discount rate, and viral multipliers capped at 1.5x. That cap is conservative: real-world open-source projects often see 3-5x organic amplification.

LLM-Debiased Decisions: Each quarter, Grok adjusted parameters based on market conditions, reducing human bias.
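The "symmetric simulations" point can be made concrete with common random numbers: each Monte Carlo run draws one market (ARPU, growth, viral multiplier) and evaluates both strategies against it, so any difference comes from the strategy parameters alone. A minimal sketch: the 16-quarter horizon, $15k ± $3k ARPU, 20% discount rate, and 1.5x viral cap come from the post; treating ARPU and the discount rate as annual (discount compounded quarterly) is an assumption; starting users, conversion rates, and growth ranges are hypothetical placeholders, so the win rate it prints says nothing about Papr's actual model.

```python
import random
from statistics import mean

QUARTERS = 16           # from the post
ANNUAL_DISCOUNT = 0.20  # from the post; assumed annual, compounded quarterly
VIRAL_CAP = 1.5         # from the post

# Hypothetical strategy parameters (NOT from the post).
STRATEGIES = {
    "open_core": {"start_users": 1_000, "conversion": 0.08, "uses_viral": True},
    "proprietary": {"start_users": 300, "conversion": 0.40, "uses_viral": False},
}


def draw_market(rng: random.Random) -> dict:
    """One shared market draw, so both strategies face identical conditions."""
    return {
        "arpu": max(0.0, rng.gauss(15_000, 3_000)),      # annual ARPU: $15k, sd $3k (post)
        "growth": rng.uniform(0.03, 0.12),               # hypothetical quarterly growth
        "viral": min(VIRAL_CAP, rng.uniform(1.0, 2.0)),  # viral multiplier, capped at 1.5x
    }


def npv(strategy: str, market: dict) -> float:
    """Discounted quarterly revenue over the simulation horizon."""
    params = STRATEGIES[strategy]
    q_rate = (1 + ANNUAL_DISCOUNT) ** 0.25 - 1
    boost = market["viral"] if params["uses_viral"] else 1.0
    users, total = float(params["start_users"]), 0.0
    for q in range(1, QUARTERS + 1):
        users *= 1 + market["growth"] * boost
        revenue = users * params["conversion"] * market["arpu"] / 4
        total += revenue / (1 + q_rate) ** q
    return total


def run(n_sims: int = 10_000, seed: int = 0) -> dict:
    rng = random.Random(seed)
    wins, open_npvs, prop_npvs = 0, [], []
    for _ in range(n_sims):
        market = draw_market(rng)  # common random numbers for both strategies
        open_npv, prop_npv = npv("open_core", market), npv("proprietary", market)
        open_npvs.append(open_npv)
        prop_npvs.append(prop_npv)
        wins += open_npv > prop_npv
    return {
        "open_core_win_rate": wins / n_sims,
        "mean_npv_open_core": mean(open_npvs),
        "mean_npv_proprietary": mean(prop_npvs),
    }


print(run())
```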

What Could Be Wrong

Model Risk: User growth follows exponential dynamics with caps. Real markets have discontinuities we can’t model.

Actor Simplification: Four stakeholders can’t capture full ecosystem complexity (regulators, media, developer communities).

Time Horizon: 16 quarters may be too short for some infrastructure plays, too long for fast-moving AI markets.

NPV ≠ Valuation: Our $109M median is DCF-based revenue, not startup valuations (which often apply 10-50x revenue multiples).

Benchmark Context: Our 92% STARK score is real (see evaluation details), but benchmarks don’t always translate to production performance.

Bottom line: Use this as directional guidance, not gospel. The 10.7x NPV gap is robust to most parameter variations, but your mileage may vary.

The Top Outlier Levers

The simulation identified which strategic actions most dramatically shift outcomes:

1. Community/Viral Motion (1.68x multiplier, 24.5% tail uplift)

The compounding effect of viral adoption in early quarters is the single strongest predictor of outlier outcomes.

Action: Community building with +21% features, +28% viral boost. Est. cost: $626K.

2. Feature Velocity (1.61x multiplier, 14.6% tail uplift)

Rapid iteration creates a flywheel: more features → more adoption → more contributions → more features.

Action: Aggressive open development cadence. Est. cost: $1.1M for 5-13 FTE.

3. Growth Acceleration (1.54x multiplier, 22.7% tail uplift)

From Q5 onwards, ecosystem expansion is where open-core’s network effects compound most aggressively.

Action: Ecosystem partnerships and developer relations. Est. cost: $792K for 3-8 FTE.
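The post reports each lever as a multiplier and a tail uplift without defining either. Assuming multiplier means the ratio of mean NPVs with and without the lever, and tail uplift means the extra share of runs landing above the baseline's 90th-percentile NPV, both can be computed from paired Monte Carlo samples. A sketch under those assumed definitions, exercised on toy data:

```python
from statistics import mean, quantiles


def lever_effect(baseline: list, with_lever: list) -> tuple:
    """Return (mean multiplier, tail uplift) for one strategic lever.

    Assumed definitions (not stated in the post):
      multiplier  = mean NPV with the lever / mean NPV without it
      tail uplift = extra share of runs above the baseline's 90th-percentile NPV
    """
    multiplier = mean(with_lever) / mean(baseline)
    p90 = quantiles(baseline, n=10)[-1]  # 90th-percentile cut point of the baseline
    baseline_tail = sum(x > p90 for x in baseline) / len(baseline)
    lever_tail = sum(x > p90 for x in with_lever) / len(with_lever)
    return multiplier, lever_tail - baseline_tail


# Toy data only: a lever that doubles every outcome.
base = [float(x) for x in range(1, 101)]
lever = [2 * x for x in base]
print(lever_effect(base, lever))
```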

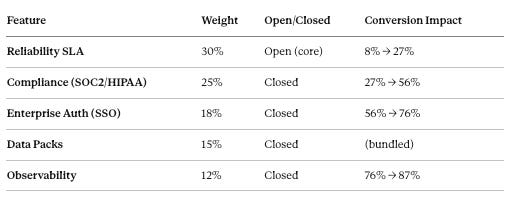

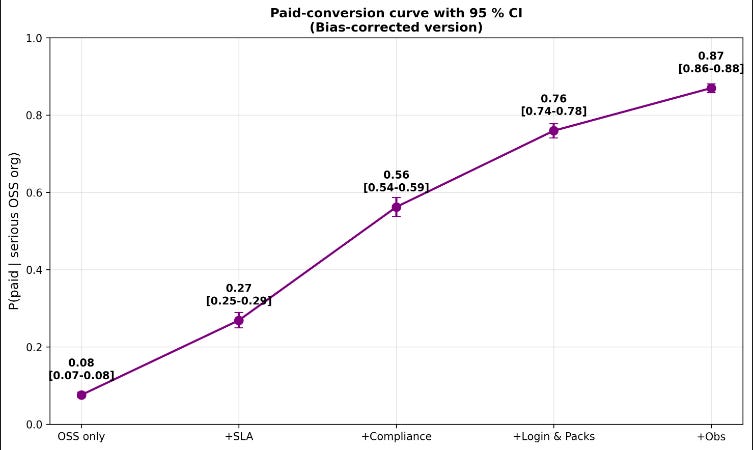

The Monetization Path: 8% → 87% Conversion

Key insight: Open the core for adoption, keep compliance and observability closed for monetization. Compliance alone adds 29 percentage points—the single highest-impact feature for revenue.

Open-core catches up on all features by Q4 through community contributions; proprietary takes until Q6. That two-quarter head start, combined with a 1.2x viral boost, explains the NPV gap.

How premium features progressively drive conversion
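The post gives the endpoints (8% conversion with no premium features, 87% with all of them) and one feature's contribution (compliance, +29 percentage points). A minimal sketch assuming the uplifts simply add in percentage points; because the per-feature split of the remaining 50 points (87 - 8 - 29) isn't shown, it is lumped into one hypothetical `other_premium_features` entry:

```python
BASE_CONVERSION_PP = 8.0  # free-to-paid conversion with no premium features (from the post)

FEATURE_UPLIFT_PP = {
    "compliance": 29.0,              # from the post: the highest-impact single feature
    "other_premium_features": 50.0,  # remainder lumped together: 87 - 8 - 29 (split not given)
}


def conversion_rate(enabled_features: set) -> float:
    """Additive percentage-point model, capped at 100%; returns a fraction."""
    pp = BASE_CONVERSION_PP + sum(FEATURE_UPLIFT_PP[f] for f in enabled_features)
    return min(pp, 100.0) / 100


print(conversion_rate(set()))                   # 0.08
print(conversion_rate({"compliance"}))          # 0.37
print(conversion_rate(set(FEATURE_UPLIFT_PP)))  # 0.87
```

An additive model is the simplest reading; if features interact (for example, compliance mattering only once observability exists), a per-combination table would be needed instead.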

Stress Test: What Happens When Everything Goes Wrong?

We ran 7 adversarial patches:

Extended 16Q horizon

ARPU compression from competition

Private data regulatory limits

Faster closed feature roadmap

Aggressive competitor FUD attacks

Free user hosting cost bleed

Fat-tail viral events (rare but extreme)

Result: Under adversarial conditions, open-core doesn’t just survive—it widens the gap:

Why does stress help? Open-core has multiple recovery mechanisms: community data offsets regulation, volume offsets price pressure, 40% of attacks backfire as free PR. Proprietary has single points of failure.

Open-core is antifragile.
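Each adversarial patch can be modeled as a function that takes the simulation parameters and returns a stressed copy, so patches compose and the baseline stays untouched. A hypothetical sketch covering three of the seven patches; only the 40% backfire probability comes from the post, and every effect size is an invented placeholder:

```python
import random
from typing import Callable, Dict, List

Params = Dict[str, float]
Patch = Callable[[Params, random.Random], Params]


def arpu_compression(params: Params, rng: random.Random) -> Params:
    # Hypothetical: competition pushes prices down 10-30%.
    return {**params, "arpu": params["arpu"] * rng.uniform(0.7, 0.9)}


def regulatory_limits(params: Params, rng: random.Random) -> Params:
    # Hypothetical: private-data rules shrink the addressable market by 30%.
    return {**params, "addressable_share": params["addressable_share"] * 0.7}


def fud_attack(params: Params, rng: random.Random, backfire_prob: float = 0.4) -> Params:
    # From the post: ~40% of attacks backfire as free PR. Effect sizes are hypothetical.
    factor = 1.1 if rng.random() < backfire_prob else 0.9
    return {**params, "viral": params["viral"] * factor}


def stress(params: Params, patches: List[Patch], rng: random.Random) -> Params:
    """Apply adversarial patches in order; each returns a new dict, leaving inputs untouched."""
    for patch in patches:
        params = patch(params, rng)
    return params


baseline = {"arpu": 15_000.0, "addressable_share": 1.0, "viral": 1.2}
rng = random.Random(42)
print(stress(baseline, [arpu_compression, regulatory_limits, fud_attack], rng))
```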

The Code: Build Your Own Decision Agent

Here’s a more complete implementation example:
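One way such a decision agent could be structured, sketched with heavy simplifications and hypothetical names (not Papr's code): each stakeholder is an independent epsilon-greedy bandit learner that repeatedly tries a strategy, scores the simulated outcome with its own reward weights, and updates its value estimates; the aligned stakeholders then vote. This is a much simpler stand-in for MARL (the agents don't observe or respond to each other), and the outcome distributions are invented.

```python
import random
from dataclasses import dataclass, field
from typing import Dict

STRATEGIES = ("open_core", "proprietary")


def simulate(strategy: str, rng: random.Random) -> Dict[str, float]:
    """Hypothetical stand-in for the Monte Carlo model: noisy outcome metrics."""
    if strategy == "open_core":
        return {"long_term": rng.gauss(1.0, 0.3), "short_term": rng.gauss(0.3, 0.2), "lock_in": 0.2}
    return {"long_term": rng.gauss(0.4, 0.2), "short_term": rng.gauss(0.6, 0.2), "lock_in": 0.8}


@dataclass
class StakeholderAgent:
    name: str
    weights: Dict[str, float]  # how much this stakeholder values each outcome metric
    aligned: bool = True       # False for adversaries such as competitors
    epsilon: float = 0.1
    values: Dict[str, float] = field(default_factory=lambda: {s: 0.0 for s in STRATEGIES})
    counts: Dict[str, int] = field(default_factory=lambda: {s: 0 for s in STRATEGIES})

    def act(self, rng: random.Random) -> str:
        untried = [s for s in STRATEGIES if self.counts[s] == 0]
        if untried:
            return untried[0]                        # try every strategy once first
        if rng.random() < self.epsilon:
            return rng.choice(STRATEGIES)            # explore
        return max(STRATEGIES, key=self.values.get)  # exploit

    def learn(self, strategy: str, outcome: Dict[str, float]) -> None:
        reward = sum(w * outcome[k] for k, w in self.weights.items())
        self.counts[strategy] += 1
        # Sample-average update of this agent's value estimate for the strategy.
        self.values[strategy] += (reward - self.values[strategy]) / self.counts[strategy]


def train(agents, episodes: int = 2_000, seed: int = 0) -> str:
    rng = random.Random(seed)
    for _ in range(episodes):
        for agent in agents:
            choice = agent.act(rng)
            agent.learn(choice, simulate(choice, rng))
    votes = [max(STRATEGIES, key=a.values.get) for a in agents if a.aligned]
    return max(STRATEGIES, key=votes.count)  # majority vote of aligned agents


agents = [
    StakeholderAgent("founder", {"long_term": 1.0}),
    StakeholderAgent("vc", {"short_term": 1.0}),
    StakeholderAgent("customer", {"lock_in": -1.0}),
    StakeholderAgent("competitor", {"long_term": -1.0}, aligned=False),
]
print(train(agents))
```

With these invented numbers the founder and customer learn to prefer open-core and the VC learns to prefer proprietary, so the vote goes to open-core; different weights or outcome distributions can flip it.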


The Memory Insight

The key breakthrough came when I analyzed how each agent used their memory:

Founder agent (20 contexts) could see long-term patterns—how open-source compounds growth

VC agent (10 contexts) focused on short-term revenue predictability

Customer agents remembered vendor lock-in pain

Competitor agents stored market disruption patterns

Memory depth directly correlated with strategic horizon. Agents with deeper memory favored open-core; agents with shallower memory preferred proprietary.
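A toy illustration of why memory depth can flip the decision: if memory depth is read as the number of quarters of outcomes an agent can draw on (an assumption), and open-core's payoff starts lower but compounds, then the two strategies' cumulative payoffs cross. The payoff curves below are invented; the depths of 20 and 10 mirror the founder and VC agents. This shows the mechanism, not evidence for the finding.

```python
def payoff_curve(strategy: str, quarters: int) -> list:
    """Invented quarterly payoffs: open-core starts lower but compounds at 15%/quarter."""
    if strategy == "open_core":
        return [1.15 ** q for q in range(quarters)]
    return [3.0] * quarters  # proprietary: higher early, flat


def preferred_strategy(memory_depth: int) -> str:
    """An agent whose memory spans `memory_depth` quarters compares total payoff over that span."""
    totals = {s: sum(payoff_curve(s, memory_depth)) for s in ("open_core", "proprietary")}
    return max(totals, key=totals.get)


print(preferred_strategy(20))  # founder-like depth: open_core
print(preferred_strategy(10))  # VC-like depth: proprietary
```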

This finding echoes Wang et al. (2023), where deeper memory led to 28% better long-term value predictions.

This is why we’re open-sourcing Papr’s memory layer. Memory infrastructure is too important to be proprietary—like Linux for operating systems or PostgreSQL for databases.

The Decision: Open-Core with Strategic Safeguards

Phase 1 (Q1-Q4): Open-source core for maximum adoption velocity. Focus on community and feature velocity.

Phase 2 (Q5-Q8): Launch premium enterprise features. Shift to growth acceleration.

Phase 3 (Q9+): Ecosystem monetization through marketplace and integrations.
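If the phased plan feeds back into a simulator (for example, to switch which lever is active each quarter), it can be encoded as data. A hypothetical encoding; the tuple layout and focus labels are illustrative:

```python
from typing import Optional, Tuple

# (first quarter, last quarter or None for open-ended, phase name, focus areas)
PHASES: Tuple[Tuple[int, Optional[int], str, Tuple[str, ...]], ...] = (
    (1, 4, "Open-source core", ("community", "feature_velocity")),
    (5, 8, "Premium enterprise features", ("growth_acceleration",)),
    (9, None, "Ecosystem monetization", ("marketplace", "integrations")),
)


def phase_for_quarter(quarter: int) -> str:
    """Look up which phase of the plan a given quarter (1-based) falls in."""
    for start, end, name, _focus in PHASES:
        if quarter >= start and (end is None or quarter <= end):
            return name
    raise ValueError(f"quarter must be >= 1, got {quarter}")


print([phase_for_quarter(q) for q in (1, 5, 9)])
```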

This reconciles the agents’ concerns (the VC wants monetization; competitors will attack) while capturing the upside (the 10.7x NPV advantage of the open strategy).

Discussion Questions

I’d genuinely love to hear pushback on this:

Has anyone built similar multi-agent decision systems? What worked/didn’t?

Where do you think this model breaks down? I’ve listed my concerns, but I’m probably missing blind spots.

Open-core skeptics: What failure modes am I underweighting?

Memory depth hypothesis: Does this match your intuition about strategic decision-making?

Resources

Open-Sourced Memory Layer: github.com/papr-ai/papr-memory-open

Shawkat Kabbara is a co-founder of Papr, building a predictive memory layer for AI agents. Previously, at Apple, he built the App Intent SDK, the AI action layer for iOS, macOS, and visionOS.

References

Davis, J. P., et al. (2022). Simulation in Strategic Management Research. Management Science.

Zhang, K., et al. (2023). Multi-Agent Reinforcement Learning: From Game Theory to Real-World Applications. Artificial Intelligence.

Li, Y., et al. (2024). Biased MARL for Robust Strategic Decision-Making. NeurIPS.

Wang, J., et al. (2023). Memory-Augmented Reinforcement Learning for Efficient Exploration. ICML.
