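This article explores the impact of AI, particularly language models such as GPT 5.2 Pro, on solving complex open math problems. It asks whether AI has truly discovered new mathematical truths or has merely become better at citing existing knowledge.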

The Illusion of Discovery: AI-Generated Proofs of 'Open' Math Problems

This article evaluates the practical impact of AI on open math problems and outlines the current community consensus on its technical strengths and limitations.

Do you use AI as a source for:

Generating novel scientific ideas for open research problems?

Reviewing scientific drafts?

Literature review?

Then this article is for you.

A year ago, the idea of an LLM executing complex multi-step proofs was considered a distant goal. Today, LLMs can function as sophisticated research assistants. But as AI begins 'solving' open math problems like those posed by Paul Erdős, we have to ask: is it discovering new truths, or just reading the map better than we do?

The Breakthrough

Ever since GPT 5.2 Pro was released last month, AI-generated proofs of open math problems have been taking off. According to an active contributor who volunteers to verify AI-written proofs of Erdős problems, GPT 5.2 Pro is currently the strongest model mathematically and can actually outperform “independent” checks by Gemini/Claude. Recently, a post on X went viral in which the author declared that they had solved a second Erdős problem (#281) using only GPT 5.2 Pro.

The Reality Check

So, what was the aftermath? In less than 24 hours, a prior proof was located in the literature, and it turned out that GPT had only discovered a new proof of a non-open problem. Is that impressive? Absolutely. In fact, it inspired me to find and submit a solution for an open Erdős problem. Does that mean AGI is already knocking on our doors? Before we answer that question, let’s dive into the wiki page on AI contributions to Erdős problems.

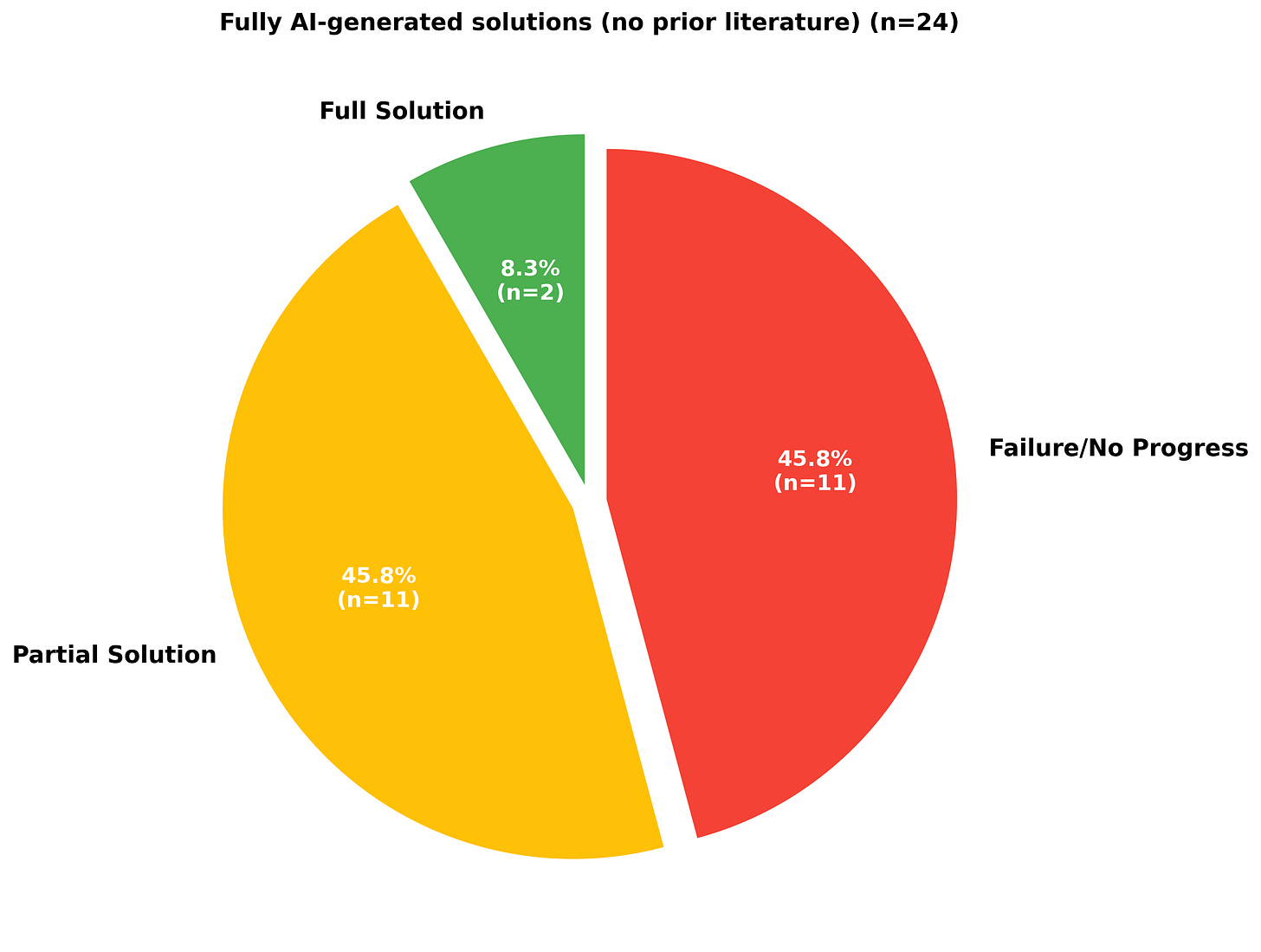

As Figure 1 shows, only 2 problems have actually been solved completely by AI with no prior literature turning up in review, and 1 of those solutions was achieved by applying an existing theorem.

When trying to assess the success rate of these tools, reporting bias should be kept in mind. The true share of the ‘Failure/No Progress’ category is likely much higher than Figure 1 suggests, because failures to solve such problems are underreported. Quoting from the wiki page: “We have found empirically that problems that could be solved fully by AI without the benefit of prior literature or human interaction tend to be simpler and have shorter proofs than problems that required a combination of AI, human input, and past literature to solve.”
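To make the reporting-bias point concrete, here is a minimal sketch of the arithmetic. The counts are made up purely for illustration and are not taken from the wiki; the function name `failure_share` is likewise hypothetical. The sketch assumes every success gets reported while only some failures do.

```python
from fractions import Fraction


def failure_share(successes: int, failures: int) -> Fraction:
    """Return the fraction of attempts that are failures."""
    return Fraction(failures, successes + failures)


# Hypothetical counts, NOT real data from the wiki:
# 10 successful attempts, 90 failed attempts in total,
# but only 9 of those failures are ever written up.
SUCCESSES = 10
FAILURES_TOTAL = 90
FAILURES_REPORTED = 9

true_share = failure_share(SUCCESSES, FAILURES_TOTAL)
observed_share = failure_share(SUCCESSES, FAILURES_REPORTED)

print(f"true failure share:     {float(true_share):.0%}")
print(f"observed failure share: {float(observed_share):.0%}")
```

With these illustrative numbers, a chart built only from reported attempts would show failures as a minority of cases, even though they make up the large majority of actual attempts.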

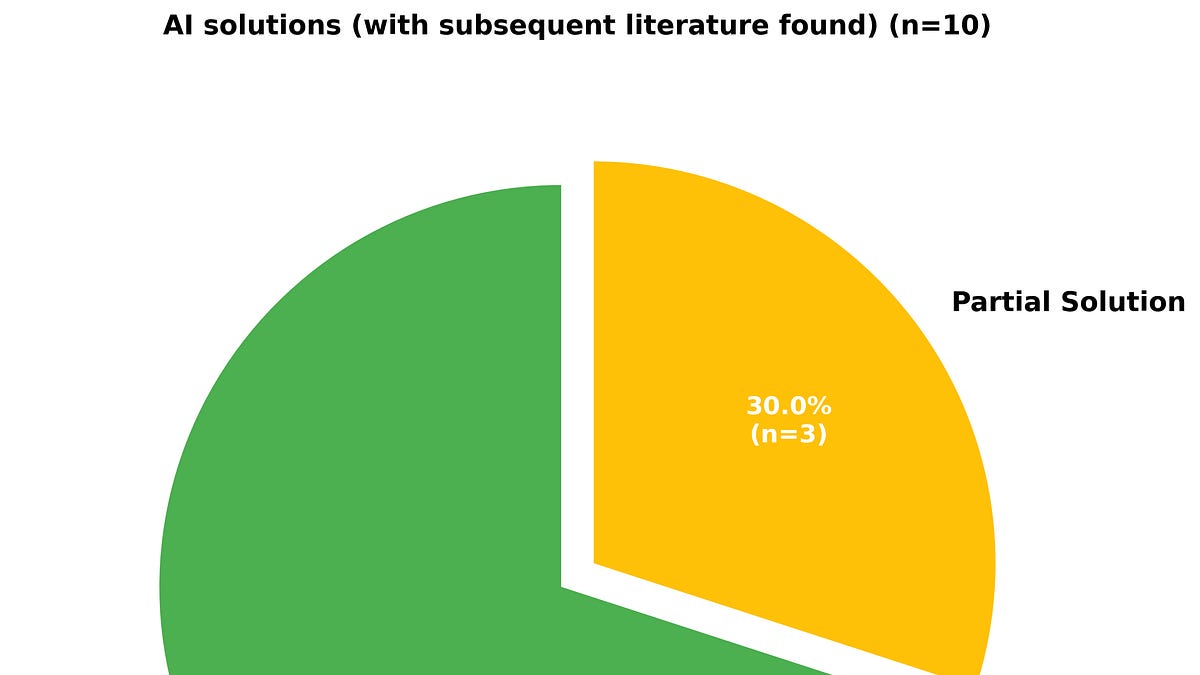

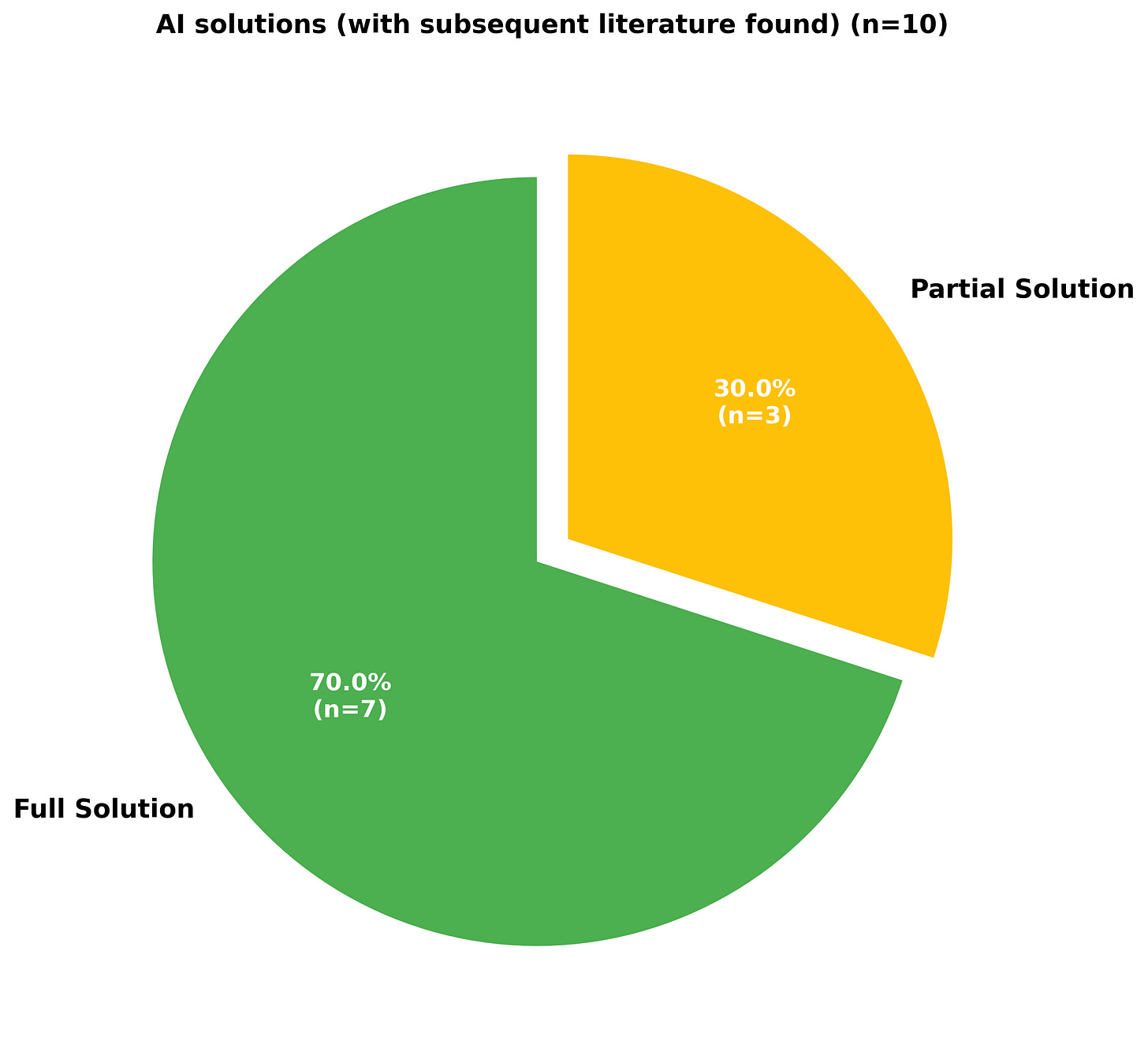

In other cases, a subsequent literature review revealed that the problem already had a partial solution or alternate full solutions, as shown in Figure 3. In only 1 case was the AI able to generate a full solution for a problem that had only a partial solution in past literature. It is also difficult to say whether the AI arrived at its solution independently or was influenced by prior literature. At best, we can say that AI is currently better at "re-synthesizing" existing mathematical knowledge than venturing into "uncharted territory."

According to Terence Tao, “AI-written proofs have improved to the point of being human-readable and technically correct, but still feel slightly ‘off’, for instance sometimes stressing easy statements and being overly brief on core aspects of the argument”. AI often treats a foundational axiom and a complex lemma with the same weight. Point 5 of the disclaimer section in the wiki stresses how a problem solved by an AI tool should be received:

“If an Erdős problem was first posed N years ago, and was recently solved by an AI tool, it may be misleading to make announcements such as “this problem resisted all human efforts at proof for N years” in order to imply that the problem is particularly difficult. Such an announcement may be technically true, especially if a comprehensive literature review turns up no significant prior literature on the problem, but it is also possible that this particular question received very little attention from human mathematicians during this time period, and the absence of any past progress may therefore be more a reflection of the obscurity of the problem than of its difficulty. (If on the other hand there is substantial literature expending significant effort on establishing partial results, without generating a clear path to a full solution, this is more convincing evidence of difficulty than mere absence of literature.)”

The Researcher’s Toolkit

Here are some AI pitfalls that they have observed:

Takes a problem’s status as open for granted based on the available literature

Confuses the problem with closely related problems

Lacks the remarks and observations found in human-generated proofs

AI-math slop/Hallucination

Weak performance on problems in other subdomains (e.g., geometry)

Usefulness of the solution

So before you take the output of an AI tool seriously for research, they recommend working through the following checklist for rigor before announcing your solution. This guide also applies to non-experts in the field and, by extension, to other fields of research, as AI tools have lowered the barriers to entry and participation:

Do I understand why this question was asked, and why the hypotheses and conclusions were formulated the way they were?

Have I performed my own literature review on the problem? How does this problem relate to other results and questions in the field?

Do I understand what the key ideas of the solution are, and how the hypotheses are utilized to reach the conclusion?

How does the proof method compare to that in past literature? What was the key new insight that allowed this solution to succeed when previous methods failed?

Can I generate a formalized version of the proof?

Did I submit the AI-generated proof to a separate AI tool and ask it to critically evaluate the proof for correctness?
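For readers unfamiliar with what a "formalized version" in the checklist above looks like, here is a toy illustration in Lean 4. It is deliberately trivial and unrelated to any Erdős problem; the theorem name `add_comm_example` is made up for this sketch, and it relies only on Lean's core library (no Mathlib).

```lean
-- Toy example: a statement and a proof that Lean checks mechanically.
-- `Nat.add_comm` is the core-library lemma for commutativity of addition.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, verified by computation.
example : 2 + 2 = 4 := rfl
```

The point of formalization is that the proof checker accepts nothing on trust: every step has to reduce to a previously established fact, which is exactly the kind of check a fluent but subtly wrong AI-written argument would fail.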

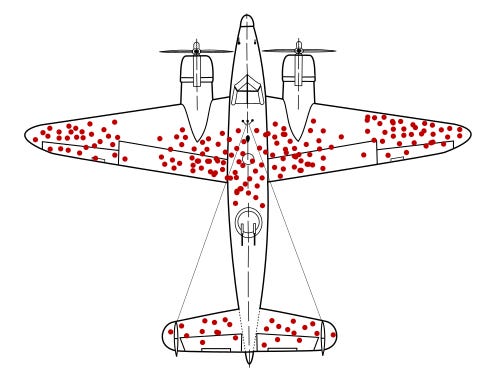

AI deep research tools have been successful in locating solutions to some previously “open” Erdős problems. They can find a needle in a haystack of existing literature that humans have forgotten. However, they are not yet architects capable of building entirely new mathematical frameworks. Based on that reality, Tao suggests that a more realistic and productive use of AI tools in research is crowdsourced literature review.

Conclusion

AI is excellent at re-synthesizing obscure knowledge, which is a massive win for productivity, even if it isn’t AGI. We are entering an era of "Citizen Mathematics."

Sources:

Wiki: AI contributions to Erdős problems

erdosproblems.com: TerrenceTao

erdosproblems.com: natso26
