Looking Ahead to AI Engineering in 2026

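This article predicts that in 2026 AI engineering will shift from the early hype around AI agents toward more reliable AI workflows, as the industry matures and separates solid foundations from speculative bets.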

AI Agent Engineering

What to Expect from the AI Engineering World in 2026

Hello there :) I’m writing to you from New Zealand, and as the new year countdown begins here before the rest of the world, let me invite you into 2026 with some thoughts on how AI Engineering will change.

2025 was crazy. We saw model releases that felt like magic, startups raising nine-figure rounds on the promise of “AI agents,” and every company scrambling to bolt ChatGPT onto their product. But the hype cycle is maturing, and in 2026 we’re about to see who’s been building on solid ground versus who’s been stacking cards.

Here’s what I’m watching for.

1. AI Workflows Will Beat AI Agents (Most of the Time)

Agents are autonomous systems that can reason, plan, and execute complex tasks. But they don’t really work reliably enough for most business use cases.

Think of it as a spectrum:

On one end, you have fully autonomous agents: unconstrained systems that can theoretically follow any path to try and get the job done.

On the other end, you have tightly controlled workflows: step-by-step automations that handle specific, well-defined tasks within guardrails.

Most teams are learning the hard way that they jumped to agents too quickly. They built these ambitious systems without figuring out how to measure accuracy, how to debug when things go wrong, or how to prevent the agent from doing something catastrophic. (If you’re stuck in this situation and need help getting unstuck, I’m happy to help.)

The smart money in 2026 is moving toward constrained workflows. Yes, they require more upfront design thinking. Yes, you need to map out the logic and decision trees. But they actually work. They’re maintainable. They don’t hallucinate your customer data as much as agents do.

When should you use agents versus workflows?

If the task has high variability and unclear success criteria, agents can be great. For example, most AI coding systems are agentic by design, and that works great for them.

But if you need reliability, predictability, or you’re dealing with anything that touches money, data, or customer trust — build a workflow. Chain your LLM calls together with explicit logic, validation steps, and human checkpoints where they matter.
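To make "explicit logic, validation steps, and human checkpoints" concrete, here is a minimal sketch of a constrained workflow in Python. Everything in it is an assumption for illustration: the refund scenario, the `refund_workflow` and `parse_amount` names, the prompts, and the 100.0 review threshold are all hypothetical, and `llm` is just any function that takes a prompt and returns text, so you would swap in your own provider's client.

```python
import math
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for a real model call: prompt in, text out.
LLM = Callable[[str], str]


@dataclass
class RefundDecision:
    approved: bool
    amount: float
    needs_human: bool
    reason: str


def parse_amount(raw: str) -> float:
    """Validation step: accept only a finite, positive number."""
    amount = float(raw.strip().lstrip("$"))  # raises ValueError on free text
    if not math.isfinite(amount) or amount <= 0:
        raise ValueError(f"invalid amount: {raw!r}")
    return amount


def refund_workflow(ticket: str, llm: LLM, human_review_above: float = 100.0) -> RefundDecision:
    # Step 1: one narrow classification call with a closed set of answers.
    label = llm(f"Answer REFUND or OTHER only.\n\nTicket: {ticket}").strip().upper()
    if label != "REFUND":
        return RefundDecision(False, 0.0, False, "not a refund request")

    # Step 2: one extraction call, then validate the output in plain code.
    raw = llm(f"Reply with the requested refund amount as a number only.\n\nTicket: {ticket}")
    try:
        amount = parse_amount(raw)
    except ValueError:
        return RefundDecision(False, 0.0, True, "could not validate amount")

    # Step 3: human checkpoint for anything above the auto-approve limit.
    if amount > human_review_above:
        return RefundDecision(False, amount, True, "above auto-approve limit")
    return RefundDecision(True, amount, False, "auto-approved")
```

The model never decides what happens next. Ordinary code does, which is what makes each step measurable and debuggable on its own.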

Thanks for reading AI Agent Engineering! Subscribe for free to receive new posts.

2. The AI Bubble Won’t Pop (Yet), But Expect Corrections

If you look at the numbers, there are definitely bubble characteristics. Nearly two-thirds of U.S. venture capital deal value in early 2025 came from AI companies. Semiconductor valuations are at record price-to-sales ratios. There’s a lot of circular deal-making where big tech companies invest in startups that then spend that money right back on the investor’s cloud infrastructure.

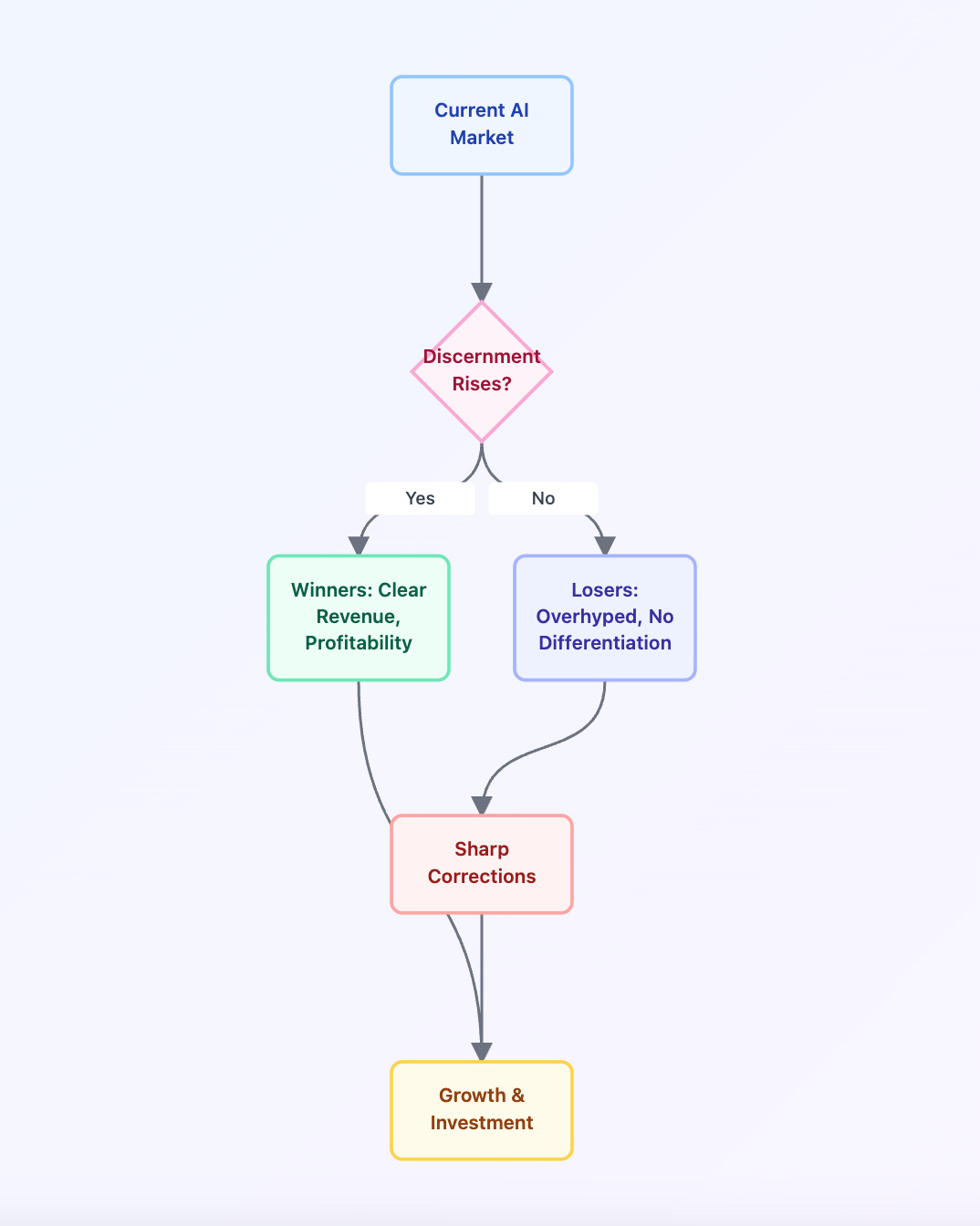

Classic bubble behaviour. What I expect in 2026 is a splintering. The market will get a lot more discerning. Companies with clear AI-driven revenue, real customers, and paths to profitability will keep growing. The overhyped players, such as the ones promising AGI in six months or the hundredth “AI copilot for X” with no differentiation, will see sharp corrections.

I’d expect rotation away from the crowded names. Expect bigger spreads between winners and losers. The entire sector won’t crater, but individual companies absolutely will.

3. Open Source Continues Its March Forward

One of the most important trends from 2024 will accelerate in 2026: open source models are getting scary good.

We’ve reached a point where open-weight models from Meta, Mistral, DeepSeek and others are genuinely competitive with closed alternatives for many tasks. And they’re only getting better. The gap between GPT-4 and open models was enormous in 2023. By late 2024, that gap had narrowed to a crack. In 2026, for a lot of use cases, it’ll be gone.

This matters because it fundamentally changes the economics. When you can run a capable model on your own infrastructure (or cheap inference providers), you’re not locked into per-token pricing from the big labs. You can optimise. You can fine-tune.

You can compete on innovation and domain expertise instead of just who has the deepest pockets.

The proprietary labs will still have their place: they’re pushing the frontier on scale and capability. But 2026 will prove that you don’t always need the biggest, most expensive model. Sometimes good enough, fast, and cheap wins.


4. Small Language Models Will Actually Be Useful

2026 is the year of the small language model (SLM).

We’ve been in an arms race of scale. Bigger models, more parameters, more compute. But that’s changing. Labs are realising that training highly specialised, smaller models for specific tasks often beats throwing a general-purpose giant at everything.

Why? Speed, cost, and accuracy. An SLM trained specifically for, say, code review or SQL generation can outperform GPT-5 on that narrow task while running in milliseconds and costing pennies. You can deploy it on-device. You can run it a million times a day without breaking the bank.

This doesn’t mean general-purpose LLMs are going away. But the exciting innovation in 2026 will come from companies figuring out how to decompose problems into specialized steps, each handled by a focused model.

Think of it like microservices for AI: instead of one monolithic model doing everything, you orchestrate a team of specialists.
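Here is a rough sketch of what that orchestration could look like in Python. It is an illustration under stated assumptions, not a reference design: `SpecialistRouter`, `run_pipeline`, and the task names are hypothetical, and each "model" is just a function from prompt to text, standing in for whatever small model you actually deploy.

```python
from typing import Callable, Dict, List

# Hypothetical: any function from prompt to text can act as a model here.
Model = Callable[[str], str]


class SpecialistRouter:
    """Dispatches each task to a registered specialist, else to a fallback."""

    def __init__(self, fallback: Model) -> None:
        self._specialists: Dict[str, Model] = {}
        self._fallback = fallback

    def register(self, task: str, model: Model) -> None:
        self._specialists[task] = model

    def run(self, task: str, prompt: str) -> str:
        # Unknown tasks go to the general-purpose fallback model.
        model = self._specialists.get(task, self._fallback)
        return model(prompt)


def run_pipeline(router: SpecialistRouter, steps: List[str], initial_input: str) -> str:
    """Runs the steps in order, feeding each output into the next step."""
    text = initial_input
    for task in steps:
        text = router.run(task, text)
    return text
```

Usage is one `register` call per specialist (say, a SQL generator and a reviewer), followed by `run_pipeline(router, ["sql", "review"], question)`. Because each step sits behind a name, you can swap or retrain one specialist without touching the others.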

5. Regulation Finally Catches Up (And It’s Not Uniform)

The EU AI Act entered into force in August 2024, but its teeth are really showing up now. Most of the framework applies by August 2026, with full enforcement by 2027. If you’re building AI products for European customers, you can’t ignore this anymore.

But here’s the messy part: regulation is fragmented. The EU has strict rules. The U.S. is taking a lighter touch with sector-specific guidelines. Asia is all over the map. This creates real operational complexity—you can’t just build one AI system and deploy it everywhere.

For larger companies, this is annoying but manageable. For startups, it’s a minefield. But it also creates moats. Companies that figure out how to build “regulation-aware” AI systems will have a significant advantage.

Privacy, copyright, and liability are no longer edge cases. If you’re not thinking about them now, you’ll be rewriting everything in 2026.

6. The “AI Engineer” Role Solidifies

For the past couple of years, “AI engineer” has been this fuzzy catch-all term. Are you a data scientist? A software engineer who uses LLMs? A prompt engineer? Nobody really knew.

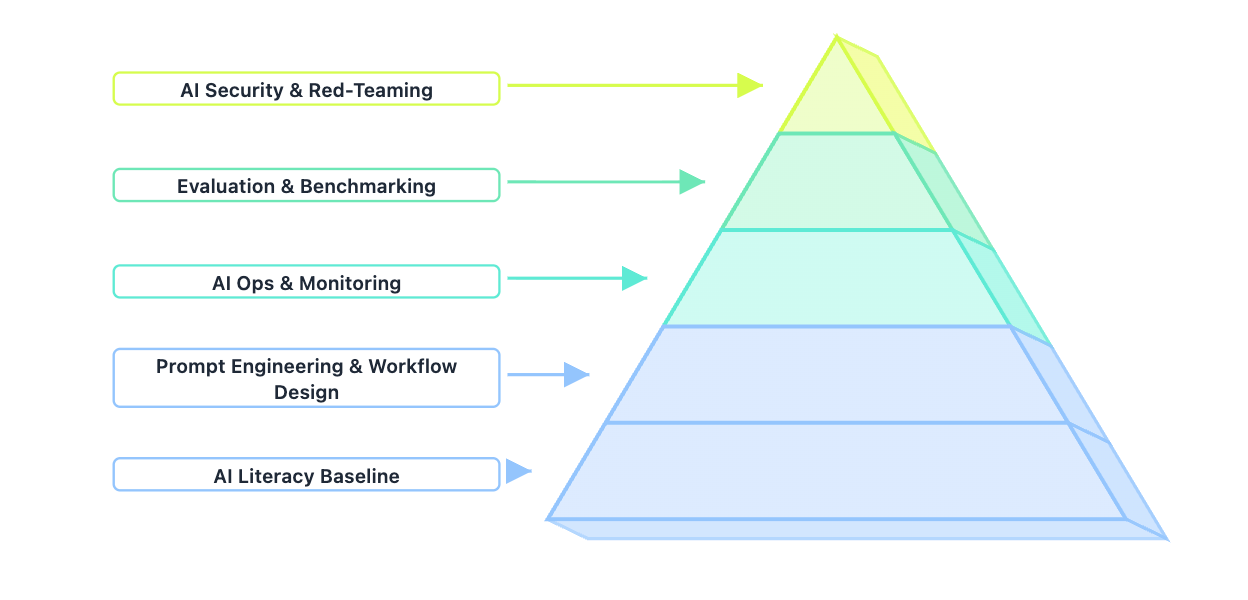

That’s changing. In 2026, AI engineering becomes a real discipline with clear subspecialties. You’ll see distinct career paths emerge: prompt engineering and workflow design, AI ops and monitoring, AI security and red-teaming, evaluation and benchmarking specialists.

Traditional software engineering roles will increasingly require AI literacy as a baseline, the same way web development eventually required understanding APIs and databases. You won’t be able to ignore it.

7. Context Windows Get Huge, But Memory Is the Real Game

Bigger context windows don’t solve the memory problem. They just make it more expensive.

The real innovation in 2026 will be intelligent memory systems. Not just “cram everything into context,” but sophisticated architectures that know what to remember, what to forget, what to surface when, and how to retrieve it efficiently. Think of it like the difference between taking notes on every single thing someone says versus actually remembering the important parts.

RAG (Retrieval-Augmented Generation) will evolve way beyond “chunk documents and stuff them in a vector database.” We’ll see hierarchical memory, episodic versus semantic storage, and systems that can reason about what information is actually relevant. (If you need help designing better retrieval systems, talk to me.)

The companies that figure this out will have AI that feels like it actually knows you, rather than just having access to a lot of text about you.
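A toy version of "what to remember, what to forget, what to surface" might look like the Python sketch below. Treat it as a thought experiment rather than a real architecture: the `Memory` and `MemoryStore` names, the caller-assigned `importance` score, the half-life decay, and the keyword-overlap scoring (a crude stand-in for embedding similarity) are all assumptions I made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    kind: str          # "episodic" (what happened) or "semantic" (durable fact)
    importance: float  # 0..1, assigned by the caller (an assumption)
    created_at: float  # seconds on any consistent clock


class MemoryStore:
    def __init__(self, half_life_s: float = 3600.0, min_strength: float = 0.05) -> None:
        self.items: List[Memory] = []
        self.half_life_s = half_life_s
        self.min_strength = min_strength

    def _strength(self, m: Memory, now: float) -> float:
        # Semantic facts do not decay; episodic ones halve every half_life_s.
        if m.kind == "semantic":
            return m.importance
        age = max(0.0, now - m.created_at)
        return m.importance * 0.5 ** (age / self.half_life_s)

    def remember(self, m: Memory) -> None:
        self.items.append(m)

    def forget_stale(self, now: float) -> int:
        """Drops memories whose strength fell below the floor; returns the count."""
        before = len(self.items)
        self.items = [m for m in self.items if self._strength(m, now) >= self.min_strength]
        return before - len(self.items)

    def recall(self, query: str, now: float, k: int = 3) -> List[Memory]:
        """Ranks by keyword overlap times strength, a stand-in for real retrieval."""
        words = set(query.lower().split())
        scored = []
        for m in self.items:
            overlap = len(words & set(m.text.lower().split()))
            if overlap:
                scored.append((overlap * self._strength(m, now), m))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [m for _, m in scored[:k]]
```

The design choice worth noticing is that retrieval score and retention policy share one notion of strength, so old small talk fades while durable facts keep surfacing.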

8. AI Testing and Evaluation Grow Up

It’s been embarrassing how we’ve been evaluating AI systems. A lot of companies are still doing “vibe checks” by running a few examples, seeing if the output feels right, and calling it good.

That doesn’t cut it anymore. In 2026, systematic evaluation becomes standard practice. Companies build internal benchmarks specific to their use cases. They track accuracy, consistency, cost, and latency over time. They have regression tests that catch when a model update breaks something.

This is actually great news. It means the industry is maturing. We’re moving from science experiments to engineering discipline.
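If you want a starting point that beats a vibe check, something like this Python sketch is enough to get going. It is deliberately minimal and built on assumptions: `evaluate`, `check_regression`, exact-match scoring, and the 0.02 accuracy tolerance are hypothetical choices for illustration, and `model` is any function from prompt to text.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical: any function from prompt to text can be evaluated.
Model = Callable[[str], str]


@dataclass
class EvalReport:
    accuracy: float
    mean_latency_s: float
    failures: List[str]  # prompts whose output did not match


def evaluate(model: Model, cases: List[Tuple[str, str]]) -> EvalReport:
    """Runs every (prompt, expected) case and records accuracy and latency."""
    if not cases:
        raise ValueError("need at least one test case")
    failures: List[str] = []
    total_s = 0.0
    for prompt, expected in cases:
        start = time.perf_counter()
        output = model(prompt)
        total_s += time.perf_counter() - start
        # Exact match is the simplest scorer; real suites often need fuzzier ones.
        if output.strip() != expected:
            failures.append(prompt)
    n = len(cases)
    return EvalReport((n - len(failures)) / n, total_s / n, failures)


def check_regression(baseline: EvalReport, candidate: EvalReport,
                     max_accuracy_drop: float = 0.02) -> bool:
    """True if the candidate is within tolerance of the baseline accuracy."""
    return candidate.accuracy >= baseline.accuracy - max_accuracy_drop
```

Run `evaluate` on the current model and on the proposed update with the same cases, then gate the rollout on `check_regression`. The `failures` list tells you which prompts to look at first.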

So, What Does This Mean for You?

If you’re building with AI in 2026:

Focus on reliability over hype. Build workflows, not agents (until you really know you need agents). Embrace open source. Think hard about evaluation and testing from day one.

If you’re a developer: now’s the time to level up on AI engineering fundamentals. Learn how to design, evaluate, monitor, and debug AI systems. Understand the tradeoffs between different model sizes and architectures. Get comfortable with the fact that this is a rapidly moving field and continuous learning isn’t optional.

2026 is going to be fascinating. The easy money is gone. The obvious ideas are played out. What’s left is the hard, interesting work of actually building AI systems that create real value.

I can’t wait to see what you build.

Thanks for reading my work and Happy New Year from New Zealand. 🎉

Want to chat about your AI strategy, or need help with agent and eval design? Book time with me here.
