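'It's AI blackface': social media account hailed as the Aboriginal Steve Irwin turns out to be an AI-generated character

A popular social media account called "Bush Legend", which attracted more than 180,000 followers by depicting an Aboriginal version of Steve Irwin exploring Australian wildlife, has been revealed to feature an AI-generated fictional character created in New Zealand.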

‘It’s AI blackface’: social media account hailed as the Aboriginal Steve Irwin is an AI character created in New Zealand

More than 180,000 people follow the Bush Legend’s accounts across Meta platforms, but its Aboriginal host is a work of digital fiction


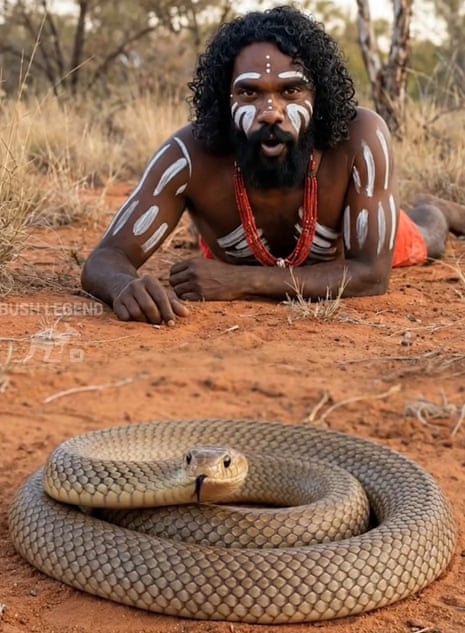

With a mop of dark curls and brown eyes, Jarren stands in the thick of the Australian outback, red dirt at his feet, a snake unfurling in front of him.

In a series of online videos, the social media star, known online as the Bush Legend, walks through dense forests or drives along deserted roads on the hunt for wedge-tailed eagles. Many of the videos are set to pulsating percussion instruments and yidakis (didgeridoo).

His voice sounds like a cross between Gardening Australia’s Costa Georgiadis and Steve Irwin. His speech is peppered with “mate” and “crikey” as he passionately shares snippets with thousands of his followers about Australian wildlife – from venomous snakes and crocodiles to redback spiders and the elusive night parrots, once thought extinct.

His followers leave admiring comments, marvelling at how he can get so close to the animals and even suggesting he needs his own TV show.

But none of it is real. The wildlife and the man presenting them are all creations of AI.

Meta indicates the account was created in October 2025 and is based in New Zealand, with the Instagram account originally operating as an AI-generated satirical news account called ‘Nek Minute News’ before pivoting to wildlife content. Earlier incarnations of Bush Legend show the character wearing white body paint seeming to mimic ochre, a beaded necklace adorning his neck.

As of this week, the Bush Legend account has 90,000 followers on Instagram and 96,000 on Facebook. It says its focus is on building awareness and education about Australian wildlife.

Guardian Australia has contacted the person believed to have created the account, who is a South African living in New Zealand. They did not respond to multiple approaches.

‘Cultural flattening’

The choice to create an avatar of an Indigenous person has raised ethical concerns.

Dr Terri Janke, a lawyer and expert in Indigenous cultural and intellectual property, says the images and content are “remarkable” in their realism.

“You think it’s real, I was just scrolling through and I was like, ‘How come I’ve never heard of this guy?’ He’s deadly, he should have his own show,” she says. “Is he the Black Steve Irwin? In his greens or the khakis, he’s a bit like Steve Irwin meets David Attenborough.”

But while the Wuthathi, Yadhaigana and Meriam woman says the engaging videos are “pretty incredible when you look at it as a tool for education”, the creation of a seemingly Indigenous avatar is offensive and carries a risk of “cultural flattening”.

“Whose personal image did they use to make this person? Did they bring together people?” she asks. “I feel a bit misled by it all.”

AI-generated content poses a particular risk to marginalised communities and could be considered theft of cultural and intellectual property. It also potentially takes opportunities away from authentic accounts, such as videos created by the vast network of Aboriginal rangers.

“It’s theft that is very insidious in that it also involves a cultural harm,” Janke says. “Because of the discrimination … the impacts of stereotypes and negative thinking, those impacts do hit harder.”

Janke says it is possible to ethically use AI technology to create content about First Nations people, but it requires the consent and involvement of First Nations people.

Tamika Worrell, a senior lecturer in critical Indigenous studies at Macquarie University, says the AI avatar is a form of cultural appropriation and “digital blackface” where a non-Black person creates a Black or Indigenous caricature online.

The Kamilaroi woman says the proliferation of AI tools without appropriate legislative guardrails means that images, cultural knowledge and stories can be transmitted without appropriate consent.

“AI becomes this new platform that we have no control or no say in it,” she says.

“Not only stories or language but actual visuals of us can often be taken from people that have passed away – or just blending a range of different people [to create an AI avatar] with no kind of accountability to the communities that these people are from.

“It’s AI blackface – people can just generate artworks, generate people, [but] they are not actually engaging with Indigenous people.”

The potential for harm is twofold: such accounts default to sharing the “palatable” or “comfortable” aspects of Indigenous cultural knowledge and experience, rather than the more complex reality; and it also has the potential to amplify racism.

“I was looking at the comments from a latest post of Bush Legend. We see the same racist comments that we know mob online get. We see it again applied to an AI person as well,” she says.

Toby Walsh, laureate fellow and scientia professor of artificial intelligence at the University of New South Wales, says AI is trained to reproduce information and imagery from large-scale data sets with inbuilt biases, meaning it’s not immune to racist or prejudicial content.

“They are going to carry the biases of that training data,” he says. “Certain groups may be stereotyped because the video data or the image data that exists in that group online is somewhat stereotypical. So we’re going to perpetuate that stereotype moving forwards.”

Guardian Australia attempted to contact the page’s creator through multiple social media accounts and email but was yet to receive a response.

The Bush Legend account has seemingly addressed the criticism through its avatar, saying the page doesn’t seek to “represent any culture or group”. “This channel is simply about animal stories,” the AI creation said in a video last week.

It went on to say the page “isn’t asking for money, donations or support” and content is “free to watch”, and suggested people “scroll on” if they don’t like it. Earlier plugs asked followers to subscribe for $2.99 per month.

Meta has been contacted for further comment.

Walsh says while digital literacy can assist users with identifying AI content, the “tells” that help identify it are getting increasingly hard to spot. “If not now, in the very near future, it’s going to be next to impossible to be able to identify for yourself whether this was real or fake,” Walsh says.

“We used to believe in things that we see, because it used to be the things that you saw were largely real things.

“Now it’s not hard to fake stuff. It’s incredibly easy to fake stuff in a very convincing way, so we’re going to stretch the boundaries of what is true and false.”
