AI Chip Sales Data

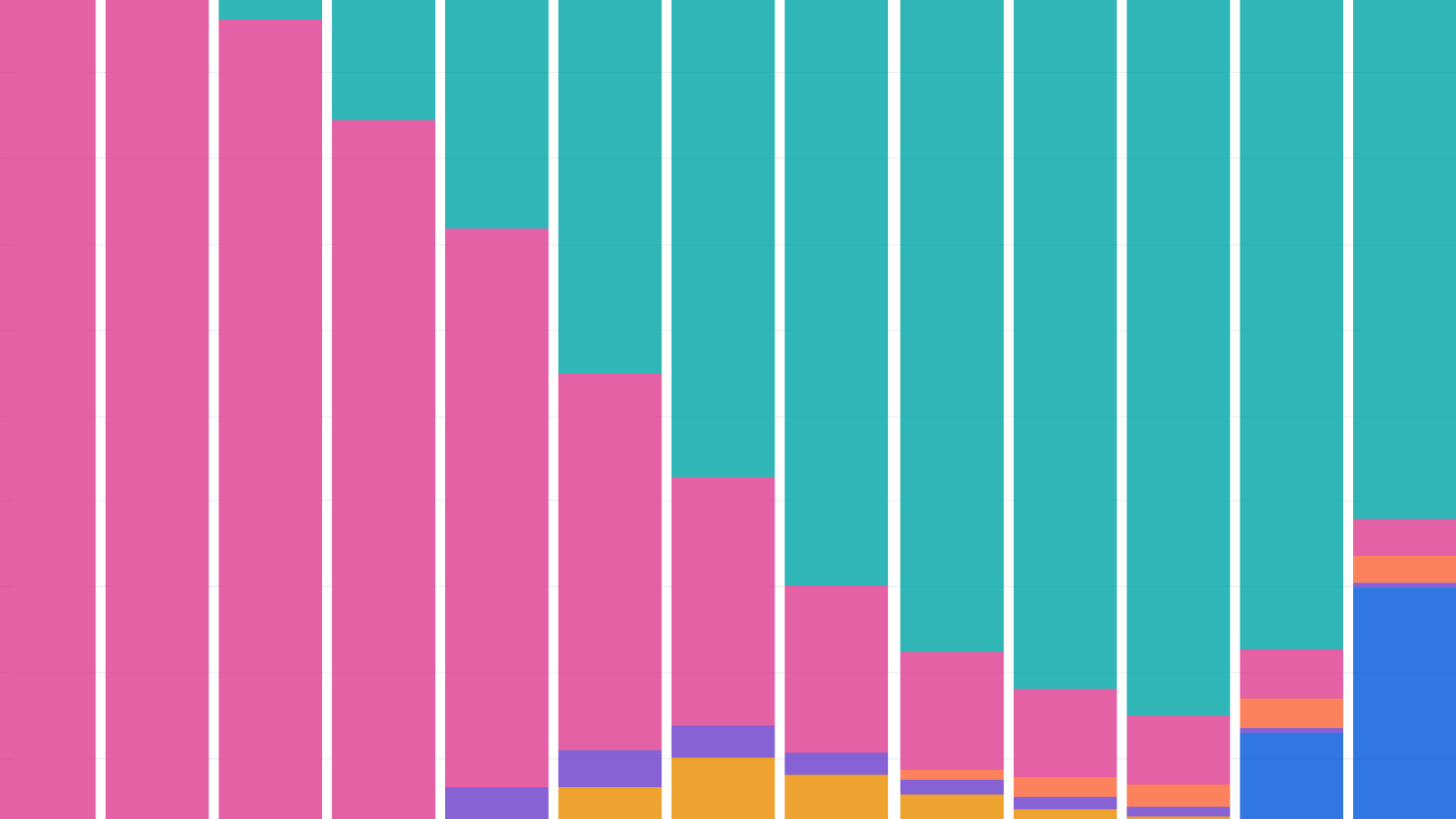

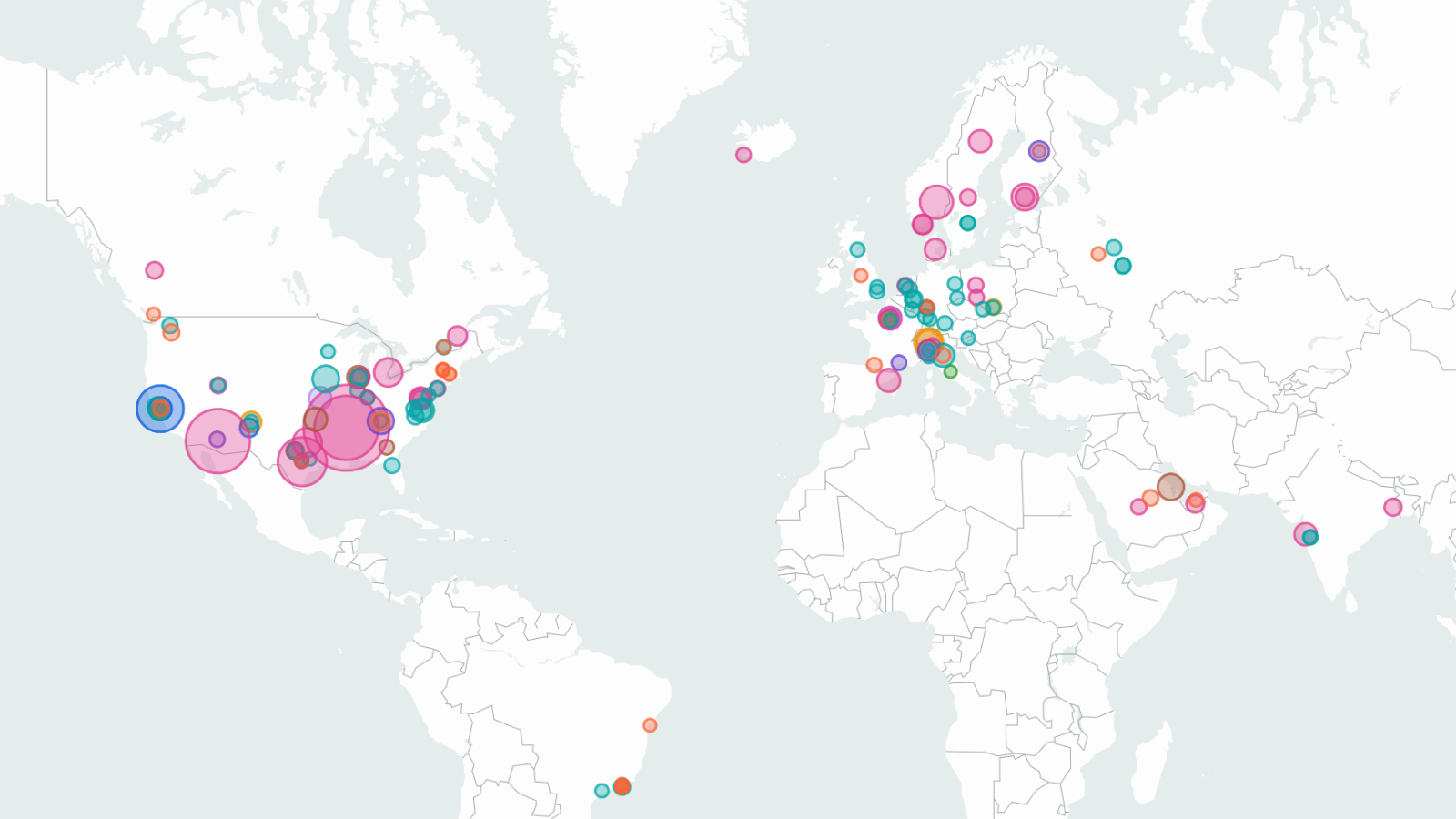

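Epoch AI has released an open database that estimates AI chip sales, covering compute capacity, power usage, and spending over time, based on financial reports and company disclosures. The dataset breaks down sales volumes by chip manufacturer, specific chip model, and time period, and confidence levels vary by chip designer.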

AI Chip Sales

Our open database of AI Chip sales, using financial reports, company disclosures, and more to estimate compute, power usage, and spending over time for a wide variety of AI chips.

Last updated January 9, 2026

FAQ

What does this dataset measure?

Our AI Chip Sales dataset compiles our estimates of the number of dedicated AI accelerators that have been sold or shipped by major chip designers over time. This is broken down by chip manufacturer, by specific chip model, and by time period (mainly fiscal or calendar quarters).

How do you estimate production volumes for different manufacturers?

Our methodology varies by designer based on available data. Please refer to the Documentation section for a detailed overview of our methodology for each chip designer.

How confident are you in these estimates?

Our figures are estimates, because chip companies do not consistently disclose exact sales, though Nvidia has provided the most informative direct disclosures on total Hopper and Blackwell sales.

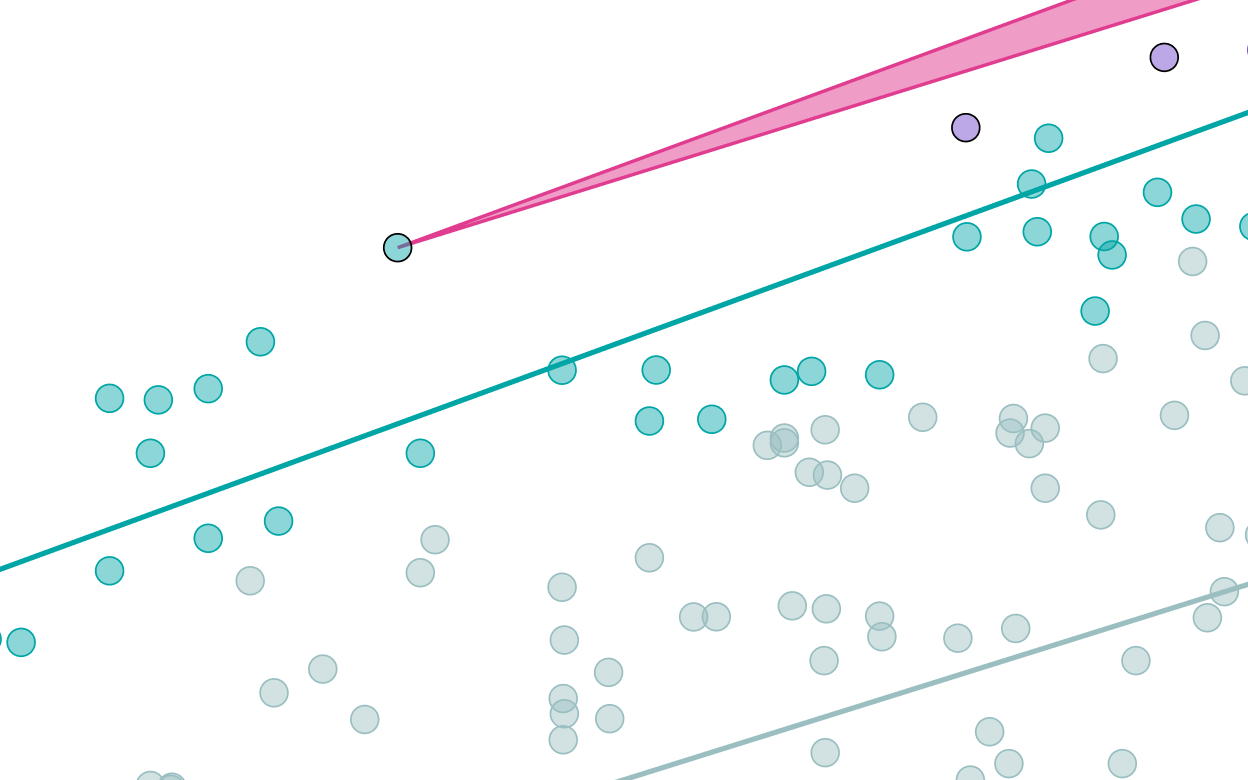

When feasible, we model our uncertainty in 90% confidence intervals. These can be viewed in the tooltips and in the table view; most of our intervals span a factor of roughly 2x, or ~1.5x in either direction from the median.
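The relationship between "~1.5x in either direction" and "a factor of roughly 2x" can be checked with a little arithmetic. The sketch below is illustrative only: the function name and the median of one million chips are hypothetical, and it assumes the interval is symmetric in multiplicative terms around the median.

```python
def interval_from_factor(median, factor=1.5):
    """Return (low, high) for an interval reaching `factor`x either way from the median."""
    return median / factor, median * factor


# Hypothetical median estimate, not a figure from the dataset.
low, high = interval_from_factor(1_000_000)
span = high / low  # equals factor squared: 1.5 * 1.5 = 2.25, i.e. roughly 2x

print(f"low={low:,.0f}  high={high:,.0f}  span={span:.2f}x")
```

Under that assumption, the ratio between the upper and lower bounds is the square of the one-sided factor.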

Our uncertainty, and the robustness of our methodologies, varies by chip designer. We have the highest confidence in our Nvidia estimates, which are based on Nvidia-reported compute revenue and extensive media and analyst coverage, and corroborated by direct disclosures from Nvidia of Hopper and Blackwell shipments. We are least confident in our Amazon Trainium estimates; while large-scale Trainium data centers provide a robust floor on volumes, our central estimates rely on indirect evidence and fairly limited analyst coverage.

For more information on how we produce our estimates, see our methodology page.

What does "H100e" compute capacity mean?

H100e (H100-equivalent) compute capacity is total computing power measured in terms of the equivalent number of Nvidia H100 GPUs. We divide the peak number of dense 8-bit operations (FP8 or INT8) each chip can perform per second by the Nvidia H100’s spec, and then multiply this ratio by the number of chips. For example, since a TPUv7 can perform ~2.3x as many operations as an H100, one million TPUv7s have a compute capacity of 2.3 million H100e.
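As a minimal sketch of that calculation, the function below divides a chip's peak throughput by the H100's and scales by the chip count. The function and variable names are hypothetical, and throughput is expressed in normalized units (H100 = 1.0) so that only the ~2.3x ratio quoted above is used, not any specific spec-sheet value.

```python
def h100_equivalents(chip_ops_per_sec, h100_ops_per_sec, num_chips):
    """Convert a chip count into H100-equivalents using peak dense 8-bit throughput."""
    return chip_ops_per_sec / h100_ops_per_sec * num_chips


# Normalized units: the H100 is 1.0, and a TPUv7 is ~2.3x that (ratio from the text).
H100_OPS = 1.0
TPUV7_OPS = 2.3

tpu_h100e = h100_equivalents(TPUV7_OPS, H100_OPS, num_chips=1_000_000)
print(f"{tpu_h100e:,.0f} H100e")
```

In practice both throughput arguments would be the dense FP8 or INT8 peak figures for the chips being compared, in the same units.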

We use H100e because citing a reference chip is much more intuitive than total operations per second. The H100 is chosen because it was the most widely used AI chip in 2023 and 2024.

We choose 8-bit number formats since our understanding is that they are now widely used for inference, and for training at major AI labs (see more on number format trends here). Alternative metrics such as different bit counts or total processing performance (operations x bit width) can lead to different performance ratios between chip types, but in most cases this choice does not matter because peak operations per second usually scales inversely with the bit width of the number format.

Is H100-equivalent compute a true measure of relative chip performance?

No, this is an imperfect proxy based on specifications on paper. While operations/second is the single most useful metric because the basic purpose of AI chips is to perform computer operations, real-world performance can diverge from paper specs for several reasons:

How are chip costs calculated?

Chip costs are based on approximate average purchase prices for each chip type, multiplied by the number of chips. This price is the price paid by the final customer or user, not the underlying production cost to the chip designer or manufacturer. These figures are intended as a rough reference, and we don’t currently implement confidence intervals for total chip costs.

For AI chips that are sold directly to external customers, such as Nvidia’s and AMD’s, this is the price paid by those customers, synthesized from reports or analyst estimates. For custom chips such as Google’s TPU, this is the estimated price paid to the relevant supply partners (e.g. Broadcom). This is a fair comparison while Google is the sole direct customer for TPUs, but once TPUs are sold to external customers such as Anthropic in 2026, future chip costs will be adjusted to account for the average price paid by all TPU customers.

Note that chip prices do not represent the full capital cost of AI hardware, due to the substantial overhead costs of servers, compute clusters, and data center facilities. You can find more information about AI capital costs here.
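The cost calculation described above (average purchase price times units) can be sketched as follows. The chip names, prices, and unit counts are placeholders chosen for illustration and are not values from the dataset.

```python
def total_chip_cost(avg_price_usd, num_chips):
    """Total spending on one chip type: average purchase price times units sold."""
    return avg_price_usd * num_chips


# Placeholder inputs: (average price in USD, units). Not real estimates.
shipments = {
    "chip_a": (25_000, 400_000),
    "chip_b": (10_000, 150_000),
}

costs = {name: total_chip_cost(price, units) for name, (price, units) in shipments.items()}
total_cost = sum(costs.values())

for name, cost in costs.items():
    print(f"{name}: ${cost / 1e9:.1f}B")
print(f"total: ${total_cost / 1e9:.2f}B")
```

These totals cover chips only; server, cluster, and facility costs would have to be added separately.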

How is chip power measured?

The power figures are based on each chip’s thermal design power (TDP), which is a specification that roughly means the maximum possible sustained electric power draw of the chip. This is multiplied by the total quantity of a given chip.

Importantly, the total power draw of an AI data center is typically much higher (roughly twice as high) than the total TDP of the chips inside, due to overheads at the server, cluster, and facility level.
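A rough sketch of the power arithmetic: chip-level power is TDP times the number of chips, and facility-level power applies an overhead multiplier. The function names and the one-million-chip count are hypothetical, the 700 W TDP is an illustrative input, and the 2.0 multiplier simply encodes the "roughly twice as high" rule of thumb above.

```python
def total_chip_tdp_watts(tdp_watts, num_chips):
    """Aggregate thermal design power of a fleet of identical chips."""
    return tdp_watts * num_chips


def facility_power_watts(chip_tdp_total_watts, overhead_factor=2.0):
    """Approximate data center draw, assuming overhead roughly doubles chip TDP."""
    return chip_tdp_total_watts * overhead_factor


# Illustrative inputs: one million chips at 700 W each.
chip_power = total_chip_tdp_watts(700, 1_000_000)
site_power = facility_power_watts(chip_power)

print(f"chip TDP: {chip_power / 1e6:,.0f} MW, facility: {site_power / 1e9:.1f} GW")
```

The overhead factor is kept as a parameter because the actual ratio varies by server, cluster, and facility design.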

Do you track deployed, delivered, or just produced compute?

We strive to track the total number of chips that are delivered and ready for installation or use, but not necessarily already online in a data center. This can vary by designer in practice due to differences in available evidence:

In all cases, delivered chips may take additional time to be installed and brought online in data centers.

Does this track all AI chip production?

No. We focus on the largest designers of dedicated AI accelerators: Nvidia, Google (TPUs), Amazon (Trainium/Inferentia), AMD (Instinct series), and Huawei (Ascend series), prioritizing both overall volume and geographic diversity. Together, these account for the large majority of global AI compute capacity.

We do not currently track:

We may expand coverage as other manufacturers scale production or more data becomes available.

How far back does this data go?

Our data and estimates date back to 2022 for Nvidia, 2023 for Google, and 2024 for other chip designers. This is mainly due to greater availability of information over time. In addition, given a trend of rapid growth in the stock of computing power over time, the last two years of shipments would make up a large majority of the total compute stock even if chips had infinite lifetimes. We may later expand our coverage to earlier years.
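The claim about the last two years of shipments can be illustrated with a simple geometric-growth model. This is a sketch under stated assumptions, not the dataset's method: it assumes compute shipped per year has always grown by a constant factor and that chips never retire. The growth factors below are hypothetical.

```python
def recent_share(growth_per_year, years=2):
    """Share of the cumulative stock shipped in the last `years` years.

    Assumes yearly shipments grow by a constant factor indefinitely into the
    past and chips last forever, so the cumulative stock is a geometric series
    and the share simplifies to 1 - growth ** -years.
    """
    if growth_per_year <= 1:
        raise ValueError("the geometric series only converges for growth > 1")
    return 1 - growth_per_year ** -years


# Hypothetical growth factors, for illustration only.
share_doubling = recent_share(2.0)
share_tripling = recent_share(3.0)

print(f"2x/year: {share_doubling:.0%}, 3x/year: {share_tripling:.0%}")
```

Under this model, faster growth pushes the recent share higher, which is why older shipments matter less to the total stock.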

Do you account for chip failures or retirements?

We do not explicitly account for chip retirements. AI hardware generally lasts for several years, though exact figures are a matter of controversy, so many chips sold in 2023 or earlier are likely still in service. You can find more information on historical Nvidia AI chip production dating back to 2019 here.

How do you align data from different time periods?

Our bar chart visualization shows chip sales by calendar quarter. However, some underlying estimates don’t line up with calendar quarters, either because they’re based on fiscal quarters that don’t line up with the calendar (e.g. Nvidia’s last full fiscal quarter of 2025 ended in late October), or because our estimates are broken down by years or half-years rather than quarters.

In these cases, we interpolate the data to fit calendar quarters by assuming a constant rate of sales per time period in the underlying data. This method is simple but slightly imperfect, since chip sales have usually grown over time in recent years (or shrunk when a new generation was introduced).
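One way to implement this constant-rate interpolation is to split each reporting period across the calendar quarters it overlaps, in proportion to the number of days in each. The sketch below assumes day-level proration; the function names, the example fiscal-quarter dates, and the unit count are hypothetical and chosen only for illustration.

```python
from datetime import date, timedelta


def quarter_of(d):
    """Return (year, quarter) for a date."""
    return d.year, (d.month - 1) // 3 + 1


def next_quarter_start(year, quarter):
    """First day of the calendar quarter following (year, quarter)."""
    return date(year + 1, 1, 1) if quarter == 4 else date(year, 3 * quarter + 1, 1)


def allocate_to_calendar_quarters(start, end_inclusive, units):
    """Split `units` across calendar quarters, assuming a constant daily sales rate."""
    end_exclusive = end_inclusive + timedelta(days=1)
    total_days = (end_exclusive - start).days
    allocation = {}
    cursor = start
    while cursor < end_exclusive:
        key = quarter_of(cursor)
        segment_end = min(next_quarter_start(*key), end_exclusive)
        days = (segment_end - cursor).days
        allocation[key] = allocation.get(key, 0.0) + units * days / total_days
        cursor = segment_end
    return allocation


# Hypothetical fiscal quarter ending in late October, with 910 units sold.
split = allocate_to_calendar_quarters(date(2025, 7, 28), date(2025, 10, 26), 910)
for (year, quarter), value in sorted(split.items()):
    print(f"{year} Q{quarter}: {value:.0f}")
```

Because the daily rate is held constant within each period, growth or decline inside a period is smoothed out, which is the imperfection noted above.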

How is the data licensed?

Epoch AI’s data is free to use, distribute, and reproduce provided the source and authors are credited under the Creative Commons Attribution license.

Documentation

The AI Chip Sales hub tracks and estimates sales and shipments of leading AI chips over time, as well as their estimated total computing power, cost, and power draw. The analysis is primarily based on data on chip volumes, revenue, and prices sourced from company earnings commentary, analyst estimates, and media reports.

Read the full documentation here.

Related work

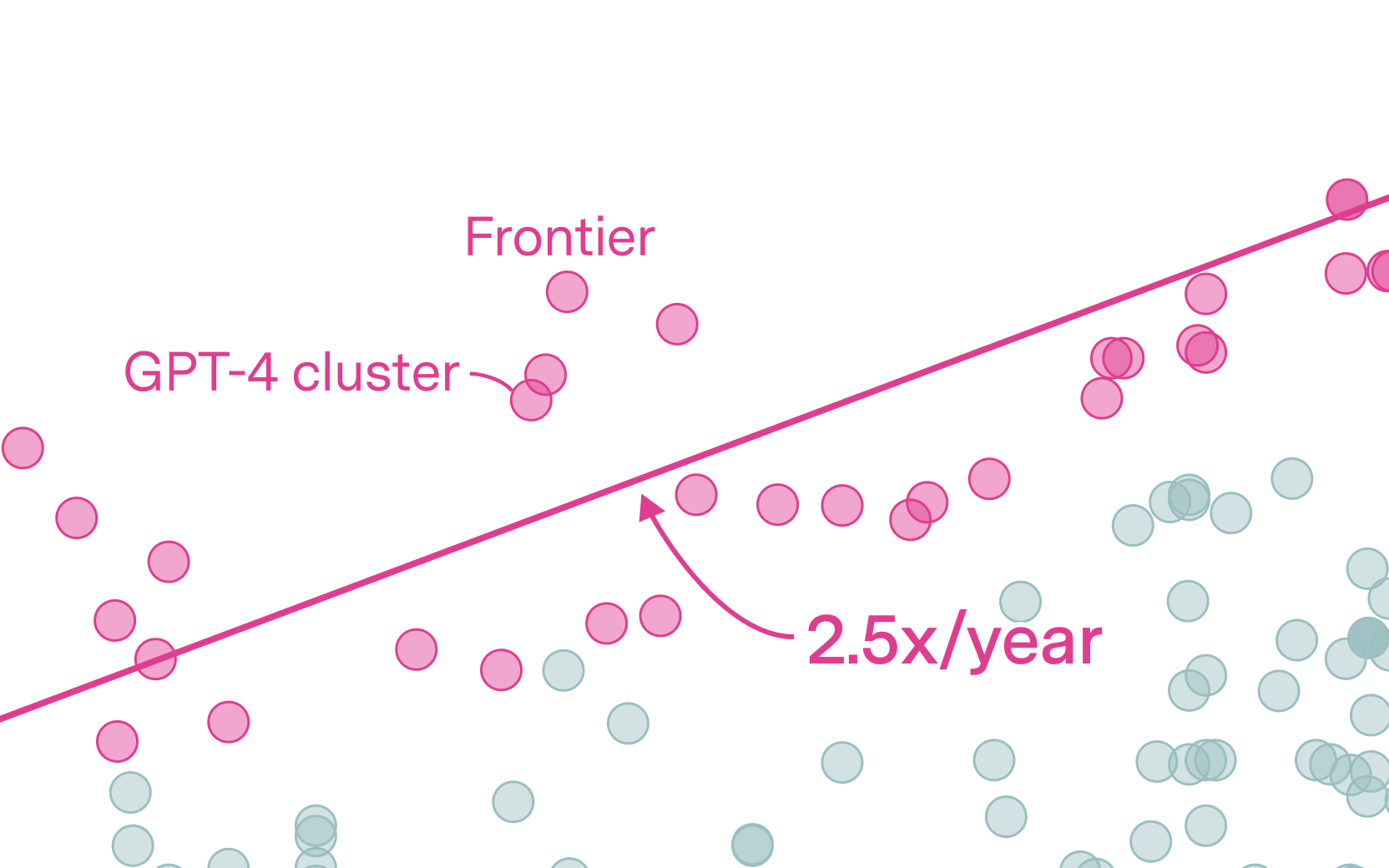

Compute Trends Across Three Eras of Machine Learning

How Much Does It Cost to Train Frontier AI Models?

Trends in AI Supercomputers

Explore other work

Data on AI Models

Our public database, the largest of its kind, tracks over 3200 machine learning models from 1950 to today. Explore data and graphs showing the trajectory of AI.

Updated Jan 6, 2026

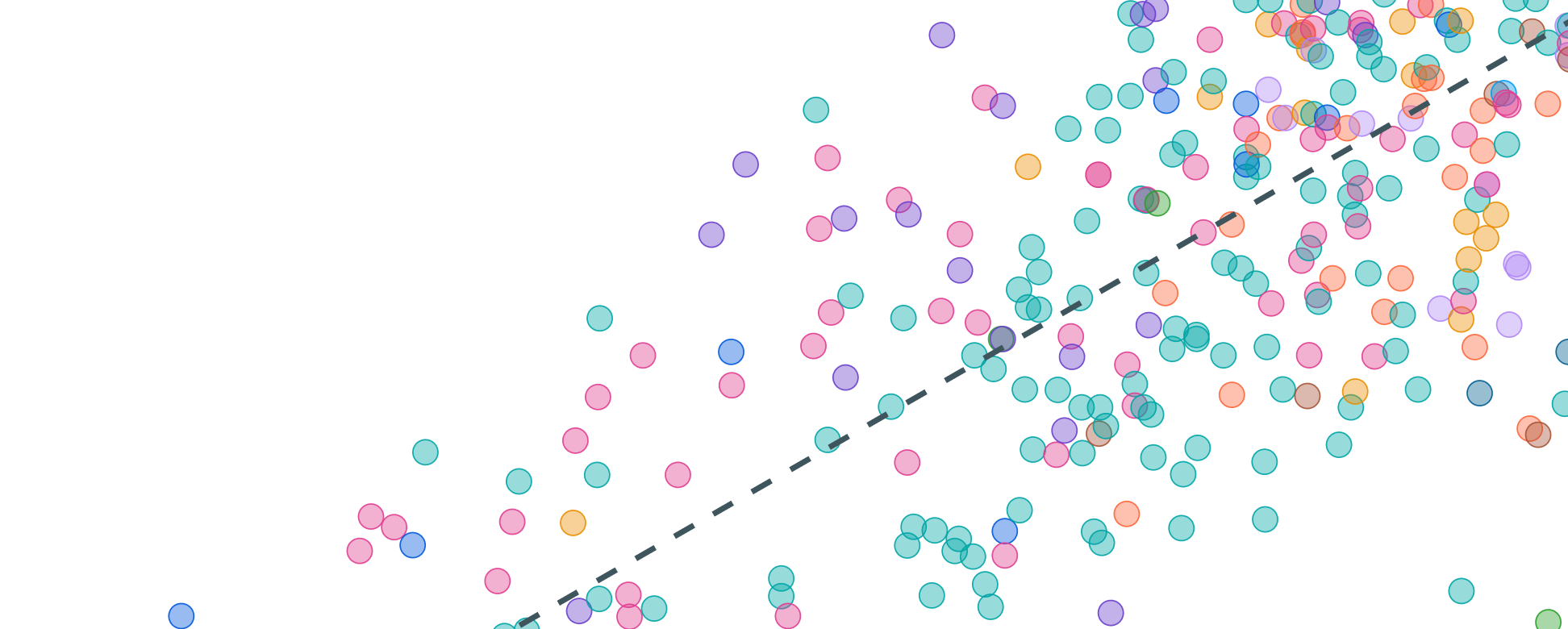

Data on GPU clusters

Our database of over 500 GPU clusters and supercomputers tracks large hardware facilities, including those used for AI training and inference.

Updated Dec 30, 2025
