AI Regulation: Fact or Fiction?

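This article clarifies common misconceptions about AI regulation, emphasizing that regulatory exposure arises from reliance on AI-generated statements in decisions with significant consequences, not from AI systems themselves. It highlights the EU AI Act's focus on use and impact, which requires organizations to be able to demonstrate the basis of reliance.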

AI Regulation: Fact and Fiction

What the EU AI Act, the U.S. Securities and Exchange Commission, and Global Regulators Actually Require When AI Is Relied Upon

Executive Summary

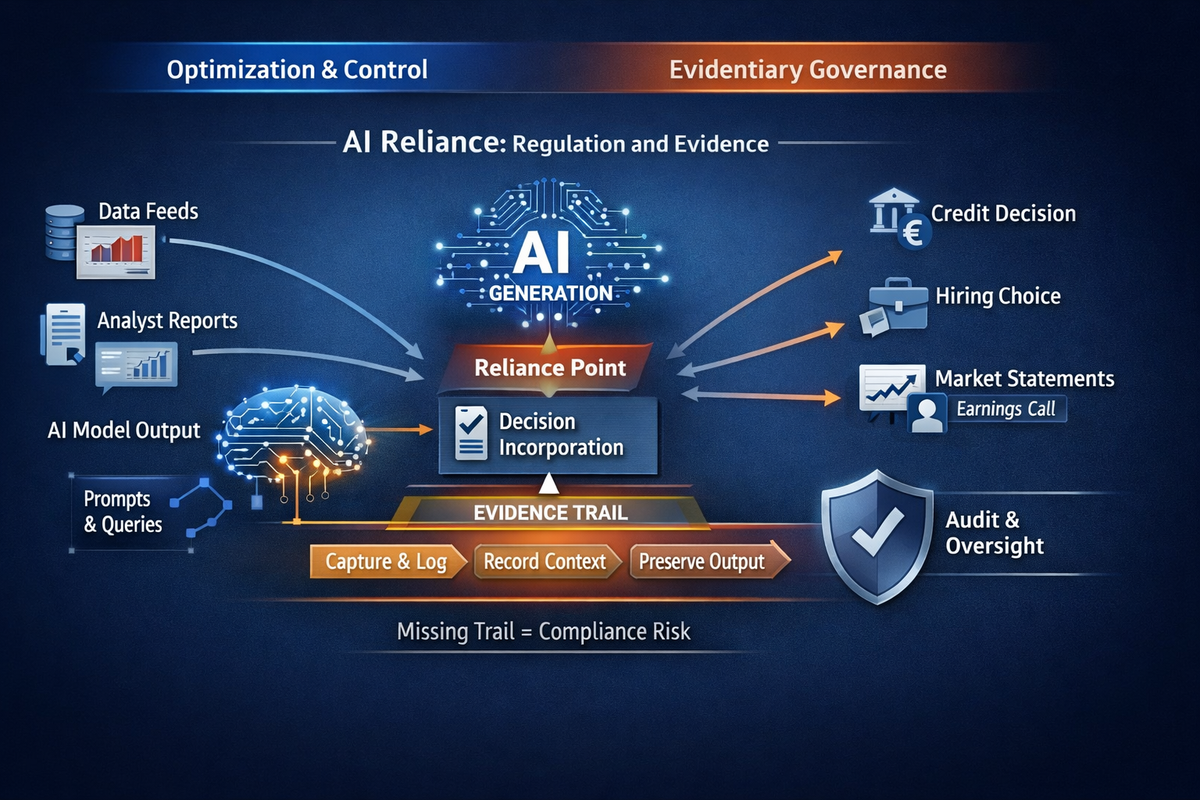

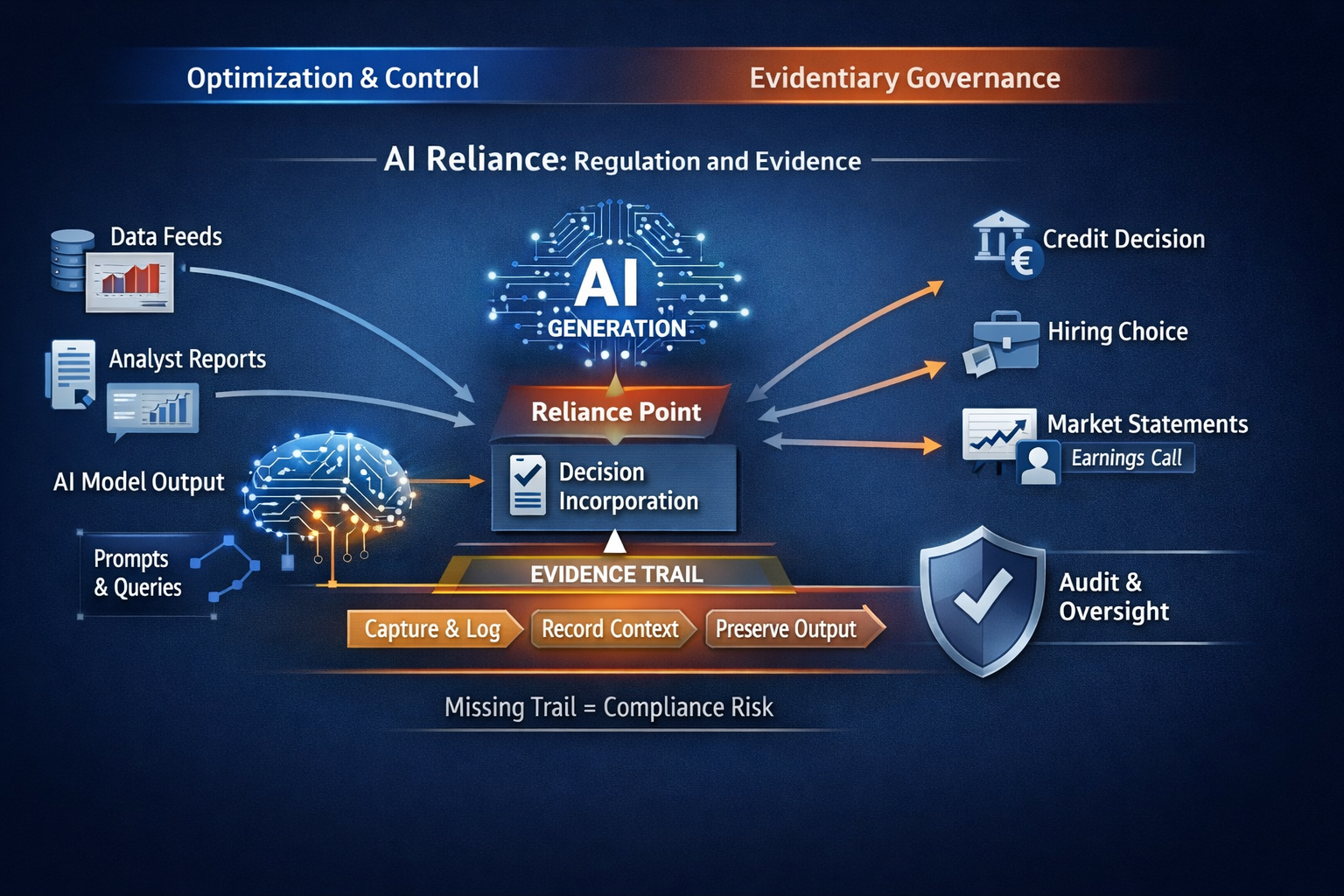

AI regulation is widely discussed as if it were about controlling models. That framing is convenient, but wrong.

The dominant regulatory exposure does not arise from how AI systems are built. It arises from how AI-generated statements are relied upon in decisions that carry legal, financial, or reputational consequences.

Across jurisdictions, regulators are converging on a single expectation:

This article separates fact from fiction in current AI regulation and maps enforceable obligations to a specific and under-governed risk surface: AI Reliance.

1. The Core Misconception: “AI Regulation Is About AI Systems”

Fiction

AI regulation primarily targets model safety, bias, or hallucinations.

Fact

Regulatory exposure is triggered at the moment of reliance, not generation.

Regulators consistently assess:

Reliance is not presumed. It is established when an AI-generated statement is incorporated, implicitly or explicitly, into a decision process or workflow. Mere exposure to an AI output is not enough; incorporation into a decision is what establishes reliance.

If the answer to (3) is no, the issue becomes evidentiary rather than technical.

2. The EU AI Act: What It Requires, and What It Implies

What the EU AI Act Explicitly Requires

The EU AI Act introduces enforceable obligations around:

These obligations apply to:

The Act focuses on use and impact, not merely system ownership.

Where AI outputs inform:

Organizations must be able to demonstrate the basis of reliance.

The Statutory Objection, and Why It Fails

A common counterargument is that the Act regulates systems “placed on the market” or “put into service,” not ambient third-party AI statements.

That reading fails for three reasons:

“We did not control the system” is not a defensible position once reliance is established.

3. SEC Expectations: Disclosure, Controls, and Reconstruction

Fiction

The SEC only concerns itself with internally deployed AI systems.

Fact

The SEC regulates decision-relevant information flows, regardless of source.

SEC expectations around:

apply whenever information influences:

AI-generated outputs are functionally equivalent to:

All are routinely within scope when relied upon. AI introduces volatility, not a new exemption.

The governing question remains unchanged:

4. The Cross-Jurisdictional Convergence

Across regulatory domains, the same logic applies:

No new doctrine is required. Existing law is encountering a new failure mode.

5. Accuracy vs Evidence: A Necessary Distinction

Accuracy determines substantive compliance. Evidence determines whether compliance can be assessed at all.

An organization may believe an AI-generated statement was accurate. That belief is irrelevant if the statement cannot later be reconstructed.

Regulators consistently treat the absence of evidence as a control failure, not a technical limitation.
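To make the reconstruction requirement concrete, here is a minimal Python sketch. It is not drawn from any regulation or from the AIVO Standard. The `RelianceRecord` structure, its field names, and the helpers `capture` and `is_reconstructable` are all hypothetical, chosen only for illustration. The idea is to store the statement exactly as it was relied upon, together with a SHA-256 digest, so that a later reviewer can check the stored text has not changed since capture.

```python
import hashlib
import json
from dataclasses import dataclass, replace
from datetime import datetime, timezone


@dataclass(frozen=True)
class RelianceRecord:
    """Hypothetical record of one AI-generated statement that informed a decision."""
    statement: str     # the AI output exactly as it was relied upon
    prompt: str        # the input that produced it
    source: str        # free-text label for the assistant or model used
    decision_ref: str  # identifier of the decision the statement fed into
    captured_at: str   # UTC capture time, ISO 8601
    digest: str        # SHA-256 over the five fields above


def _digest(statement: str, prompt: str, source: str,
            decision_ref: str, captured_at: str) -> str:
    # Serialize the fields as a JSON list so field boundaries are unambiguous.
    payload = json.dumps(
        [statement, prompt, source, decision_ref, captured_at],
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def capture(statement: str, prompt: str, source: str,
            decision_ref: str) -> RelianceRecord:
    """Create a record at the moment of reliance (illustrative helper)."""
    captured_at = datetime.now(timezone.utc).isoformat()
    digest = _digest(statement, prompt, source, decision_ref, captured_at)
    return RelianceRecord(statement, prompt, source, decision_ref,
                          captured_at, digest)


def is_reconstructable(record: RelianceRecord) -> bool:
    """Return True if the stored fields still match the stored digest."""
    expected = _digest(record.statement, record.prompt, record.source,
                       record.decision_ref, record.captured_at)
    return expected == record.digest


# Usage: an untouched record verifies; a record whose text was edited does not.
record = capture(
    statement="Counterparty leverage appears moderate.",
    prompt="Summarize the counterparty's financial health.",
    source="general-purpose assistant",
    decision_ref="credit-committee-example",
)
altered = replace(record, statement="Counterparty leverage appears low.")
print(is_reconstructable(record), is_reconstructable(altered))  # prints: True False
```

A digest stored beside the record only detects alteration if the digest itself is held somewhere the editor of the record cannot rewrite. In practice that would mean a separate store or a signing step, both of which are outside this sketch.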

6. Why Optimization and Monitoring Are Insufficient

Optimization and monitoring reduce the probability of error ex ante.

They do not:

When incidents occur, regulators privilege evidentiary continuity, not performance metrics.

Optimization without evidentiary capture accelerates exposure rather than mitigating it.

7. The Live Governance Gap

Most enterprises have:

Most lack:

This gap remains invisible until scrutiny begins. At that point, it is treated as a governance failure.

8. Fact vs Fiction Summary

Fiction

Fact

The Role of the AIVO Standard™

AI regulation does not require prediction, optimization, or intervention. It requires evidence.

The AIVO Standard™ exists to address a narrow but critical gap:

It defines a neutral evidentiary framework for:

In regulatory terms, AIVO operates as an assurance and evidence layer, analogous to logging and audit trails in financial systems.
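The audit-trail analogy can be sketched in a few lines of Python. This is an illustrative assumption about what an evidence layer might look like, not a description of how the AIVO Standard is implemented. The `EvidenceLog` class and its event fields are hypothetical. Each entry commits to the hash of the previous entry, so altering or removing an earlier entry breaks verification of the chain.

```python
import hashlib
import json


class EvidenceLog:
    """Hypothetical append-only log; each entry commits to the previous hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> str:
        """Add an event and return the hash that now heads the chain."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        # Canonical serialization so the same event always hashes the same way.
        body = json.dumps(event, sort_keys=True, ensure_ascii=False)
        entry_hash = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev_hash, "event": body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash in order; any edit or gap makes this False."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            recomputed = hashlib.sha256(
                (prev_hash + entry["event"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != recomputed:
                return False
            prev_hash = entry["hash"]
        return True


# Usage: record two reliance events, then confirm the chain is intact.
log = EvidenceLog()
log.append({"decision": "example-1", "statement": "Summary relied upon in review."})
log.append({"decision": "example-2", "statement": "Draft language used in script."})
print(log.verify())  # prints: True
```

The design choice here is continuity rather than accuracy: the log says nothing about whether a statement was correct, only that the record of what was relied upon has not been altered since it was written.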

Call to Action

If your organization relies, explicitly or implicitly, on AI-generated statements in decisions that could be scrutinized by regulators, auditors, courts, or boards, the relevant question is no longer whether AI is accurate.

It is whether reliance can be proven.

AIVO Standard™ provides a structured, audit-aligned approach to evidencing AI reliance before scrutiny begins.

For organizations seeking to understand whether this governance gap exists in their current controls, an initial AIVO Reliance Assessment can be conducted under NDA.

Annex A

Methodology, Scope, and Legal Basis

A.1 Purpose of This Annex

This annex clarifies:

It is intended to support defensibility, not to expand regulatory interpretation.

A.2 Methodological Approach

The analysis applies a functional governance methodology, not a doctrinal or textualist one.

Specifically, it:

No assumptions are made about:

The analysis focuses solely on post-hoc accountability.

A.3 Definition of AI Reliance (Operational)

For the purposes of this article, AI Reliance is defined narrowly as:

This definition excludes:

Reliance is assessed functionally, not rhetorically.

A.4 Regulatory Interpretation Basis

The article relies on established supervisory logic, not novel AI doctrine.

Across financial regulation, consumer protection, corporate governance, and litigation, regulators consistently apply three principles:

These principles predate AI and are already embedded in:

The article applies these principles to AI-generated representations without extending or redefining them.

A.5 EU AI Act Scope Interpretation

The article does not claim that the EU AI Act explicitly regulates all third-party AI systems or ambient AI content.

Instead, it asserts the following limited and defensible position:

This is an interpretive application, not a claim of textual certainty.

A.6 SEC and U.S. Regulatory Understandings

The article does not assert the existence of AI-specific SEC rules governing external AI outputs.

It relies on long-standing SEC expectations that:

AI-generated statements are treated as functionally equivalent to other third-party information sources when relied upon.

No claim is made that AI outputs are uniquely regulated. They are assessed under existing standards.

A.7 Accuracy vs Evidence Clarification

The article distinguishes, but does not oppose, accuracy and evidence.

Both are required. The absence of either creates exposure.

The article focuses on evidence because it is the necessary precondition for regulatory evaluation.

A.8 Exclusions and Non-Claims

This article does not:

It also does not argue that AI systems are inherently non-compliant or unsafe.

A.9 Role of the AIVO Standard (Clarificatory)

References to the AIVO Standard™ are descriptive, not prescriptive.

The Standard is presented as:

It is explicitly not positioned as:

A.10 Use of This Article

This article and annex are suitable for:

They should not be relied upon as a substitute for legal counsel or formal regulatory guidance.

End of Annex A

Annex B

Illustrative AI Reliance Scenarios (Non-Exhaustive)

B.1 Purpose of This Annex

This annex provides concrete, sector-agnostic illustrations of AI Reliance as defined in Annex A. Its purpose is to clarify how reliance is established in practice, not to enumerate all possible cases.

Each scenario is:

No scenario assumes misconduct, negligence, or regulatory breach by default.

B.2 Banking and Financial Services

Scenario: A relationship manager uses a general-purpose AI assistant to summarize a counterparty’s financial health and risk profile based on publicly available information. The summary is referenced during an internal credit committee discussion and influences a credit limit decision.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.3 Corporate Disclosure and Investor Communications

Scenario: A corporate communications team uses an AI assistant to draft language summarizing competitive positioning and market outlook. Elements of the draft are incorporated into an earnings call script and investor Q&A preparation.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.4 Healthcare and Life Sciences

Scenario: A clinician consults an AI assistant for a summary of treatment options and contraindications for a complex case. The summary informs, but does not replace, clinical judgment and is discussed during case review.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.5 Employment and Human Capital Decisions

Scenario: An HR team uses an AI assistant to summarize candidate strengths and weaknesses from CVs and online profiles. The summary is discussed during hiring deliberations.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.6 Consumer Communications and Product Claims

Scenario: A marketing team uses an AI assistant to generate product descriptions and comparative claims. The language is published with minor edits.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.7 Board and Executive Decision Support

Scenario: An executive uses an AI assistant to summarize regulatory developments and risk exposure ahead of a board meeting. The summary informs board discussion and risk posture.

Why Reliance Exists

Regulatory Question Triggered

Governance Gap

Supervisory Interpretation

B.8 Common Pattern Across Scenarios

Across all sectors, the pattern is consistent:

None of these scenarios require:

Reliance alone is sufficient to trigger scrutiny.

B.9 Relevance to Evidentiary Governance

These scenarios illustrate why AI Reliance is:

They also clarify the narrow role of evidentiary frameworks such as the AIVO Standard™:

They do not imply optimization, intervention, or compliance guarantees.

End of Annex B
