Why External AI Reasoning Violates Articles 12 and 61 of the EU AI Act by Default

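This article argues that the compliance gap under the EU AI Act does not come from deploying internal AI, but from external AI reasoning that influences regulated decisions without evidentiary controls, which makes the basis for those decisions impossible to reconstruct.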

Why External AI Reasoning Breaks Articles 12 and 61 by Default

Why probability becomes a governance diagnostic, not a prediction

For many enterprises, the EU AI Act still feels like a future problem. The debate is framed around internal AI systems, model development, and hypothetical harms that will materialize once enforcement begins in earnest.

That framing misses a more immediate exposure.

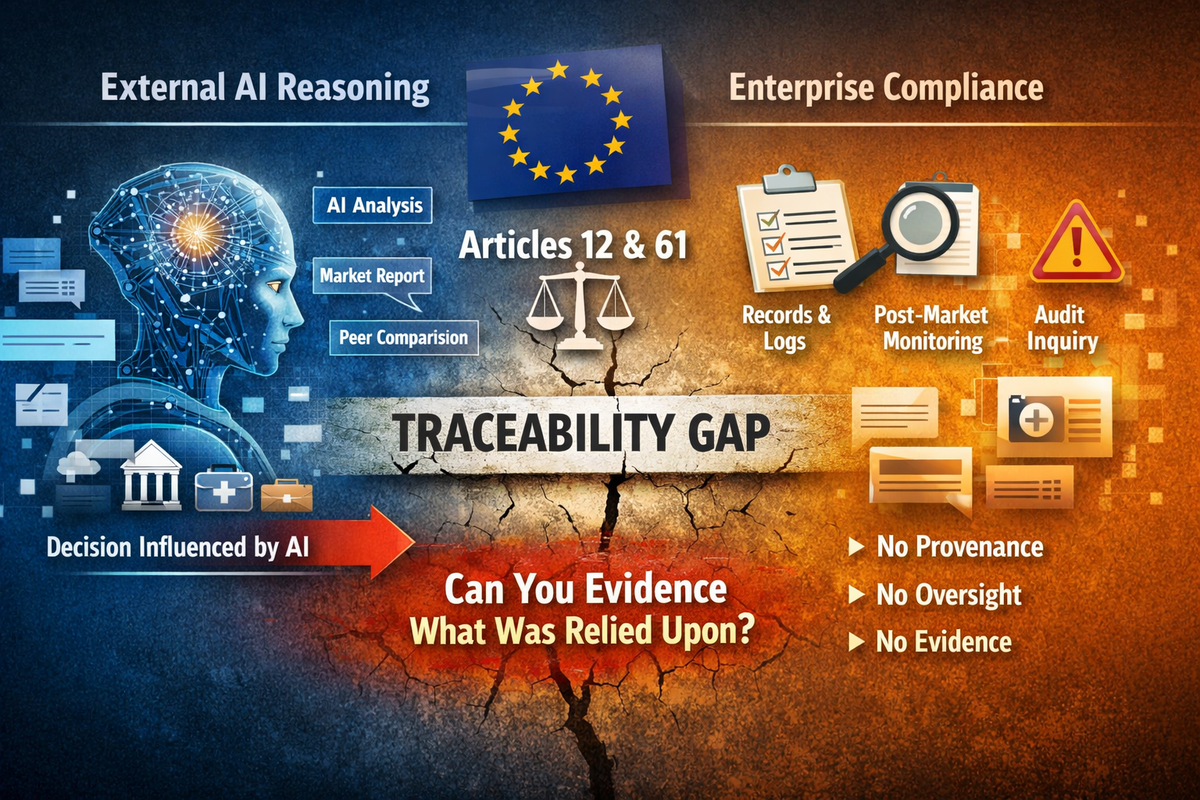

A compliance gap is already emerging that does not depend on whether an organization builds, deploys, or even authorizes AI systems at all. It arises when external AI reasoning about the organization enters regulated decision pathways without an evidentiary control.

When that happens, compliance does not fail because the AI is inaccurate. It fails because the organization cannot reconstruct what was relied upon.

This article introduces a probability-based diagnostic framework designed to surface that exposure early, before Articles 12 and 61 are tested in enforcement, supervision, or litigation.

The real risk is evidentiary, not artificial intelligence

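Articles 12 and 61 of the EU AI Act are often discussed as technical obligations tied to high-risk AI systems. Read carefully, they encode something more basic: the ability to demonstrate traceability and post-market monitoring wherever AI influences consequential decisions.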

Neither obligation is limited to AI systems an organization owns.

Both are engaged the moment AI outputs influence decisions in Annex III domains, including credit, healthcare, access to services, employment, and financial stability.

Where enterprises are now exposed is not in deploying AI, but in being reasoned about by it, externally, at scale, in ways that plausibly shape consequential decisions.

Why accuracy is the wrong lens

A persistent misconception is that compliance risk arises only when AI outputs are wrong.

In practice, the most problematic cases are often directionally reasonable outputs that blend facts with inference in ways that cannot later be disentangled. These outputs invite reliance precisely because they sound plausible.

From an evidentiary perspective, confidence without provenance is more dangerous than obvious error. Error can be corrected. Unlogged reasoning cannot be reconstructed.

The diagnostic framework described here is deliberately agnostic to factual correctness. It measures synthesis under reliance, because that is where Articles 12 and 61 fail.

The diagnostic framework, in brief

The framework estimates the relative likelihood that an organization will face at least one situation in which it is asked to explain or evidence external AI reasoning that influenced a decision, typically across six- and twelve-month horizons.
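One way to read "at least one situation" over a fixed horizon is as a constant-rate event process. The article does not say how its horizons are modeled, so the sketch below is an assumption: it treats incidents as a Poisson process with a hypothetical monthly rate, and the function name `prob_at_least_one` and the rate of 0.05 are illustrative only.

```python
import math


def prob_at_least_one(monthly_rate: float, months: int) -> float:
    """Probability of at least one event within `months`.

    Assumes a constant-rate Poisson process (an assumption of this sketch,
    not something the article specifies).
    """
    if monthly_rate < 0 or months < 0:
        raise ValueError("monthly_rate and months must be non-negative")
    return 1.0 - math.exp(-monthly_rate * months)


# Hypothetical monthly rate, evaluated at the two horizons the article mentions.
for horizon in (6, 12):
    print(horizon, round(prob_at_least_one(0.05, horizon), 3))
```

Under this assumption the twelve-month figure is always higher than the six-month figure, but less than double it, because the probability of "at least one" saturates toward 1.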

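It evaluates five independent drivers: exposure surface, narrative ambiguity, synthesis elasticity, decision adjacency, and control asymmetry.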

The first four drivers establish a base hazard. The fifth determines whether that hazard is governable.

How the probability diagnostic is constructed

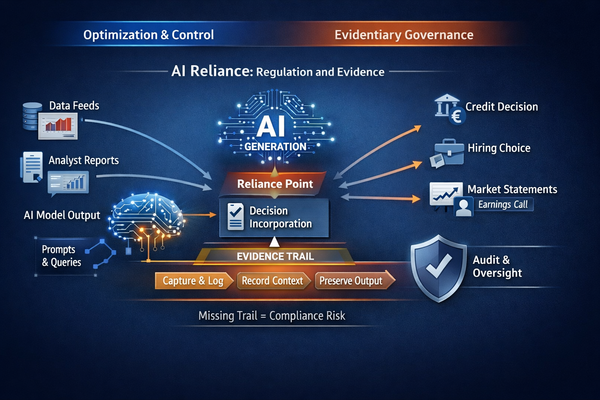

The five drivers are assessed independently and then combined into a single Relative Incident Likelihood score. Exposure surface, narrative ambiguity, synthesis elasticity, and decision adjacency establish a base hazard, while control asymmetry operates multiplicatively as an exposure amplifier rather than an additive factor.

This reflects a regulatory reality. Modest exposure with evidentiary controls creates manageable compliance work. Modest exposure without controls creates obligations that cannot be met.
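The base-hazard-times-amplifier structure can be sketched in a few lines. The article gives no weights, scales, or functional form, so everything numeric here is an assumption: drivers are scored on a 0 to 1 scale, the four base drivers are weighted equally, and the amplifier grows linearly from 1 up to a hypothetical `max_amplifier`. The names `DriverScores` and `relative_incident_likelihood` are illustrative.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DriverScores:
    """Hypothetical 0-1 scores for the five drivers named in the article."""
    exposure_surface: float
    narrative_ambiguity: float
    synthesis_elasticity: float
    decision_adjacency: float
    control_asymmetry: float  # assumed: 0.0 = full capture and retention, 1.0 = none


def relative_incident_likelihood(scores: DriverScores, max_amplifier: float = 3.0) -> float:
    """Combine four base drivers additively, then amplify by control asymmetry."""
    for name, value in asdict(scores).items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    if max_amplifier < 1.0:
        raise ValueError("max_amplifier must be at least 1.0")

    # Base hazard: equal-weight mean of the first four drivers (assumed weighting).
    base_hazard = (
        scores.exposure_surface
        + scores.narrative_ambiguity
        + scores.synthesis_elasticity
        + scores.decision_adjacency
    ) / 4.0

    # Control asymmetry multiplies the hazard instead of adding to it.
    amplifier = 1.0 + (max_amplifier - 1.0) * scores.control_asymmetry
    return base_hazard * amplifier


with_controls = DriverScores(0.5, 0.5, 0.5, 0.5, control_asymmetry=0.0)
without_controls = DriverScores(0.5, 0.5, 0.5, 0.5, control_asymmetry=1.0)
print(relative_incident_likelihood(with_controls))
print(relative_incident_likelihood(without_controls))
```

With identical base drivers, the only thing separating the two organizations is the amplifier, which is the point of treating control asymmetry multiplicatively: the same modest exposure yields a very different score once evidentiary controls are absent.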

Scores are peer-normalized within relevant universes and translated into scenario-calibrated probabilities to support governance decision-making, not actuarial prediction.
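Peer normalization can take several forms. A minimal sketch, assuming it means a percentile rank within a peer universe, is shown below; the function name `percentile_rank` and the sample scores are hypothetical, and the subsequent translation into scenario-calibrated probabilities is not specified in the article, so it is not modeled here.

```python
from typing import Sequence


def percentile_rank(score: float, peer_scores: Sequence[float]) -> float:
    """Fraction of the peer universe scoring below `score`, counting ties as half.

    Returns a value in [0, 1]; 0.5 means the score sits at the peer median.
    """
    if not peer_scores:
        raise ValueError("peer_scores must not be empty")
    below = sum(1 for p in peer_scores if p < score)
    equal = sum(1 for p in peer_scores if p == score)
    return (below + 0.5 * equal) / len(peer_scores)


# Hypothetical raw scores for a peer universe that includes the organization itself.
peers = [0.2, 0.4, 0.6, 0.8]
print(percentile_rank(0.6, peers))
```

A rank-based normalization keeps the result relative by construction: it says where an organization sits among its peers, not how large its exposure is in absolute terms.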

What “scenario-calibrated” means in practice

Probabilities are calibrated against observed patterns in regulated environments where evidentiary failure becomes visible, including procurement escalations, compliance inquiries, dispute resolution, and supervisory follow-up triggered by AI-influenced narratives.

Given the prevalence of private reliance and the absence of systematic logging, these figures should be read as conservative, relative indicators rather than exhaustive counts of latent incidents. Their value lies in signaling governance exposure, not in claiming statistical precision.

A composite example: how Article 12 fails without anyone noticing

Consider a pharmaceutical company whose public sustainability and safety disclosures are referenced dozens of times per month in analyst-oriented AI queries. When those prompts include comparative language such as “versus peers” or “relative safety profile,” observed synthesis rates increase materially, with models blending reported data, inferred context, and unstated assumptions into confident narratives.

If even one such output informs an investment weighting, formulary discussion, or counterparty assessment, Articles 12 and 61 are engaged.

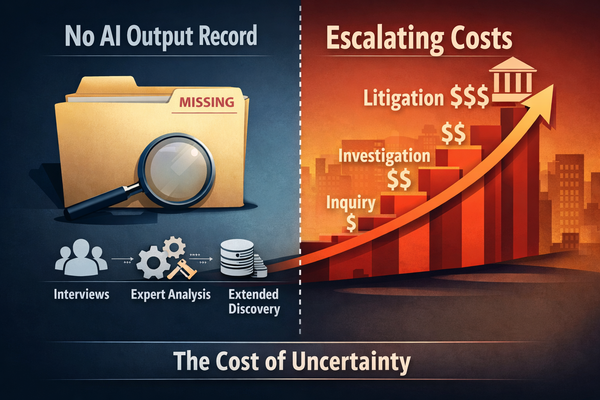

Yet without capture or retention of the external AI output, the organization cannot evidence what was stated, how it was framed, or what reasoning characteristics were present. No factual error is required for compliance to fail. Reliance alone is sufficient.
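What "capture and retention" might look like at its simplest is a timestamped record of the output, the prompt, and the decision context, plus a content hash so later tampering or drift is detectable. The sketch below is an assumption about one reasonable shape for such a record, not a format the article or the EU AI Act prescribes; the class name, field names, and sample values are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class ExternalAIOutputRecord:
    """Hypothetical evidence record for one external AI output that was relied upon."""
    captured_at: str       # UTC timestamp, ISO 8601
    source_system: str     # which external assistant or model produced the output
    prompt: str            # what was asked
    output_text: str       # what was stated, verbatim
    decision_context: str  # which decision the output informed
    output_sha256: str     # hash of output_text, for later integrity checks


def capture(source_system: str, prompt: str, output_text: str,
            decision_context: str) -> ExternalAIOutputRecord:
    """Build a record at the moment of reliance."""
    digest = hashlib.sha256(output_text.encode("utf-8")).hexdigest()
    return ExternalAIOutputRecord(
        captured_at=datetime.now(timezone.utc).isoformat(),
        source_system=source_system,
        prompt=prompt,
        output_text=output_text,
        decision_context=decision_context,
        output_sha256=digest,
    )


def verify(record: ExternalAIOutputRecord) -> bool:
    """Check that the stored text still matches the hash taken at capture time."""
    digest = hashlib.sha256(record.output_text.encode("utf-8")).hexdigest()
    return digest == record.output_sha256


record = capture(
    source_system="example-assistant",
    prompt="Compare the safety profile of Company X versus peers.",
    output_text="Company X reports fewer adverse events than peers.",
    decision_context="counterparty assessment",
)
print(json.dumps(asdict(record), indent=2))
print(verify(record))
```

The record stores the output verbatim instead of a summary, because the question being answered later is what was stated and how it was framed, not whether it was correct.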

Why control asymmetry is a multiplier, not an additive factor

Control asymmetry is not simply another risk input. It is the point at which exposure becomes categorically different.

Modest exposure with logging and retention creates bounded compliance obligations. Modest exposure without logging creates obligations that are structurally unmeetable. The nonlinearity is regulatory, not statistical.

The standard is binary. An organization can either reconstruct what was relied upon, or it cannot. Where reconstruction is impossible, Articles 12 and 61 fail simultaneously, regardless of intent or accuracy.

Diagnostic value for Articles 12 and 61

Used properly, this framework does not predict incidents. It diagnoses evidentiary readiness.

A high relative likelihood score signals that:

In that state, Articles 12 and 61 are not future risks. They are already unmeetable, even if no incident has yet surfaced publicly.

This matters because many reliance events occur privately: in internal analysis, advisory work, or preliminary decision-making. The absence of visible harm does not imply compliance.

The strategic implication

The EU AI Act does not require enterprises to prevent external AI reasoning. That would be neither realistic nor implied.

It does require that where AI influences consequential decisions, organizations can demonstrate traceability, oversight, and post-market monitoring.

Probability-based diagnostics provide an early-warning signal where traditional AI governance tools fail. They surface exposure before Articles 12 and 61 are tested in enforcement, litigation, or supervisory review.

External AI reasoning is already part of the decision infrastructure. The only open question is whether it remains unevidenced.

For enterprises operating in Annex III domains, that is no longer a theoretical issue. It is a present-day governance gap, and one that probability can make visible before it becomes enforceable.

Can you evidence what external AI reasoning was relied upon?

If an AI-generated statement influenced a regulated decision tomorrow, could your organization reconstruct what was said, in what context, and with what reasoning characteristics?

We conduct a confidential External AI Reasoning Exposure Briefing for enterprises operating in Annex III domains. The briefing assesses:

Request a confidential exposure briefing: [email protected]
