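Is Your Organizational Culture Holding Back Your AI Implementation?

The article argues that the main bottleneck in AI adoption is organizational culture rather than technology. It highlights problems such as risk aversion and perfectionism, and proposes treating AI as an execution system that values speed, learning, and empowered teams.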

Is Your Organizational Culture Holding Your AI Execution Hostage?

The problems slowing AI execution and the solutions that unlock scale

TL;DR

The real bottleneck in AI adoption is no longer technology, but governance and culture.

The problem: Most organizations are failing to execute on AI not because of technical limitations, but because of a stack of reinforcing constraints: risk-averse culture, perfectionism, human fear and cynicism, pilot purgatory, over-engineered solutions, data perfection myths, shallow AI literacy, centralized discovery, slow governance, and rigid vendor architectures. Together, these forces stall learning, trap investment in non-production work, and compound the cost of inaction. In 2026, hesitation itself has become the dominant risk.

The solution: Shift from AI as a controlled experiment to AI as an execution system. This requires replacing permission with parameters, pilots with pathways, users with problem architects, and rigid stacks with modular, multi-vendor architectures. Culture must reward speed and learning, governance must scale with risk rather than hierarchy, data strategy must prioritize context over cleanliness, and teams closest to the work must be empowered to discover and deploy value. When execution velocity becomes the operating principle, safety, scale, and adaptability follow.

In 2026, the luxury of the wait-and-see approach has officially expired. We have moved from the parlor tricks of 2023 to the total systemic disruption of Agentic AI in a timeframe that has decapitated traditional five-year strategic cycles. This isn’t the slow-burn adoption of the Cloud or the gradual shift to Mobile. This is a compressed evolution. The fuse was lit by Google’s “Attention is All You Need” paper, but the resulting explosion has been faster than any corporate structure was designed to handle.

In less than 36 months, we have moved through three distinct eras of machine intelligence:

The Content Era: AI acted as a more efficient typewriter. We used early LLMs to summarize emails and write marketing copy. It was reactive, prompt-dependent, and external to core systems.

The Reasoning Era: Models shifted focus to long-context reasoning. We stopped asking for summaries and began uploading 1,000-page supply chain contracts to identify liabilities.

The Agentic Era: We no longer need to prompt a chatbot because we can now delegate to an agent. These systems do not just talk; they act. They navigate your CRM, cross-reference messy ERP data, and execute multi-step workflows autonomously.

If you are still perfecting the use case or waiting for a pristine data warehouse, you are not being diligent. You are managing your own obsolescence. The old cadence of pilot, perfect, approve, and scale has collapsed. While you were polishing a slide deck, agentic systems began planning, deciding, and acting. Every week of delay compounds your cost of inaction. Opportunity drains away, data debt deepens, and competitors quietly harvest a cognitive dividend you can no longer outspend. According to Cognizant, 85% of executives report serious concerns that their current technology estate cannot support AI, indicating that most organizations are still constrained by legacy platforms, architectures, and operating models.

The uncomfortable truth is that the biggest risk in 2026 is no longer unsafe AI. It is Safe Stagnation. Speed is now a form of risk management. The landscape is not just shifting; it is accelerating. This report synthesizes insights from deep-bench conversations with industry peers to outline key bottlenecks and a pragmatic execution framework designed to scale AI before organizations fall behind.

PROBLEM 1: The Fourth Pillar: Why People, Process, and Technology Are Not Enough

We have always been told that successful transformation relies on a stable tripod of people, process, and technology. For decades, this has been the gold standard for change management. But even with those three pillars aligned, change is inherently difficult. It is a disruption to the status quo, and most organizations are designed to resist it like an immune system attacking a foreign body. In the agentic era, that friction has become terminal.

This brings us to the hidden pillar that dictates whether the other three succeed or fail: Culture. You can hire the best talent, map efficient processes, and license advanced models, but those pillars will collapse under the weight of legacy thinking and risk-averse reflexes. According to research by BCG and McKinsey, roughly 70% of digital transformations fail, with both firms consistently finding that people-related factors such as organizational design, operating model, processes, and culture are the primary determinants of success or failure rather than technology itself.

For leadership, this is the most challenging frontier. It requires more than symbolic sponsorship. It demands a clear, irreversible decision to change how decisions are made and how action is rewarded. If experimentation must navigate fear, ambiguity, and informal veto power, velocity will die. The uncomfortable reality is that your technology is ready, your processes are mappable, and your people are waiting. Your culture is the bottleneck holding the entire enterprise hostage.

PROBLEM 2: The Perfection Trap is an Execution Gap

In 2026, revolutions are not slowed by a lack of ideas. They are suffocated by hesitation dressed up as diligence. This creates a Perfection Trap, where the desire for certainty overrides the need for movement. Many organizations are currently trapped in a Waterfall Mindset. This legacy approach treats AI like a traditional IT roll-out, leading to a vicious cycle of excessive deliberation where perfecting the plan becomes a defense mechanism against the discomfort of the unknown.

The result is a state of strategy-without-execution that inevitably leads to Pilot Purgatory. An astounding ~95% of AI pilots fail to make it into production, according to MIT-affiliated research. This is the graveyard of transformation: a landscape littered with successful demos that never reach production because the organization lacks the behavioral muscles to scale them. The challenge is that GenAI has introduced an inverted risk profile. Taking it slow no longer reduces risk; it increases it. If you spend twelve months perfecting a use case within a waterfall framework, that concept will be obsolete before you even launch.

Yet, many leaders still demand a guaranteed ROI before they have even opened the box. This fear of learning in public ensures that projects remain small, safe, and ultimately irrelevant. When an organization’s default response is caution and delay, investments in people, process, and technology stop compounding. In this era, the only way to manage risk is to move at the speed of the technology itself.

MANDATE 1 & 2: Leadership as the Execution Catalyst

Culture cannot be crowd-sourced; it must be set from the top. In the agentic era, leadership’s role is not to oversee AI but to deliberately shape the behaviors the system rewards. It starts with an honest maturity assessment: Are you AI-Ready or just AI-Curious? AI-readiness is not a line item in a budget. It is the organizational capacity to execute. Tone is not what you say. It is what moves faster, what gets funded, and what gets protected from friction. When AI initiatives stall while legacy workflows glide through untouched, the organization has already learned what truly matters.

Setting momentum requires more than approving a budget. It means allocating dedicated execution capacity, clarifying decision rights, and aggressively removing bottlenecks disguised as standard procedure. Every unresolved handoff, ambiguous owner, or redundant review trains the organization to slow down. Culture is formed in these micro-decisions, not in town halls.

Critically, culture change is not a side effect of new tools. It requires its own strategy. Announcing a framework without changing incentives, escalation paths, and cross-functional expectations only creates isolated pockets of progress surrounded by inertia. When departments retain informal veto power and risk avoidance masquerades as professionalism, transformation remains local, fragile, and easily reversed.

Ask yourself: what behaviors are actually reinforced by how AI work gets done in your organization today? Are you prioritizing speed over polish, ownership over deferral, and delivery over debate? In 2026, Execution IS Strategy. By failing to act, you are acting to fail. If a POC solves 70% of a departmental plague, you must have the pragmatic courage to deploy it. Iterating on a live tool provides more data and cultural buy-in than debating a 100% solution that never launches. If you cannot point to a deployment that reached production in the last 30 days, your culture has already answered.

If you want the cognitive dividend, you must pay for it in behavioral currency. This requires shifting from a finalization obsession to a cycle of well-designed experiments with SMART goals. Leaders must clear paths and make it unmistakable that movement is competence. Stop sponsoring AI potential and start rewarding Execution Velocity. The first move is not another framework. It is replacing permission with pathways.

PROBLEM 3: The Human Friction: Fear, Cynicism, and the Identity Crisis

While the Perfection Trap stalls the organization’s mechanics, a deeper friction stalls its heart: the workforce’s emotional reaction to the machine. In 2026, we have moved past the initial novelty of GenAI into a period of profound psychological tension. This tension manifests in two destructive ways: the paralyzing fear of being replaced and a deep-seated indifference born from the belief that AI is simply not effective.

For some, the cognitive dividend feels less like a benefit and more like a threat to professional identity. They see years of expertise being compressed into a prompt. Consequently, they move to protect their territory through subtle sabotage or a refusal to engage. For others, the defense mechanism is cynicism. By focusing on hallucinations and edge cases, they dismiss the technology as a toy. They remain indifferent to the tectonic shifts happening in their industry.

Both groups are reacting to a perceived loss of agency. Without addressing this human friction, even the most streamlined pathway will be met with a silent, invisible wall of resistance.

MANDATE 3: Human Agency and the Talent Strategy

To solve the human friction of fear and indifference, leadership must execute a strategic pivot. You cannot ignore replacement anxiety. You must overwrite it with a new definition of professional value. The goal is to reclaim employee agency by shifting the narrative from “AI as a peer” to “AI as a Power Tool.”

The first move is to explicitly sell the “Cognitive Load Dividend.” Leadership must frame AI not as a cost-cutting measure but as a liberation strategy. When drudge work (repetitive data entry, basic drafting, and administrative friction) is automated, employees are freed to focus on high-value innovation and strategic priorities.

However, liberation without a plan creates anxiety. To create a true sense of security, you must have a Concrete Upskilling Roadmap. For roles where tasks are being fully automated, leadership must provide clear pathways to transition talent into higher-order areas like AI Curation, complex problem-solving, and relationship management. If you want buy-in, you must prove that the time saved by AI is being reinvested in the employee’s longevity, not just subtracted from the payroll.

To win over skeptics, you must move beyond promises and into Evidence-Based Storytelling. Skepticism thrives in a vacuum of information. Turn skeptics into believers by showcasing peer-led results from successful internal projects. When a cynical veteran sees a colleague use AI to slash a 40-hour process down to four, the technology is no longer an abstract threat. It is a proven advantage. Use these internal case studies to prove that AI is not just doing work; it is solving the specific frustrations that make daily lives difficult.

Next, leadership must deliver the “job security talk” with radical transparency. In 2026, job security is no longer tied to performing a specific task. It is tied to being the person who knows how to direct the AI to perform that task. The moat around a career is now built on AI Literacy and adoption. By being honest about the fact that roles are changing, you remove the silent sabotage that happens when employees try to protect obsolete workflows.

Finally, you must redefine your human capital strategy. Technical skills are now shifting monthly, rendering years of experience a diminishing metric. In this era, the only future-proof skills are adaptability, curiosity, creative problem-solving, and rapid learning. This requires a fundamental shift in how you hire and reward talent. Stop hiring based on years of experience alone. In the knowledge economy, AI has put a curious junior employee with high AI-fluency on equal footing with a veteran clinging to legacy methods. While high-stakes specialized fields like medicine still require years of clinical seasoning, for most of the enterprise, a Fast Learner is now more valuable than a Long-time Expert.

If you want your people to move with you, you must make it unmistakable that their value has not decreased. It has shifted. Leadership’s job is to ensure that in the partnership between human and machine, the human remains the architect.

PROBLEM 4: The Broadway Debut Syndrome

Most transformation efforts and POCs fail because they are treated like high-stakes Broadway debuts. There is an unspoken organizational requirement that every AI pilot must be a hit on opening night. This creates a destructive binary. If a project does not receive a standing ovation in the form of immediate ROI, it is either killed prematurely or it begins to drag on for months without a quick pivot in solution, strategy, or execution. Absent a clear strategic next step, these initiatives become zombie projects. They are technically dead yet still consuming the budget as decisions are endlessly deferred. This pattern is reflected in Gartner’s projection that over 40% of agentic AI projects will be canceled, leaving many initiatives trapped in a zombie state.

Compounding this is the trap of Over-Engineering. In an effort to ensure that opening night hits, organizations apply excessive rigor to the wrong problems. They build complex architectures, solutions, and strategies for simple use cases. This mismatch of effort stalls velocity and inflates costs and time-to-market before value is even proven. In the agentic era, the goal is to apply the right level of rigor to the right problem.

When you over-engineer a POC or involve too many layers of leadership governance in low-stakes design decisions, you are not building for stability. You are creating administrative friction that hides the possibility of a quick, informative failure. True execution requires keeping the strategy and the solution design simple. Move fast, test the core hypothesis, and save enterprise-grade rigor for the solutions that have proven they can stick.

MANDATE 4: From Stalling to Signaling

To break the cycle of stagnation, the organization must redefine the purpose of a Proof of Concept (POC). The goal is not to build a finished tool. It is to extract a signal of value through a fail-fast experiment designed to test a hypothesis in the shortest window possible.

Under the Weeks-not-Months Rule, you must be willing to kill or pivot experiments that are not showing promise within a tight timeframe. While certain edge cases (such as projects dependent on external vendor data or government agencies) may require longer timelines, the active work time should remain lean. The objective is to extract the lesson and iterate immediately rather than protecting an idea that is not sticking.

Furthermore, leadership must embrace the Value of Failure. It is acceptable for a POC to fail technically. A failed experiment that proves a specific process cannot yet be automated is significantly more valuable than a successful political demo that uses smoke and mirrors to hide manual work. Identifying limitations early provides the honest data needed to refine a real strategy. Leadership must curate a culture where it is acceptable to fail, provided the failure is fast. When the penalty for an unsuccessful experiment is removed, teams stop hiding flaws and start sharing insights.

Finally, prioritize Signaling over Finalizing. POCs must remain light, simple, and iterative. Because they are signals of value rather than final products, they allow for rapid learning. This shift in mindset ensures that resources are constantly reallocated to the most viable solutions rather than being locked into over-engineered projects that lack a clear path to scaled production.

PROBLEM 5: Data Utility: Proving Value with What You Have

The most common excuse for inaction is the belief that data must be perfectly structured and centralized before AI can be applied. Many organizations stall for years waiting for a pristine Data Warehouse, treating “dirty data” as an insurmountable wall. This stagnation is often compounded by a gate-driven decision model, where teams spend months waiting for access or excessive security reviews for sensitive datasets. This creates a cycle of zero action where the fear of data messiness or data sensitivity prevents even the most basic hypothesis testing.

MANDATE 5: Context over Cleaning

Instead of waiting for a perfect environment, the strategy is to work with what you have by identifying “clean pockets” of data to prove the initial model. To bypass the sensitivity bottleneck, teams should be empowered to use small amounts of synthetic or de-identified data for the initial POC.

This allows for rapid prototyping without the lag of exhaustive security reviews. Use “low-stakes” environments to identify which data flaws actually matter; this allows you to prioritize cleanup and formal access requests based on proven value rather than theoretical necessity. Don’t let the search for perfect data, or the wait for perfect permission, be an excuse for zero progress.
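As a rough illustration of what "de-identified data for the initial POC" can mean in practice, the sketch below drops direct identifiers and replaces a join key with a keyed hash. The field names (`name`, `email`, `phone`, `customer_id`) and the helper names are hypothetical, chosen only for this example, and the approach is a minimal starting point for low-stakes prototyping, not a compliance-grade de-identification method.

```python
import hashlib
import hmac

# Hypothetical field names, chosen for illustration only.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}   # removed outright
PSEUDONYMIZED_KEYS = {"customer_id"}              # replaced with a keyed hash


def pseudonym(value: str, secret: bytes) -> str:
    """Replace a stable ID with a keyed hash so rows can still be joined."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def deidentify(record: dict, secret: bytes) -> dict:
    """Drop direct identifiers, pseudonymize join keys, keep everything else."""
    cleaned = {}
    for field, value in record.items():
        if field in DIRECT_IDENTIFIERS:
            continue
        if field in PSEUDONYMIZED_KEYS:
            cleaned[field] = pseudonym(str(value), secret)
        else:
            cleaned[field] = value
    return cleaned


if __name__ == "__main__":
    row = {"name": "Pat Doe", "email": "pat@example.com",
           "customer_id": 1042, "invoice_total": 310.75}
    print(deidentify(row, secret=b"poc-only-secret"))
```

Because the same secret always yields the same pseudonym, a team can still link records across a small sample while the formal access request for the full dataset proceeds in parallel.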

PROBLEM 6: The Literacy Gap and The User Trap

The current organizational approach to AI is stalled by a fundamental misunderstanding of literacy. Most teams view AI literacy through the narrow lens of Basic Prompting, or knowing how to ask a chatbot for an answer. This is the User Trap. It creates a workforce that knows how to turn the key but does not understand how the engine works or where the car should go. When employees are viewed merely as users, they wait for IT to deliver finished tools rather than identifying where those tools are actually needed.

This leads to a lack of a Problem-Solving Mindset. Because discovery is centralized within a small AI task force, there is a massive disconnect between technical capability and the actual departmental plagues festering on the front lines. A central team can only guess at the friction in accounting or operations. Without a decentralized hunt for bottlenecks, the organization remains stuck in a vicious cycle of planning and prioritizing. High-level strategies are discussed, but the chronic, daily workflows that drain productivity remain untouched.

MANDATE 6: Moving from Passive Users to Problem Architects

To move the needle, we must shift the workforce from user to innovator. True AI literacy goes far beyond basic prompt engineering. It requires educating employees to look beyond individual tasks and identify systemic inefficiencies. By granting teams the autonomy to create small, personal-use assistive agents for immediate productivity gains (such as policy-to-practice translators, executive brief generators, cross-domain translators, and structured formatters), you incentivize curiosity and reinforce a bias toward execution.

This decentralized approach is powered by KPI-Driven Innovation. Every department head and employee should be tasked with a new performance metric: identify and solve one chronic workflow bottleneck using AI or agents every quarter. These must be tied to tangible business value, such as direct ROI, hours of manual labor reclaimed, reduction in error rates, or increase in net promoter score. Success is measured by the actual removal of operational friction points. These are the deep-seated process gaps that hinder the department rather than mere personal productivity gains that only benefit a few individuals. MIT Sloan Management Review reinforces this dynamic, finding that 72% of surveyed leaders believe corporate teams should set only high-level AI guardrails, while individual teams define the rules locally, underscoring that context and judgment sit closest to the work.

Instead of a central AI task force guessing what is broken in accounting, the accounting team is incentivized to hunt their own departmental bottlenecks. This dramatically accelerates discovery, allowing departments to come to leadership with ready-to-vet ideas rather than vague complaints. When every department participates, the result is a high-volume pipeline of dozens of mini-pilots running simultaneously.

To manage this scale and lower the barrier to entry, the organization can deploy a “Strategic AI Validator”. By leveraging general GenAI chatbots or custom models pre-loaded with feasibility instructions, employees can self-assess their concepts. These AI validators analyze data sources, manual steps, and technical viability. This removes administrative bottlenecks and ensures that only the most high-viability, ready-to-bake experiments move forward for professional solutioning and final approval.

PROBLEM 7: The Safety-Speed Paradox

Most organizations are attempting to manage 2026-era agentic AI using 2010-era governance frameworks. These legacy models emphasize comprehensive oversight through several layered committees, centralized review bodies, and sequential approval processes. While effective for deterministic, traditional software, this waterfall approach is fundamentally misaligned with the probabilistic nature of generative and agentic systems.

The core failure of traditional layered governance is its assumption that the primary risk is unsafe AI. By focusing exclusively on preventing a rogue deployment, these structures expand into selection, implementation, and oversight of even low-stakes initiatives. This creates a bottleneck that does not just slow down innovation. It suffocates the feedback loops required to make AI safe in the first place.

In the agentic era, the greatest risk is no longer technical failure but stagnation through excessive caution. When a governance model is designed to control throughput rather than risk, it introduces a material delay that compounds your Cost of Inaction (COI). Only 6% of AI pilots reach production, while nearly 60% of AI leaders cite governance, compliance, and legacy integration as top barriers to adoption, which illustrates how heavily governance friction weighs on execution. If your governance process takes twelve weeks to approve a three-week experiment, the framework is no longer protecting the organization. It is holding it hostage to obsolescence.

“Committees are often where ideas go to die and creative groups are where they come alive,” as British author Sir Ken Robinson put it. To succeed with AI, we must move away from the graveyard of committee-led bureaucracy and toward the energy of small, empowered creative groups.

MANDATE 7: The Pathway-Based Governance Model

To break the cycle of stagnation, we must replace multi-tiered and overly complex governance structures with policy clarity and a pathway-based model. A pathway-based model ensures that governance is a catalyst, not a cage, by matching the level of scrutiny to the actual risk profile of the initiative. By defining the rules of engagement upfront, we transition from a culture of permission to a culture of parameters.

The Core Principles of Proportional Oversight

Pivot from waterfall to probabilistic thinking: Stop managing non-linear, adaptive AI with linear, rigid phases. Governance must support iterative feedback loops, allowing for real-world testing within safe boundaries.

Govern by risk, not by hierarchy: Approval authority should be dictated by the sensitivity of the data and the autonomy of the agent, not by the seniority of the person asking.

Predefined pathways over case-by-case deliberation: Establish a risk-level checklist to define safety criteria for low, medium, and high-risk zones and eliminate administrative friction. Initiatives move through pathways based on meeting specifications of the safety criteria.

Strategic oversight by default, detailed review by exception: Leadership should set the rules of the road and automate the compliance checks. Human experts should only be summoned to intervene when an experiment attempts to cross a high-stakes threshold.

By shifting the focus from controlling the throughput to securing the environment, we enable governance as velocity. This ensures that safety is a byproduct of our speed, rather than an obstacle to it. Below, we will review the pathway-based model. Note that this is not meant to be prescriptive, but rather an abstract starting point for simple pathway design, a conceptual framework rather than a complete, rigid workflow.

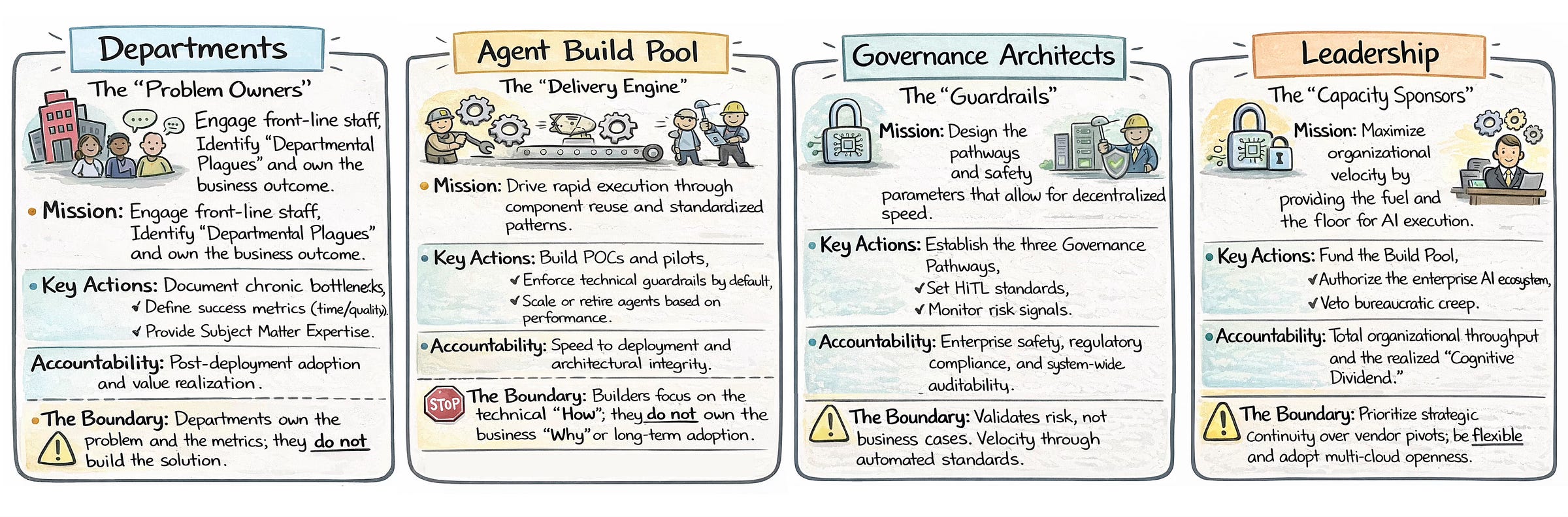

Core Roles: The AI Implementation Team

Before diving into specific pathways, we must review the core roles involved. The success of any AI initiative depends on a clear division of labor among four groups. Together, they ensure that AI moves beyond a technical experiment into a tool that solves real-world organizational bottlenecks.

The Architectures of Execution

Departments (the problem owners): The department acts as the catalyst by identifying chronic departmental plagues and owning the final business outcome. To succeed, they must elect a team of champions, ideally individuals involved in day-to-day operations who deeply understand the data and workflow. These champions do not hand work off. They stay accountable throughout delivery, actively supplying data definitions, sources, context, and decisions so the build team can execute without reverse-engineering the business. More importantly, they own continuous value-generation.

Agent build pool (the delivery engine): This team is responsible for rapid execution, building proofs of concept and pilots through standardized patterns. Their mission is focused on speed-to-deployment and technical integrity. While they own the technical how, they do not own the business why or the long-term adoption of the tool.

Governance architects (the guardrails): Their mission is to design the pathways and safety parameters that allow for decentralized speed. They establish the standards for human-in-the-loop requirements and monitor risk signals to ensure system-wide auditability and regulatory compliance. They validate risk, not business cases.

Leadership (the capacity sponsors): Leadership provides the necessary fuel and floor for AI execution by authorizing the enterprise ecosystem and providing funding. Crucially, they act as a shield, vetoing bureaucratic creep and preventing middle management from pulling fast-tracked projects back into slow, manual review cycles.

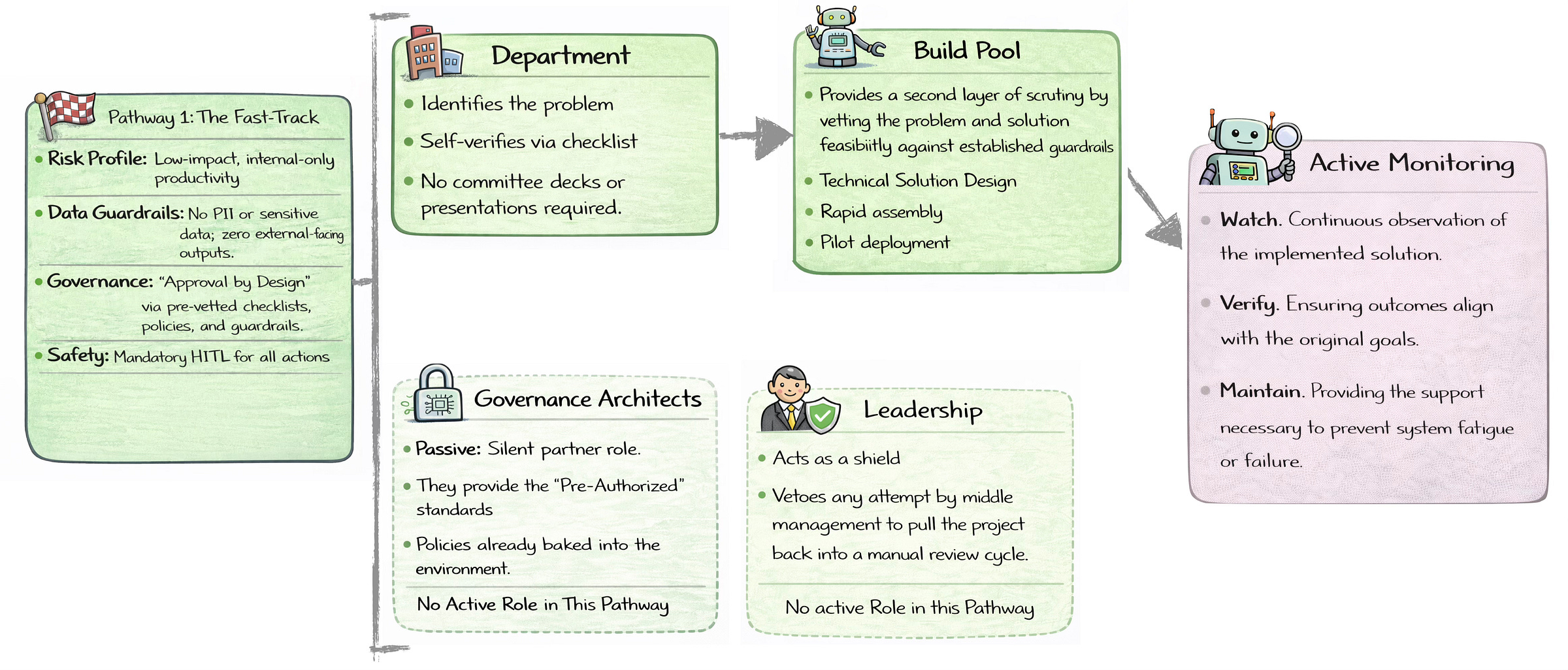

The first pathway is designed for low-impact, internal productivity tools where the risk profile is minimal and no sensitive data or personally identifiable information is involved. In this flow, the department identifies a problem and self-verifies against a preauthorized checklist established by governance architects, bypassing the need for complex committee decks. The build pool then provides a second layer of scrutiny by assessing feasibility against established guardrails before moving into rapid assembly and pilot deployment. If the build pool determines that unexpected risk is involved, the project is escalated to a higher pathway. If it is deemed technically unfeasible, it is logged in a strategic backlog with a status update and deferred for future consideration. Because safety is built in through mandatory human-in-the-loop requirements, governance architects and leadership remain passive, acting as silent partners to prevent middle management from pulling the project back into slow, manual review cycles.

Examples of low-risk automation in a marketing context include a campaign localization agent that adapts central messaging into region-specific drafts, a brand compliance sentinel that flags noncompliant language against preset standards, and a content repurposing orchestrator that converts approved assets into multiple social media formats.

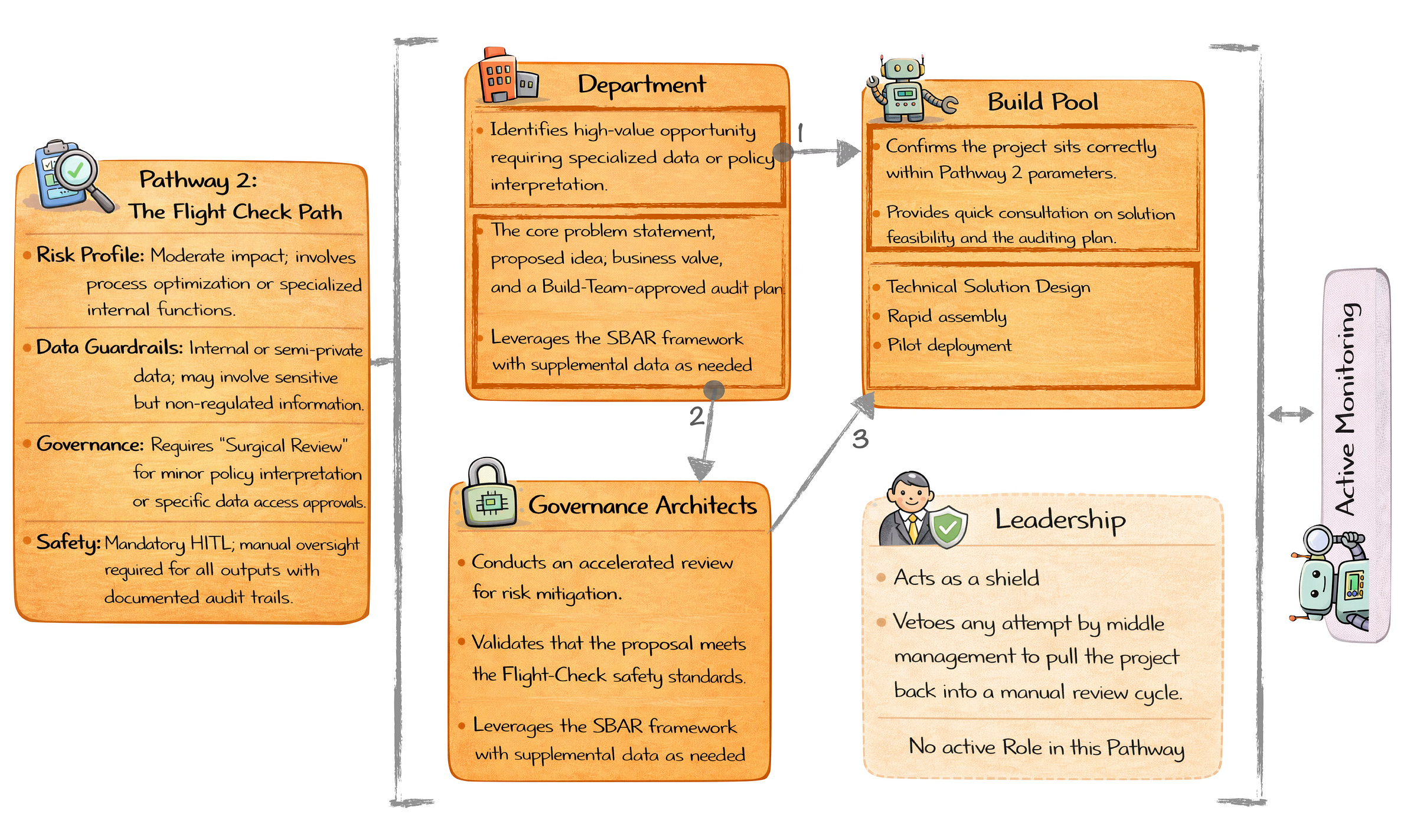

The second pathway is designed for moderate-impact initiatives that involve sensitive data, specialized internal functions, or process optimization. Unlike the self-verification approach in pathway 1, this flow requires a surgical review: a targeted, high-speed assessment that focuses only on specific data access and policy interpretation risks rather than a broad, exhaustive audit.

In this pathway, the department initiates the process by identifying a high-value opportunity and consulting with the build pool for a sanity check against pathway 2 parameters, along with a technical and audit feasibility review. Once the foundation is confirmed, the department presents an SBAR (Situation, Background, Assessment, Recommendation) proposal to the governance architects. After the proposal is validated against defined safety standards, similar to a flight check mandate, the build pool proceeds with rapid assembly and pilot deployment, contingent on formal approval from governance.

One example of an initiative that can be accelerated using this pathway is a regulatory impact interpreter agent in the financial sector that reviews new SEC or Basel guidance and maps the implications directly to internal controls and reporting requirements. Another, in healthcare, is a prior authorization risk-flagging agent that analyzes internal denial patterns to identify high-risk submissions for staff review before they are sent to payers. In both cases, the AI provides recommendations and clarity, while final decision-making remains strictly in human hands with a documented audit trail.

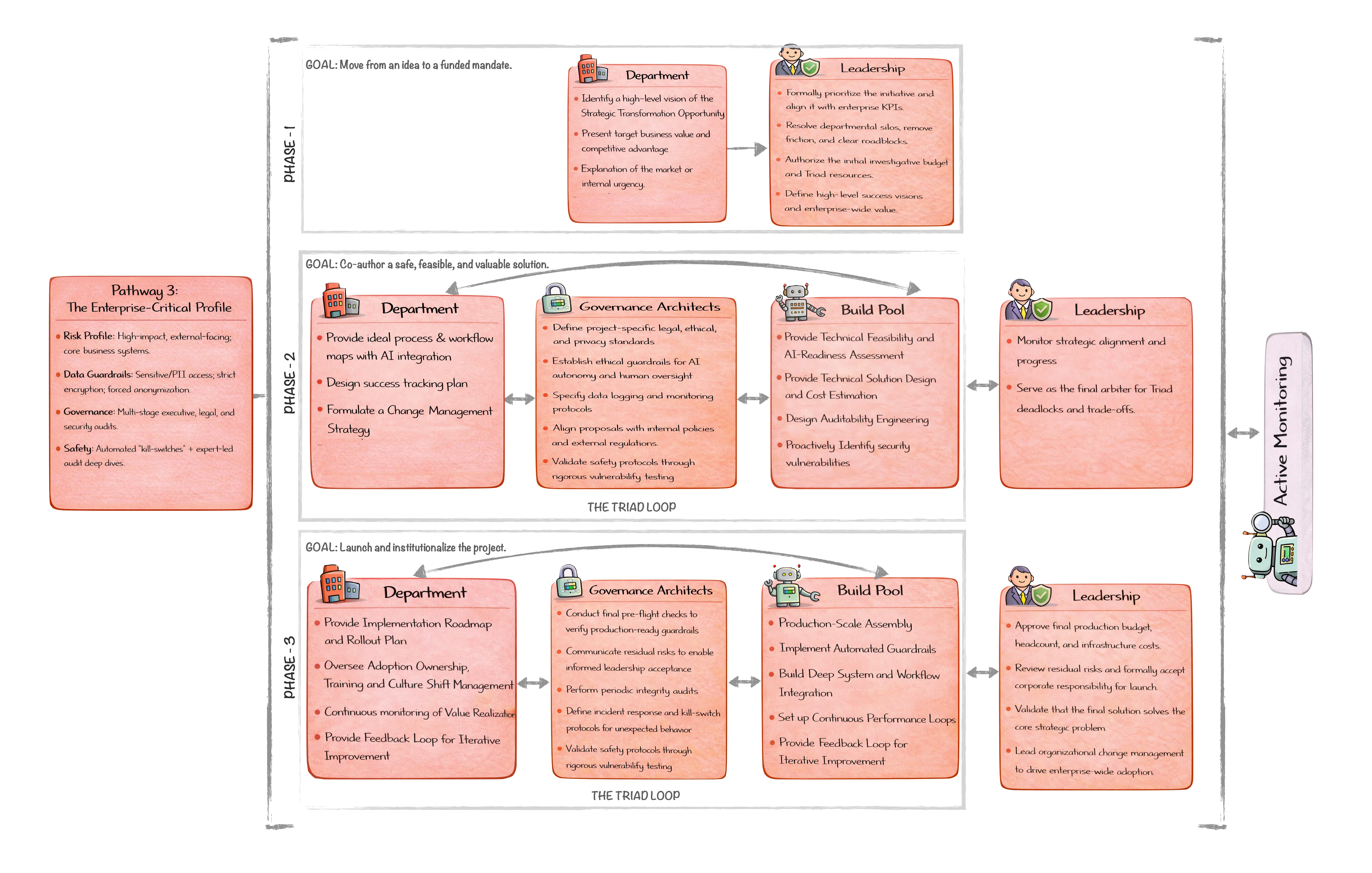

The third pathway is reserved for high-stakes initiatives where the complexity of agentic autonomy or the sensitivity of the data requires a high degree of cross-functional collaboration. Unlike the more independent flows of pathways 1 and 2, this model requires active and continuous participation from leadership and the triad, a dedicated working group consisting of the department, the build pool, and the governance architects.

Phase 1: This phase focuses on moving from an idea to a funded mandate. The department defines a high-level vision for a strategic transformation and presents the target business value. Leadership plays an active role by formally prioritizing the initiative, resolving departmental silos, and authorizing the initial investigative budget.

Phase 2: The goal is to jointly design a solution that is safe, feasible, and valuable. The triad enters an iterative working loop to refine the technical design, ethical guardrails, and success measures in parallel. Leadership remains engaged by monitoring strategic alignment and acting as the final decision-maker when trade-offs or deadlocks arise.

Phase 3: The focus shifts to institutionalizing and launching the project. The triad continues final validation and readiness checks while governance architects communicate residual risks to ensure informed acceptance. Leadership provides final approval by authorizing the production budget and formally accepting corporate responsibility for the launch. This phase includes the technical build, production-scale assembly, and deep system and workflow integration, supported by rigorous testing and continuous performance monitoring.

For example, in manufacturing and industrial operations, an autonomous production line control system can dynamically adjust parameters such as speed, temperature, and materials in real time. In healthcare, a patient-facing symptom triage and routing system can collect patient-reported symptoms and route patients to virtual care, urgent care, or emergency services. Because these systems make decisions with real-world consequences and physical or clinical impact, they require ongoing oversight and iterative refinement rather than a set-once deployment.

In summary, pathway-based governance replaces hesitation with momentum and permission with parameters. Governance does not need to be complex to be effective. In practice, complexity is often the fastest way to reduce velocity. Start with frugal engineering by designing the simplest structure that can safely move work forward, then add rigor only when risk demands it. Do not lead with layers, process, or precautionary complexity. In fast-moving domains, simplicity is not naive; it is strategic.

PROBLEM 8: The Vendor Lock-In Fallacy

Most enterprises are making a quiet but dangerous assumption that today’s AI vendor choices will remain optimal tomorrow. They will not. The fixation on a mythical final stack is the real threat to long term AI strategy. The risk is no longer choosing the wrong partner, but building a rigid architecture that makes change impossible. In the current agentic race, organizations are embedding intelligence directly into workflows as if there were a stable end state to reach. There is not.

Vendor lock-in is no longer just a commercial concern. It is a strategic one, because it carries the risk of falling behind. A survey by Flexera shows that 68% of CIOs worry about lock-in with public cloud providers, highlighting that long-term dependency on a single vendor is a key risk factor in enterprise technology strategy. When models, tools, or cloud platforms are tightly coupled to business logic, every major upgrade becomes a rewrite, every innovation becomes a migration, and every competitive advantage arrives faster than the organization can respond. Many enterprises commit so deeply to a single ecosystem that they become dependent on that vendor’s roadmap, mistaking dependence for partnership. The result is institutional paralysis disguised as stability. Without modularity, organizations are not building a foundation. They are building a cage.

What makes this especially dangerous is the cost of inaction. Every month spent locked into an inflexible stack compounds loss rather than preserving value. While competitors experiment across models, platforms, and agents, rigid organizations accumulate data debt, talent frustration, and missed learning cycles. In generative AI, the cost of inaction is not just missed revenue. It is falling behind an exponential curve where catching up later becomes structurally harder and more expensive. Flexibility is no longer optional. It is the price of staying in the game.

MANDATE 8: Build for adaptability

The mandate is simple and non-negotiable: build for adaptability, not allegiance. Partner deeply, but architect loosely. Your AI stack must be modular by design, with clear separation between workflows, orchestration, and underlying models. If Gemini, Claude, or the next breakthrough model delivers a step change tomorrow, you should be able to plug in a new brain without rebuilding the body. Increasingly, multiple models should be able to coexist, each optimized for different tasks.

There is no final state for your AI stack. Accept it. Vendors will change. Models will change. Cloud strategy may change. This is not a failure of planning. It is the nature of the landscape. Organizations that succeed design for this reality from the start by enforcing abstraction layers, standard interfaces, multi model interoperability, and independent monitoring and audit capabilities that span vendors and agents.
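To make the separation between workflows, orchestration, and models concrete, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: `ModelProvider`, `EchoProvider`, `ModelRouter`, and the `model-a` / `model-b` names are assumptions, not any vendor's API. A real adapter would wrap a vendor SDK call behind the same interface.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class ModelProvider(Protocol):
    """Hypothetical standard interface that every model adapter satisfies."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Stand-in adapter. A real one would call a vendor SDK here."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Orchestration layer: maps task types to whichever model fits best."""

    def __init__(self, default: ModelProvider) -> None:
        self._default = default
        self._routes: Dict[str, ModelProvider] = {}

    def register(self, task: str, provider: ModelProvider) -> None:
        self._routes[task] = provider

    def run(self, task: str, prompt: str) -> str:
        # Fall back to the default model when no task-specific route exists.
        provider = self._routes.get(task, self._default)
        return provider.complete(prompt)


def summarize_contract(router: ModelRouter, text: str) -> str:
    """Workflow code depends only on the router, never on a vendor."""
    return router.run("summarize", f"Summarize: {text}")


router = ModelRouter(default=EchoProvider("model-a"))
before = summarize_contract(router, "lease terms")

# Swap the "brain" for one task; the workflow function is untouched.
router.register("summarize", EchoProvider("model-b"))
after = summarize_contract(router, "lease terms")
```

The design choice worth noticing is that `summarize_contract` never changes when the model does. Swapping or adding a model is a registration in the orchestration layer, not a rewrite of business logic.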

Risk compounds as agents proliferate, and they will. Every major vendor is racing to ship its own agents, which means enterprises will soon operate a heterogeneous mix of first party and third party agents inside critical workflows. Without independent monitoring and auditing, this complexity creates blind spots at scale. This is not a future concern. It is an imminent reality that must be built into AI strategy now. Organizations must be prepared with a centralized agent monitoring and oversight layer. The winners in the agentic era will not be early vendor pickers, but organizations that engineered for multi cloud and multi vendor coexistence with strong control and oversight.
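As a rough illustration of what a vendor-independent oversight layer might look like, the sketch below routes every agent action through one shared monitor that records who acted, on which vendor's platform, and whether the action succeeded. The names `AgentMonitor`, `AuditRecord`, `invoice-bot`, `triage-bot`, `vendor-a`, and `vendor-b` are all hypothetical. A production system would persist records to durable storage and handle errors more carefully than this.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass
class AuditRecord:
    agent: str
    vendor: str
    action: str
    ok: bool
    at: str  # ISO 8601 timestamp in UTC


@dataclass
class AgentMonitor:
    """Hypothetical central log that sits outside any single vendor."""

    records: List[AuditRecord] = field(default_factory=list)

    def observe(
        self, agent: str, vendor: str, action: str, fn: Callable[[], str]
    ) -> Optional[str]:
        # Run the agent action and record the outcome either way.
        # Failures are logged and returned as None in this sketch.
        try:
            result: Optional[str] = fn()
            ok = True
        except Exception:
            result, ok = None, False
        stamp = datetime.now(timezone.utc).isoformat()
        self.records.append(AuditRecord(agent, vendor, action, ok, stamp))
        return result

    def failure_rate(self, vendor: str) -> float:
        rows = [r for r in self.records if r.vendor == vendor]
        if not rows:
            return 0.0
        return sum(not r.ok for r in rows) / len(rows)


def failing_action() -> str:
    raise RuntimeError("simulated timeout")


monitor = AgentMonitor()
monitor.observe("invoice-bot", "vendor-a", "classify", lambda: "approved")
monitor.observe("triage-bot", "vendor-b", "route", failing_action)
```

Because first-party and third-party agents report into the same log, questions such as "which vendor's agents fail most often?" can be answered without relying on any one vendor's own dashboard.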

In closing, the era of the perfect pilot is over. In 2026, the competitive divide is no longer defined by who has the most sophisticated vision, but by who moves with the greatest execution velocity. By replacing a culture of permission with a culture of parameters, and rigid stacks with modular architecture, governance shifts from a cage into a catalyst. The cognitive dividend awaits organizations with the pragmatic courage to deploy, the humility to learn in public, and the foresight to build for a future that refuses to stand still. The technology is ready. The only remaining question is whether the culture is brave enough to let it run.
