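Human OS for AI

This paper argues that enterprise AI fails mainly because organizations have not invested in people’s capacity to use AI, not because of the technology itself. It proposes building a “Human OS for AI” so that AI elevates people rather than replacing them, and predicts that organizations that build this system within the next 24 months will gain a decisive advantage.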

Human OS for AI

The Missing Multiplier

January 2026

◈ Thesis

Corporate AI failures share the same root cause: investment in technical capability, not in the human capacity to use it.

It’s a debatable claim. But test it against any stalled pilot, any evaporating productivity gain, any AI tool your people avoid. The pattern holds.

The technology works. The human system to operate it doesn’t exist.

The solution isn’t more GPUs, the latest models, or more powerful agents. It’s the human capacity to use them. What’s missing?

A Human OS for AI.

This paper names the problem, defines a working architecture, and takes a position: AI should elevate people, not eliminate them from org charts.

The prediction: organizations that build a Human OS in the next 24 months will pull irreversibly ahead of those that don’t.

This is a leadership crisis hiding in plain sight.

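Structure of this paper: Thesis, Signal, Inversion, ROI Deficit, Architecture, OS Explained, Six Components, Systemic Risk, Scope, Potential, Evidence, Counterargument, Window, Prediction, Commitment, A Final Declaration, In Summary, My Lens.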

Recommendation: Read in full (~15 minutes). Every section is hyperlinked above for quick reference. See Borrowed Time for context on the urgency to act now.

◉ Signal

Something is wrong.

You feel it in the executive briefings. The slides say “transformation” but the room says “stall.” You see it in the gap between the AI partnerships announced and the AI value realized. You hear it in the questions that don’t get asked — because few know how to ask them yet.

Roughly $300 billion. That’s what enterprises spent on AI last year. The compute is in place. The models are deployed. The licenses are signed.

And still, the returns aren’t there.

Pilots that don’t scale. Productivity gains that evaporate. Tools people avoid. ROI that never materializes.

This isn’t a technology problem. This is a human problem.

Few are treating it like one.

⟲ Inversion

Our team has helped organizations navigate every big technology transition of the digital era — web, social, mobile, cloud. This one is different.

Not because AI is more powerful. It is. Not because it moves faster. It does. But because for the first time, the bottleneck isn’t technical capacity.

It’s human capacity.

Every previous technology wave asked one question: Can we build it?

The bottleneck was technical. Could we write the code, deploy the servers, scale the systems? Investment followed: more engineers, more infrastructure, more technical capability.

AI inverts this. The capability arrives fully formed — models that reason, generate, analyze, create, available to anyone with an API key. The question is no longer can we build it?

It’s can our people use it?

The bottleneck is human. Can we reskill, redesign work, realign incentives?

And the answer, in most organizations, is no. Not because they haven’t invested. Not because they haven’t trained. Not because they haven’t piloted. But because they haven’t built the human systems that make it all work together.

A different kind of investment is required: not more compute, but more readiness, and a culture that makes people excited to use AI.

△ ROI Deficit

Only 25% of AI initiatives delivered expected returns over the past three years (IBM, 2025). Not because the technology failed — because organizations weren’t ready.

The pattern holds. 56% of CEOs report no revenue or cost benefit from AI — attributing the gap to skipped fundamentals like data quality, not weak technology. Rushed projects are backfiring (PwC, 2026).

The hype is loud. The results are thin. What’s missing isn’t more AI. It’s the foundational work, beyond rapid adoption, that ROI depends on.

This is the AI ROI Deficit.

It’s the gap between AI investment and AI returns. The quantifiable cost of running powerful technology through unprepared organizations. The missing multiplier that explains why capability doesn’t convert to value.

The formula is simple:

Value = AI Capability × Human Readiness

This isn’t metaphor. It’s observable. Organizations with 10x AI capability and near-zero human readiness produce less value than organizations with 2x capability and high human readiness. The multiplier dominates the equation.

When human readiness is near zero, it doesn’t matter how powerful the AI is. The human multiplier kills the equation.
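The comparison above can be worked through with a few lines of arithmetic. This is a minimal sketch, not a measurement model: the 0-to-1 readiness scale and the scores 0.05 and 0.9 are assumptions chosen only to stand in for "near-zero" and "high" readiness, and the function name `value` is hypothetical.

```python
def value(ai_capability: float, human_readiness: float) -> float:
    """Value = AI Capability x Human Readiness, as stated in the text."""
    return ai_capability * human_readiness


# Assumed readiness scores on a 0-1 scale (illustrative, not from the paper).
NEAR_ZERO_READINESS = 0.05
HIGH_READINESS = 0.9

# 10x capability with near-zero readiness vs. 2x capability with high readiness.
high_capability_org = value(ai_capability=10.0, human_readiness=NEAR_ZERO_READINESS)
high_readiness_org = value(ai_capability=2.0, human_readiness=HIGH_READINESS)

print(f"10x capability, near-zero readiness: {high_capability_org:.2f}")
print(f"2x capability, high readiness:       {high_readiness_org:.2f}")
print("High-readiness org produces more value:", high_readiness_org > high_capability_org)
```

Under these assumed scores, the organization with one fifth of the capability still produces more value, because the readiness term scales the whole product.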

The deficit isn’t a bug in the system. It is the system — or rather, the absence of one.

⬡ Architecture

If human readiness is the multiplier, what does it require?

An architecture — two systems that work together.

Both systems must connect to create VALUE. Without the Human OS, the connection breaks.

AI capability without human readiness is waste. Human readiness without AI capability has nothing to multiply.

⌘ OS Explained

What’s needed is a new operating system. Not for the machines. For the people.

The infrastructure that sits between AI capability and organizational value — the layer that turns investment into returns.

This is the Human OS for AI.

You know when it’s missing. It’s the analyst who built a brilliant workflow on her laptop but can’t get anyone else to use it. It’s the pilot that proved 10x productivity but died when the champion changed roles. It’s the Copilot license that cost millions while employees use ChatGPT on their phones — because the Copilot premise didn’t match reality.

The Human OS isn’t training. The Human OS is the system of work.

What makes this different from Kotter’s 8-Step change model, Rogers’ Diffusion of Innovations, or any other change management framework? Those frameworks address adoption: they assume the technology works and the challenge is getting humans to accept it. Train people. Communicate the vision. Overcome resistance.

The Human OS isn’t about adoption. It’s about building a new operating layer designed specifically for human-AI collaboration. No prior framework integrates AI capability and human systems into a single architecture. This is new territory.

⬢ Six Components

The Human OS is a flywheel of six components. Each builds momentum for the others. Miss one, the wheel stops. What follows aren’t case studies. They’re archetypes.

- Talent and Development

The flywheel starts with people. Hiring, reskilling, and role redesign for human-AI collaboration. Without a talent strategy, the other five components have no foundation.

Organizations that ignore AI talent needs will find themselves unable to staff AI programs, unable to retain people who want to work at the frontier, and unable to evolve as capability advances.

What’s your AI talent strategy? If the answer is “we’re working on it,” you’re already behind.

- Workflow Ownership

Every AI-integrated workflow needs a named owner with authority to build it. Not a committee. Not shared accountability. A person who wakes up responsible for making it work.

Without ownership, AI tools become everyone’s job and no one’s responsibility.

Who owns your AI workflows? If the answer is unclear, you don’t have a Human OS.

- Expert Interfaces

AI tools must fit the work, not the other way around. Not generic chatbots. Tools built for specific jobs. Contextual interaction. Purpose-built agents. Templates shaped to real tasks.

When your enterprise AI requires people to leave their workflow to use it, they won’t use it. Full stop.

Interface design is operating system design.

- Rituals and Cadence

Capability without rhythm decays. The Human OS requires operating cadence — weekly cycles that make improvement inevitable. Stand-ups. Retrospectives. Metric reviews. Shared learning sessions.

These aren’t bureaucratic overhead. They’re the heartbeat that keeps the system alive.

Organizations that treat AI as a one-time deployment will watch their gains evaporate. The Human OS runs on rhythm, not heroics.

- Incentives and Identity

People don’t engage with systems that diminish them. If AI threatens expertise rather than amplifying it, resistance is rational.

The Human OS must make participation rewarding and exciting.

Not through mandates — through genuine value creation. Expertise becomes leverage, not liability. Status is preserved. Identity is enhanced.

The organizations extracting AI value have figured out how to make their people want to use it.

- Expectations and Air Cover

The Human OS runs on clear expectations (what’s allowed, what’s not, and what success looks like) and air cover from leadership while teams figure it out. Guardrails define the boundaries. Measurement proves the value. Without expectations, guardrails, and measurement together, you can’t govern risk or defend the investment.

If you can’t measure the value, you can’t defend the investment. If you can’t govern the risk, you can’t scale the deployment.

⚠ Systemic Risk

The flywheel slows without all six in motion. Here’s how risk re-enters the picture:

The components reinforce each other. Talent without ownership means capable people with no accountability. Ownership without rituals burns out champions. Interfaces without incentives go unused. Guardrails without ownership become bureaucratic obstruction.

This is a system. It must be designed and built as one.
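The reinforcement pairs described above can be sketched as a simple lookup. This is an illustration only: the dictionary, the function name `predicted_failures`, and the lowercase component labels are assumptions introduced here, and the failure descriptions paraphrase the four pairings in the text.

```python
# Each key is (component in place, component missing); each value is the
# failure mode the text associates with that pairing.
FAILURE_MODES = {
    ("talent", "ownership"): "capable people with no accountability",
    ("ownership", "rituals"): "burned-out champions",
    ("interfaces", "incentives"): "interfaces that go unused",
    ("guardrails", "ownership"): "bureaucratic obstruction",
}


def predicted_failures(in_place: set[str]) -> list[str]:
    """List failure modes where one component exists but its partner is missing."""
    return [
        outcome
        for (present, missing), outcome in FAILURE_MODES.items()
        if present in in_place and missing not in in_place
    ]


# Hypothetical organization: strong talent, interfaces, and incentives,
# but no named workflow owner.
example = predicted_failures({"talent", "interfaces", "incentives"})
print(example)
```

The lookup covers only the pairings the text names. A fuller model would need the remaining combinations, which the paper does not spell out.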

⊕ Scope

Who owns the Human OS for AI?

The obvious answer: HR. This is workforce redesign. Talent strategy. Role transformation. People leaders understand capability gaps, resistance patterns, and the identity work that adoption requires.

But AI is a silo buster. An advancement in one function applies to another. A prompt library built for legal works for compliance. A workflow redesign in finance spreads to operations. An interface breakthrough in customer service transforms sales. Plans that stay in one area die in one area.

Previous technology waves allowed functional silos. Cloud migration could live in IT. Digital marketing could live in marketing. AI doesn’t work that way. The capability is horizontal. The opportunity is horizontal. The threat is horizontal.

HR sits at the center. Not at the top.

The Human OS requires distributed responsibility:

HR brings the framework. The ELT brings the mandate. Functional leads bring the context. Business units bring the execution.

One architect. Many builders. Shared accountability.

Building the Human OS is in scope for every leader accountable for turning AI spend into value.

↗ Potential

When human readiness matches AI capability, the math changes. Everybody can make a difference with AI in their hands.

The analyst who spent three weeks on market research now spends three days — and invests the difference thinking about what the data means. The operations lead who fought spreadsheets now sees patterns across entire supply chains. The product manager who spent weeks on specs now tests ideas in days.

This isn’t automation replacing work. It’s amplification creating new categories of it.

Realizing this potential requires capacity building at three levels:

Cloud was an infrastructure project. Mobile was a channel extension. AI is different. It touches every role, every workflow, every decision — and demands capacity at all three levels simultaneously.

▣ Evidence

Research is beginning to quantify what practitioners already know: when human readiness matches AI capability, value multiplies.

The most rigorous evidence comes from a field experiment by Procter & Gamble and Harvard Business School. They put 776 employees through a live product development challenge. Some worked alone, some in pairs, some with AI, some without. The results:

The pattern confirms the multiplier.

The gap isn’t about the AI. It’s about who is most ready to use it.

⇋ Counterargument

The strongest objection is obvious: what if AI gets good enough that human readiness stops mattering?

If models become sufficiently capable — reasoning, planning, executing without human direction — then the Human OS becomes irrelevant. The bottleneck vanishes. Organizations that waited would catch up instantly by deploying superior AI.

This objection deserves a direct response.

First, capability without integration is still waste. Even if AI can do the work, someone has to know what work to point it at, how to evaluate the output, and when to override the machine. That’s human readiness. It doesn’t disappear — it shifts.

Second, the objection ignores what gets destroyed along the way. Organizations that bypass human readiness in favor of pure automation lose more than efficiency — they lose the people who know why the system was built that way, who read between the lines with customers, who sense when something’s wrong before the dashboard turns red. Automate around them and you lose the organization’s immune system.

Third, the organizations building human readiness develop the judgment to know when AI should and shouldn’t act autonomously. They build the muscle to adapt as capability changes. Those without a Human OS won’t suddenly develop that judgment when better models arrive.

Fourth, the window isn’t about permanent advantage — it’s about compounding. Organizations that spend this window learning how to integrate AI with human work will compound that learning. Those that wait will start from zero.

The counterargument assumes a discontinuity: AI leaps to full autonomy, humans become irrelevant. The more likely path is continuous improvement, where humans and AI co-evolve. The Human OS is built for that path.

⧖ Window

The window to build a system that keeps people in sync with AI is closing faster than most leaders realize.

Every month, the capability gap widens. AI systems compound — each output becomes feedback, each update makes the next one smarter. Inside the machine, speed builds on itself.

Organizations don’t compound. They deliberate. They align. They wait for consensus. They operate on human time while the machines operate on algorithmic time.

This tempo mismatch creates fractures. Teams that surge ahead while leadership hesitates. Leaders with vision but organizations without capacity. Talent that leaves for places where they’re allowed to move.

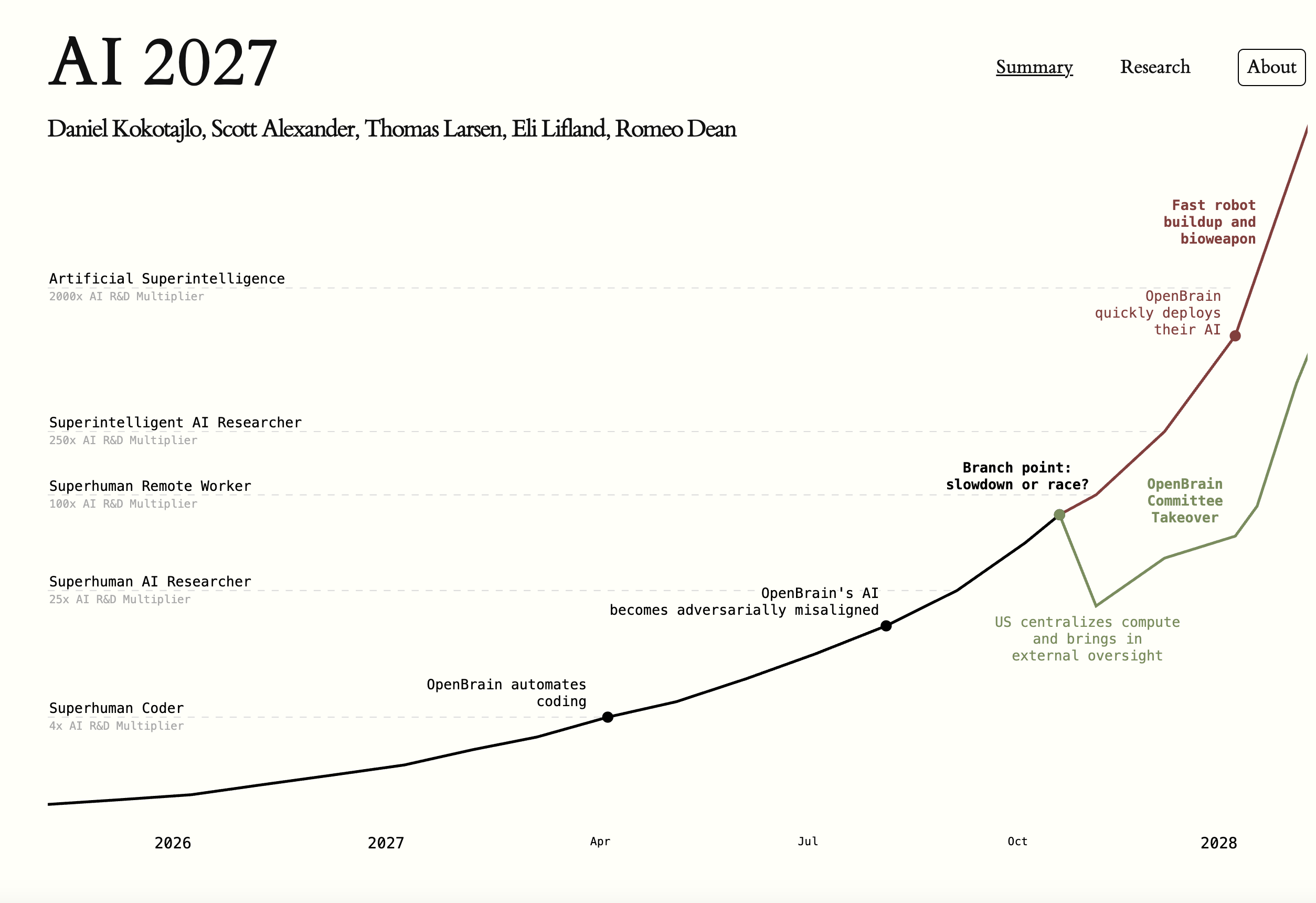

The AI 2027 scenario — from researchers and operators at the frontier — points to roughly two years to build human readiness before competitive separation locks in. These are timelines from the people building the systems.

At Davos 2026, Anthropic CEO Dario Amodei projected “Nobel-level” AI intelligence and the total replacement of software engineers within 6 to 12 months. Google DeepMind CEO Demis Hassabis put 50% odds on human-level AI by 2030. Both warned of a “lag” in labor statistics that masks a crisis already in motion.

The cost of delay is measurable:

Every quarter you wait, the deficit compounds.

There is no catching up later. The divergence is permanent. Build now or fall behind forever.

◎ Prediction

The intelligence is compounding. It’s available to everyone. The gap isn’t access — it’s absorption.

Organizations that build the Human OS will integrate wave after wave of capability, each one multiplying the last. Those that don’t will watch the same waves crash against unprepared systems. The divergence has already begun.

Two categories are emerging:

These outcomes are measurable. Track them.

◆ Commitment

Everything here rests on a singular conviction: care for people.

Not as resources to optimize. As humans.

People who fear what AI means for their work and identity. People with questions no one is answering. People with habits upended and curiosity they’re afraid to follow.

We have to respect what’s on people’s minds.

The disorientation is real. The anxiety is rational. The sense that the ground is shifting — that’s not resistance to change. That’s an honest read of the situation.

The organizations that pull ahead won’t have the most sophisticated models. Models commoditize. They’ll have figured out how to make humans and AI genuinely better together.

This requires a commitment: human agency over human automation.

Humans directing AI, not displaced by it. Expertise amplified, not eliminated.

Automation without agency creates fragility — systems that work until they don’t, with no one who understands why. Agency with automation creates resilience — people who understand the tools they wield, organizations that adapt because their people can think with them.

The choice is being made right now, in every organization, whether they realize it or not.

▶ A Final Declaration

If you believe in the potential presented here, you are on Team Human.

You believe that AI should elevate people, not eliminate them.

That capability should enable human growth, not destroy it. That the measure of any technology is what it enables people to become.

You know the AI ROI Deficit is a solvable problem — not through better models, but through better systems for people to operate.

You see the ticking clock as an opportunity — a window to build what’s needed before the divergence becomes irreversible.

You commit your talents and resources to building the Human OS. A new way of thinking about what humans and machines can accomplish together.

≡ In Summary

This paper makes three claims:

Claim 1: AI capability isn’t the bottleneck. Human readiness is. Test this against any failed AI initiative. The pattern holds.

Claim 2: Human readiness requires a system — the Human OS — with six specific components: Talent, Ownership, Interfaces, Rituals, Incentives, and Expectations. Organizations missing any component will experience predictable failure modes.

Claim 3: Organizations have a narrow window to build the Human OS before competitive divergence becomes irreversible. Those that build will compound value. Those that don’t will compound deficit.

The AI ROI Deficit is the defining challenge of our time. The Human OS is the architecture that solves it. The window to build is now.

The question isn’t whether AI will transform organizations. It will. The question is whether humans will direct that transformation — or be subject to it.

We choose to direct it. We choose agency over automation. We choose to build the Human OS.

→ My Lens

I’ve spent three decades advising Fortune 500 leaders on technology transformation. In 2018, I built one of the first AI-native labs for corporate leaders — years before generative AI went mainstream. In 2024, I published Perspective Agents, anticipating how generative AI and agent proliferation would reshape the workplace, media, and culture.

This paper synthesizes observations from 300+ executive engagements and assignments over the past three years. I now work with others who share a similar vision at Andus Labs, exploring the intersection of humans, machines, and work.

Chris Perry // Andus Labs
